I’ve been digging into Mira Network and the $MIRA token lately, not from a price-chart perspective, but from a how-does-this-actually-work angle. I’m trying to understand the architecture, the logic, and where the token fits into the machine.

I researched for almost an hour, and one thing became very clear...

AI is moving fast. But trust? That’s struggling to keep up.

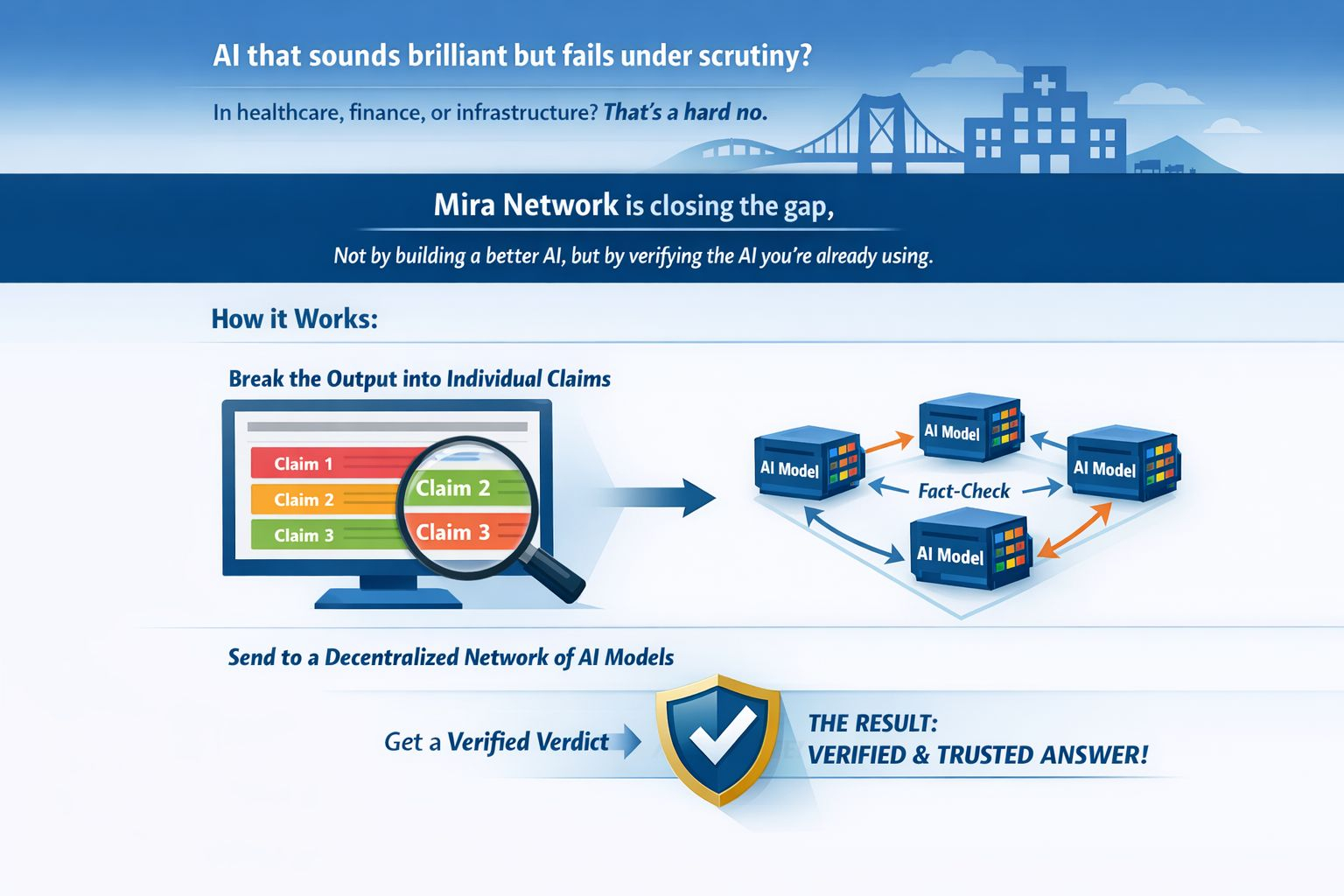

We have all seen it. AI models that sound brilliant but fall apart under scrutiny. Hallucinations, bias, confident wrong answers. In a chatbot? Annoying but manageable. In healthcare, finance, or infrastructure sectors? That’s a hard no.

That’s the gap #Mira Network is trying to close. Not by building a better AI, but by building a layer that verifies the AI you’re already using.

The concept is simple in theory, but ambitious in execution:

Instead of trusting one model’s output, Mira breaks that output into individual claims. Those claims get passed around a decentralized network of AI models—each one effectively fact-checking the others. The result isn’t just an answer. It’s a verdict.

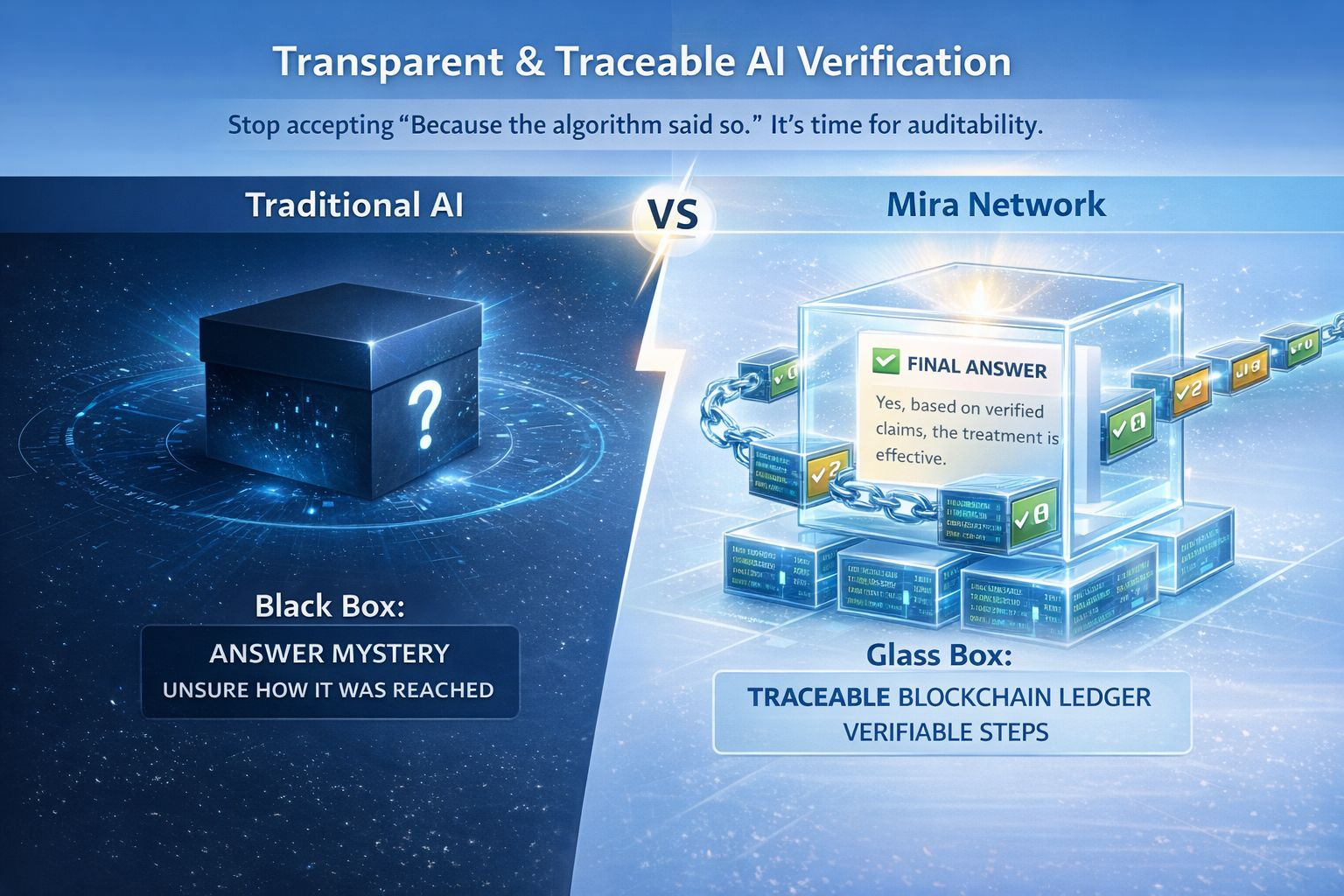
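To make that flow concrete for myself, I wrote a toy version of it. This is only a sketch under my own assumptions, not Mira's actual protocol: the sentence-based claim splitter, the three stand-in "models" (`strict`, `lenient`, `skeptic`), and the two-thirds `threshold` are all hypothetical names and values I picked for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List

# A verifier is any callable that labels a claim "true" or "false".
# In a real network these would be independent AI models; here they are toys.
Verifier = Callable[[str], str]


def split_into_claims(output: str) -> List[str]:
    """Naive splitter: treat each sentence as one claim (an assumption)."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> Dict[str, str]:
    """Return a verdict per claim, based on agreement among the verifiers."""
    verdicts: Dict[str, str] = {}
    for claim in split_into_claims(output):
        votes = Counter(verify(claim) for verify in verifiers)
        label, count = votes.most_common(1)[0]
        # Accept the leading label only if enough verifiers agree on it.
        agreed = count / len(verifiers) >= threshold
        verdicts[claim] = label if agreed else "no consensus"
    return verdicts


# Hypothetical stand-ins for three different models.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict(claim: str) -> str:
    return "true" if claim in KNOWN_FACTS else "false"


def lenient(claim: str) -> str:
    return "true"


def skeptic(claim: str) -> str:
    return "false" if "Moon" in claim else "true"


result = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.",
    [strict, lenient, skeptic],
)
```

Here the first claim comes back "true" (all three agree) and the second comes back "false" (two of three reject it). The point is the shape of the result: one verdict per claim, not one answer per prompt.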

What makes this interesting to me is the transparency piece.

Every validation step is recorded on-chain. So if you’re building on top of Mira, you’re not just getting an output—you’re getting a traceable path of how that output was reached. In a world where “the algorithm said so” is no longer a good enough excuse, that kind of auditability starts to matter.
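I don't know what Mira's on-chain records actually look like, so take this as a rough illustration of the general idea only: an append-only log where each record commits to the one before it. The field names (`claim`, `verifier`, `verdict`, `prev_hash`) and the helpers `append_record` and `is_intact` are my own assumptions, and a plain Python list stands in for the chain.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: Dict[str, str]) -> str:
    """Hash a record body deterministically (sorted keys, UTF-8 JSON)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(
    log: List[Dict[str, str]], claim: str, verifier: str, verdict: str
) -> Dict[str, str]:
    """Append one validation step, linked to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "claim": claim,
        "verifier": verifier,
        "verdict": verdict,
        "prev_hash": prev_hash,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def is_intact(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and link; any edit to history breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


audit_log: List[Dict[str, str]] = []
append_record(audit_log, "Paris is the capital of France", "model-a", "true")
append_record(audit_log, "Paris is the capital of France", "model-b", "true")
```

If someone later flips a verdict in the first record, `is_intact(audit_log)` returns `False`, because the stored hash no longer matches the contents. That is the property that makes a "traceable path" more than a log file you have to take on faith.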

Then there’s the neutrality factor.

Mira isn’t tied to one model provider. It’s model-agnostic by design. That means OpenAI models can validate outputs from open-source ones, and vice versa. It creates a kind of cross-examination dynamic that, in theory, makes the whole system more robust.

But let’s be honest—this doesn’t come without questions.

How do you scale that kind of verification without bottlenecks? How do you design incentives so validators play fair, not fast? And how does governance evolve when the rules of verification need to change?

These aren’t dealbreakers. They’re just the hard part of building something that actually matters.

What Mira is really doing is shifting the conversation from “how smart is this AI?” to “can we actually trust it?”

And if verification becomes the price of entry for real-world AI deployment, networks like this might not just be useful; they might be unavoidable.

@Mira - Trust Layer of AI #Mira $MIRA