One of the misjudgments currently being made in AI is the idea that higher-quality answers will automatically lead to increased trust. They don’t. A response can be articulate, structured and convincing, and still be wrong. Polish is not what builds trust. Process is. That is why formal verification workflows are becoming one of the most significant shifts in AI infrastructure.

Looking over the verification workflow behind Mira, what stands out is not only the technology but also the discipline of the system. Trust is not treated as an abstract promise. It is engineered step by step. From the moment content is submitted to the moment a cryptographic certificate is delivered, the whole process is designed to turn AI output into something measurable and defensible.

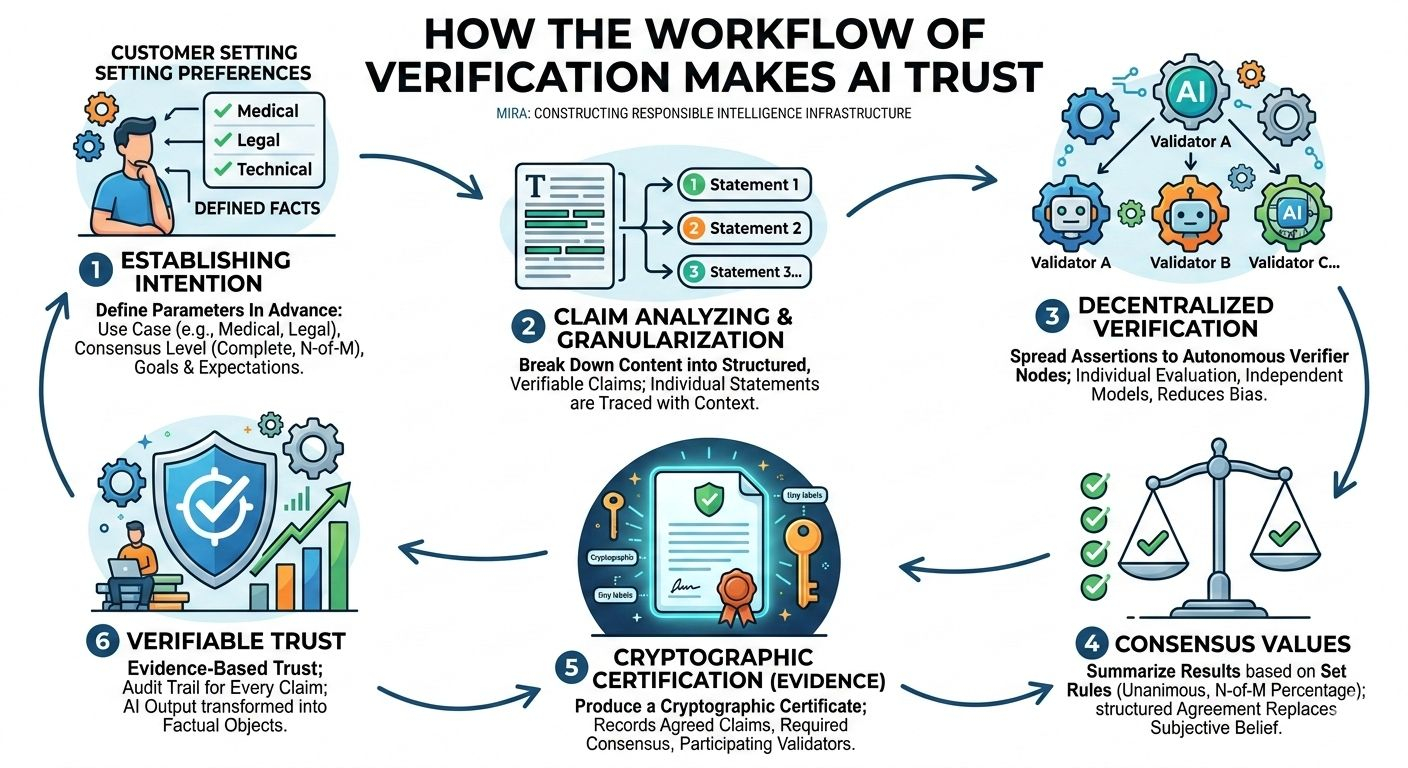

The process starts with intent. A customer does not just submit content and hope that something gets checked. They define the domain and their expectations in advance. Is the material medical? Legal? Technical? How much agreement is needed: full consensus or an N-of-M threshold? This matters more than it appears. By setting verification parameters at the outset, the system matches the level of reliability to the use case. A casual blog post does not require the same level of verification as compliance documentation or risk modeling. The workflow adapts accordingly.
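As a rough illustration of what "setting verification parameters at the outset" could look like, here is a minimal Python sketch. The class name `VerificationRequest` and its fields are hypothetical and chosen for this example; they are not taken from Mira's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationRequest:
    """Hypothetical request object: content plus the rules it must pass."""
    content: str
    domain: str               # e.g. "medical", "legal", "technical"
    required_agreements: int  # the N in N-of-M
    total_validators: int     # the M in N-of-M

    def __post_init__(self):
        # Reject thresholds that could never be met or that require nothing.
        if not 1 <= self.required_agreements <= self.total_validators:
            raise ValueError("required_agreements must be between 1 and total_validators")

    @property
    def is_unanimous(self) -> bool:
        # Full consensus is simply the special case N == M.
        return self.required_agreements == self.total_validators


# A casual post tolerates one dissenting validator; a compliance document does not.
blog_post = VerificationRequest("Some blog text", "general", 2, 3)
compliance_doc = VerificationRequest("Some policy text", "legal", 3, 3)
```

The point of the sketch is that the strictness lives in the request itself, so the same pipeline can serve both low-stakes and high-stakes content.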

After submission, the material is not treated as a single solid block. Instead, it is broken down into structured, verifiable claims. This is a very important difference. Checking a complete paragraph is inefficient and opaque. Breaking it into logical statements makes verification granular. Every statement can be traced. Relationships among claims are preserved, so context is not lost while each claim can still be evaluated independently. This turns verification from a superficial check into something closer to an inline audit.
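To make the idea of traceable claims with preserved relationships concrete, here is a small Python sketch. It is only an assumption-laden toy: the `Claim` type and `split_into_claims` function are hypothetical names, the sentence splitter is deliberately naive, and linking each claim to the one before it is a stand-in for whatever relationship tracking a real system would use.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Claim:
    claim_id: str                    # stable ID so each claim can be traced
    text: str
    depends_on: Tuple[str, ...] = () # IDs of claims that give this one context


def split_into_claims(content: str) -> List[Claim]:
    """Toy decomposition: one claim per sentence, each linked to the previous one."""
    sentences = [s.strip() for s in content.split(".") if s.strip()]
    claims: List[Claim] = []
    for index, sentence in enumerate(sentences, start=1):
        previous = (claims[-1].claim_id,) if claims else ()
        claims.append(Claim(f"c{index}", sentence, previous))
    return claims


claims = split_into_claims("Aspirin is an NSAID. It can thin the blood.")
```

Here the second claim ("It can thin the blood") keeps a pointer to the first, so a verifier can resolve what "It" refers to while still judging the statement on its own.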

The next step introduces decentralization. The claims are distributed to independent verifier nodes. Several models evaluate each claim separately, without seeing one another's verdicts. This independence is very important. It reduces bias and prevents any single model from dominating. Every validator examines the statement and judges whether it is factually and logically sound under the specified domain conditions.
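The independence property described above can be sketched in a few lines of Python. Everything here is hypothetical: the `collect_votes` function, the node names, and the three toy verifiers are placeholders for real models, which would be far more sophisticated.

```python
from typing import Callable, Dict

# A verifier sees only the claim text and the domain, never another node's vote.
Verifier = Callable[[str, str], bool]


def collect_votes(claim_text: str, domain: str, verifiers: Dict[str, Verifier]) -> Dict[str, bool]:
    """Ask every verifier for its own verdict and record it under the node's name."""
    return {name: verify(claim_text, domain) for name, verify in verifiers.items()}


# Toy stand-ins for independent models (assumptions for illustration only).
def keyword_verifier(claim_text: str, domain: str) -> bool:
    return "NSAID" in claim_text


def length_verifier(claim_text: str, domain: str) -> bool:
    return len(claim_text) < 200


def domain_verifier(claim_text: str, domain: str) -> bool:
    return domain == "legal"


votes = collect_votes(
    "Aspirin is an NSAID",
    "medical",
    {"node-a": keyword_verifier, "node-b": length_verifier, "node-c": domain_verifier},
)
```

Because each function receives the same inputs and nothing else, no verdict can be influenced by another node's answer.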

Evaluation results are then aggregated according to the required level of consensus. This is the point at which reliability becomes measurable. If the requirement is unanimous agreement, all validators must agree. If the requirement is N-of-M, at least N of the M validators must concur before the claim is accepted. This mechanism replaces subjective belief with structured agreement. It is not about which model sounds most sure; it is about how many independent systems arrive at the same conclusion.
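The N-of-M rule is simple enough to write down directly. The following Python sketch uses a hypothetical function name, `reaches_consensus`, and treats each validator's verdict as a plain boolean.

```python
from typing import Sequence


def reaches_consensus(votes: Sequence[bool], required: int) -> bool:
    """Return True when at least `required` of the M votes are in agreement.

    Unanimity is the special case required == len(votes).
    """
    if not 1 <= required <= len(votes):
        raise ValueError("required must be between 1 and the number of votes")
    # Booleans sum as 0/1, so this counts the agreeing validators.
    return sum(votes) >= required


votes = [True, True, False]
two_of_three = reaches_consensus(votes, 2)  # accepted under 2-of-3
unanimous = reaches_consensus(votes, 3)     # rejected under 3-of-3
```

The same set of votes passes under a 2-of-3 threshold and fails under unanimity, which is exactly why the threshold has to be fixed before verification begins.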

The last step is possibly the most powerful one: certification. The workflow does not just reply with a “verified” label; it produces a cryptographic certificate. This certificate records the claims that were agreed upon, the consensus threshold that was required, and the validators involved. In other words, it generates evidence. The customer does not simply get a result; they are shown evidence that the result went through a transparent verification process.
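A tamper-evident record of this kind can be approximated with Python's standard library. This is a sketch under loud assumptions: the article does not describe Mira's certificate format, so the field names are invented, and an HMAC over a shared key is used here as a simple stand-in for whatever digital signature scheme a real deployment would use.

```python
import hashlib
import hmac
import json


def _encode(payload: dict) -> bytes:
    # Canonical JSON so the same payload always produces the same bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue_certificate(claims: dict, required: int, validators: list, key: bytes) -> dict:
    """Bundle what was verified, under which threshold, and by whom."""
    payload = {
        "claims": claims,                  # claim ID -> accepted or not
        "required_agreements": required,   # the threshold that was applied
        "validators": sorted(validators),  # who took part
    }
    mac = hmac.new(key, _encode(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "mac": mac}


def check_certificate(certificate: dict, key: bytes) -> bool:
    """Recompute the MAC and compare in constant time."""
    expected = hmac.new(key, _encode(certificate["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, certificate["mac"])


key = b"demo-key-not-for-production"
cert = issue_certificate({"c1": True, "c2": True}, 2, ["node-b", "node-a", "node-c"], key)
valid_before = check_certificate(cert, key)

# Quietly lowering the recorded threshold invalidates the certificate.
cert["payload"]["required_agreements"] = 1
valid_after = check_certificate(cert, key)
```

The useful property is that the threshold and validator list are bound to the result: changing any of them after the fact makes the check fail.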

That difference is monumental. Most existing AI systems do not have audit trails. When something goes wrong, it is hard to trace responsibility. A verification workflow documents every step. Every claim has a status. Every agreement level is recorded. This turns an isolated AI output into an auditable record.

What this demonstrates is that Mira is not simply building smarter responses. It is building accountable intelligence infrastructure. It puts a systematic gateway between generation and credibility. Rather than asking users to trust a brand name or the size of a model, it offers cryptographic and consensus-based guarantees.

As AI systems operate in higher-stakes scenarios (capital management, medical decision-making, code execution, or autonomous agents), this tier of structured verification becomes necessary. The greater the influence of decisions made by AI, the more robust the auditable trust mechanisms need to be.

We commonly discuss AI scaling in terms of performance and capability. But scaling also needs reliability. It demands systems that can demonstrate the validity of their outputs. Mira's verification process shows what such a future could look like: defined intent, claim decomposition, distributed verification, consensus thresholds, and cryptographic certification.

AI does not gain credibility by sounding smart. It becomes credible when its outputs can be verified.

And that is the shift we are now starting to witness: from answers to evidence, from confidence to consensus, from output to verifiable trust.

@Mira - Trust Layer of AI #Mira $MIRA