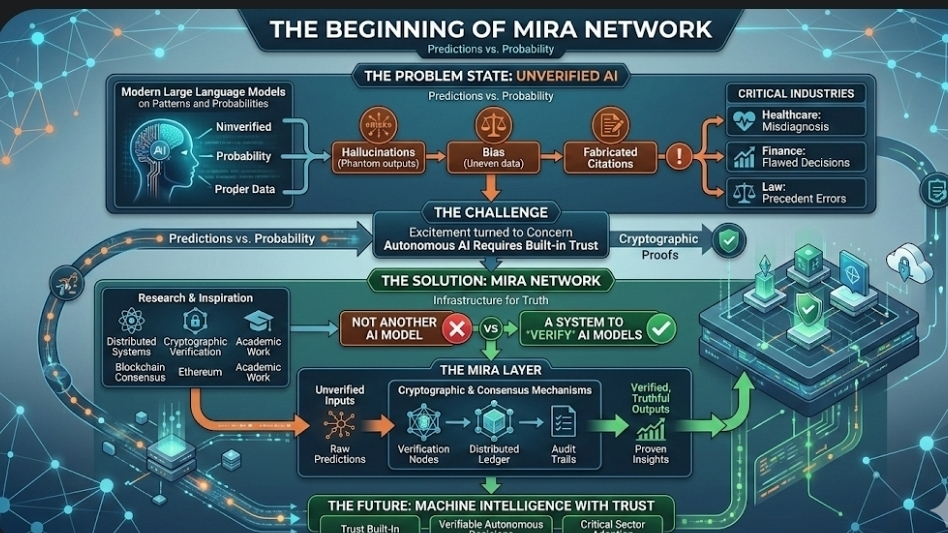

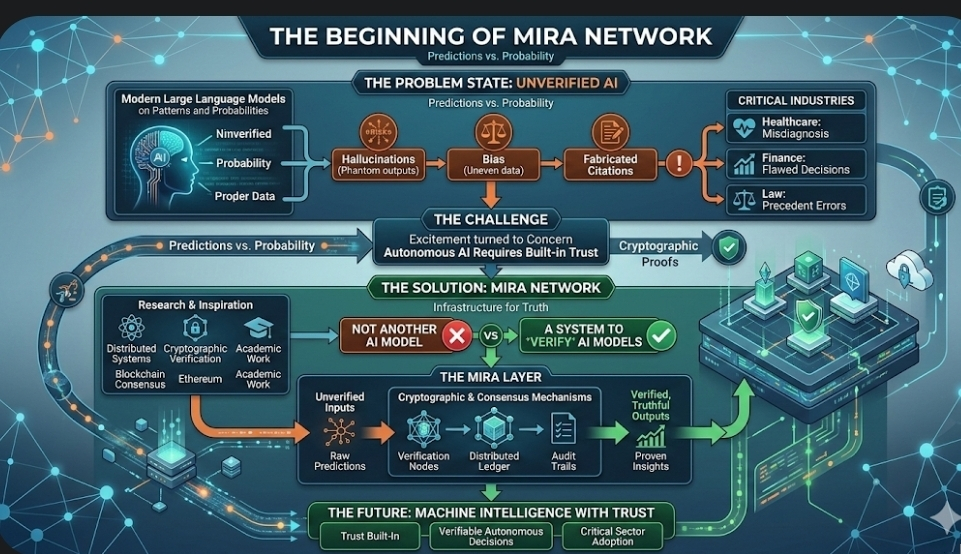

There was a moment in the evolution of artificial intelligence when excitement quietly turned into concern. AI systems were writing essays, diagnosing diseases, generating code, even making financial suggestions. They sounded confident. They looked intelligent. But sometimes, they were wrong. Not slightly wrong. Completely wrong. I’m talking about hallucinations, fabricated citations, biased outputs, and confident misinformation. The deeper AI entered critical industries like healthcare, finance, and law, the more dangerous those errors became.

That’s where Mira Network begins.

The creators saw something many of us felt but couldn’t fix. If AI becomes autonomous, if it starts making decisions without constant human supervision, trust cannot be optional. It has to be built into the system itself. They’re not trying to build another AI model. They’re building a way to verify AI models. That difference changes everything.

Mira was born from research across distributed systems, cryptographic verification, and blockchain consensus mechanisms. The team drew inspiration from decentralized networks like Ethereum and academic work in verifiable computing. We’re seeing a shift where infrastructure matters more than hype. Mira is infrastructure for truth in machine intelligence.

Why AI Needed a New Layer of Trust

Modern large language models are powerful because they predict patterns in massive datasets. But prediction is not understanding. It’s probability. When a model generates an answer, it doesn’t “know” it’s true. It calculates likelihood. If that likelihood is based on flawed data or misinterpreted context, the result becomes misinformation dressed as certainty.

Once AI is embedded in autonomous vehicles, medical diagnosis systems, legal analysis platforms, or financial trading bots, small errors can become catastrophic ones. The Mira team understood that centralized verification, such as one company saying “trust our model,” was not enough.

They asked a deeper question. What if verification itself could be decentralized? What if AI outputs could be treated like transactions on a blockchain, validated through consensus instead of authority?

That idea became Mira Network.

How the System Actually Works

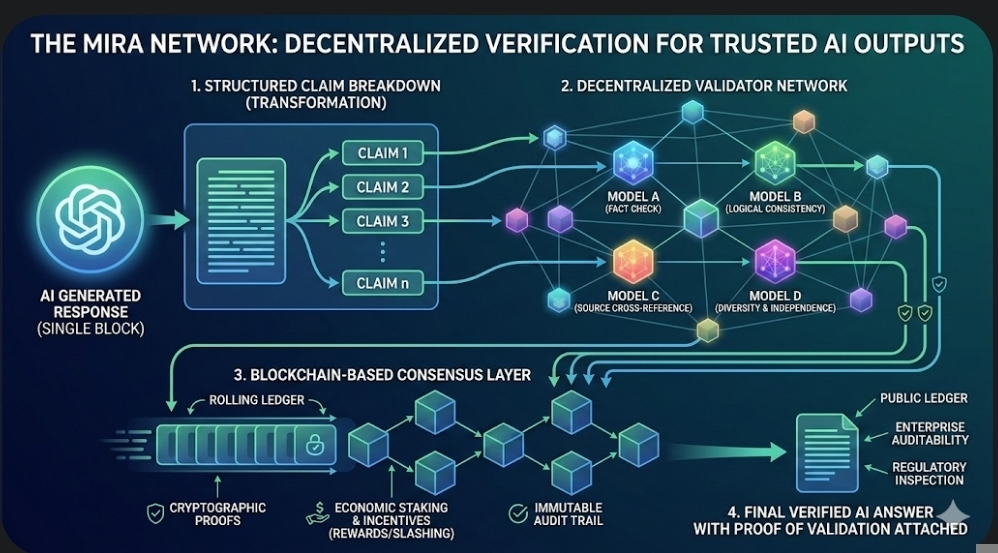

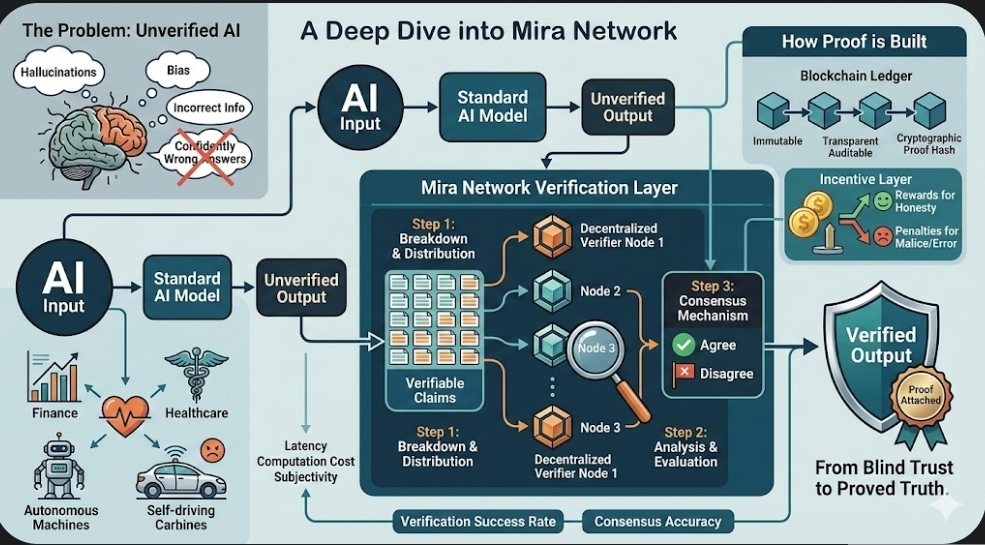

At its core, Mira transforms AI outputs into verifiable claims.

When an AI model generates a response, Mira does not accept the full paragraph or analysis as a single block of information. Instead, it breaks the output into smaller, structured claims. Each claim becomes a unit that can be tested, checked, or challenged.
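To make the idea of claim decomposition concrete, here is a minimal sketch. It assumes, purely for illustration, that one sentence equals one claim. The `Claim` type and `split_into_claims` function are hypothetical names, not part of any published Mira interface, and a real system would likely need something smarter than punctuation-based splitting.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical structure)."""
    index: int
    text: str


def split_into_claims(output: str) -> list[Claim]:
    """Naively split a model response into sentence-level claims.

    Assumption: each sentence is treated as one claim. This is only a
    stand-in for whatever decomposition a real verifier would use.
    """
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    non_empty = [s for s in sentences if s]
    return [Claim(index=i, text=s) for i, s in enumerate(non_empty)]


if __name__ == "__main__":
    response = "Paris is the capital of France. It has about two million residents."
    for claim in split_into_claims(response):
        print(claim.index, claim.text)
```

The point of the structure is that each `Claim` can be handed to a different validator and judged on its own, instead of the whole response passing or failing as one block.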

These claims are distributed across a decentralized network of independent AI validators. They’re separate models, sometimes built by different providers, trained differently, optimized differently. This diversity is intentional. If all validators were identical, systemic bias would remain.

Each validator independently evaluates the claim. Some check factual accuracy. Some cross-reference data sources. Some evaluate logical consistency. The results are then submitted to a blockchain-based consensus layer.

Through cryptographic proofs and economic staking mechanisms, validators are incentivized to provide accurate assessments. If a validator behaves dishonestly or inaccurately, it risks losing staked tokens. If it behaves correctly, it earns rewards.

This creates what’s known in distributed systems as a trustless environment. No single entity controls the truth. Consensus emerges from aligned incentives and cryptographic guarantees.
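The incentive loop described above can be sketched in a few lines. Everything here is an assumption made for illustration: the stake-weighted two-thirds threshold, the flat reward, the 10% slashing rate, and the names `Validator` and `settle_claim`. The article does not specify Mira's actual consensus rule or parameters.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # tokens the validator has put at risk


def settle_claim(validators, votes, threshold=2 / 3, reward=1.0, slash_rate=0.1):
    """Settle one claim by stake-weighted supermajority (illustrative rule).

    votes maps validator name -> True (claim holds) or False (claim fails).
    Returns True or False when consensus is reached, or None when neither
    side reaches the threshold; in that case no stakes change.
    """
    total = sum(v.stake for v in validators)
    if total <= 0:
        raise ValueError("total stake must be positive")
    yes = sum(v.stake for v in validators if votes[v.name])

    if yes / total >= threshold:
        verdict = True
    elif (total - yes) / total >= threshold:
        verdict = False
    else:
        return None

    for v in validators:
        if votes[v.name] == verdict:
            v.stake += reward  # agreed with consensus: earn a reward
        else:
            v.stake -= v.stake * slash_rate  # disagreed: lose part of the stake
    return verdict


if __name__ == "__main__":
    pool = [Validator(n, 100.0) for n in ("a", "b", "c", "d")]
    result = settle_claim(pool, {"a": True, "b": True, "c": True, "d": False})
    print(result, [(v.name, v.stake) for v in pool])
```

Note that this sketch penalizes any validator that disagrees with the majority, which is not the same thing as being wrong. How a real network distinguishes honest dissent from dishonesty is a harder design question than this example captures.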

The final output becomes not just an AI answer, but a verified AI answer with proof of validation attached.

Why the Creators Made These Design Choices

The decision to use blockchain was not about hype. It was about immutability and incentive alignment.

Blockchain provides a public ledger. Once verification results are recorded, they cannot be altered without consensus. This ensures auditability. Enterprises can trace how a decision was verified. Regulators can inspect the process. Transparency becomes part of the architecture.
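A toy version of that auditability property is a hash chain: each entry commits to the one before it, so editing an old record breaks every later link. The sketch below is a single-process stand-in, not a blockchain and not Mira's ledger format. `VerificationLog` and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the entry before the first one


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the previous entry's hash."""
    body = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class VerificationLog:
    """Append-only log where every entry commits to its predecessor."""

    def __init__(self):
        self.entries = []  # list of (record, entry_hash) pairs

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else GENESIS
        entry_hash = _entry_hash(prev, record)
        self.entries.append((record, entry_hash))
        return entry_hash

    def is_consistent(self) -> bool:
        """Recompute the chain and report whether any entry was altered."""
        prev = GENESIS
        for record, entry_hash in self.entries:
            if _entry_hash(prev, record) != entry_hash:
                return False
            prev = entry_hash
        return True


if __name__ == "__main__":
    log = VerificationLog()
    log.append({"claim": "Paris is the capital of France.", "verdict": True})
    log.append({"claim": "The Seine flows through Berlin.", "verdict": False})
    print(log.is_consistent())
    log.entries[0][0]["verdict"] = False  # tamper with history
    print(log.is_consistent())
```

In a real decentralized ledger the chain is replicated across many nodes, which is what makes rewriting it impractical. A local log like this one only makes tampering detectable.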

The use of economic staking is equally critical. In decentralized networks, incentives drive behavior. If validators have something to lose, they behave responsibly. They’re not just running models for fun. They’re participating in a system where accuracy has measurable value.

The modular design of Mira also matters. The verification layer is separate from the AI generation layer. This means companies can plug their existing AI systems into Mira without rebuilding everything from scratch. Interoperability increases adoption.
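One way to picture that separation is a wrapper that takes any text generator and any verifier as plain functions. This is a sketch of the design idea only. The `with_verification` helper and the two stub functions are invented for illustration and do not reflect a real Mira SDK.

```python
from typing import Callable

Generator = Callable[[str], str]   # prompt -> answer
Verifier = Callable[[str], bool]   # answer -> passed verification?


def with_verification(generate: Generator, verify: Verifier) -> Callable[[str], dict]:
    """Wrap an existing generator so every answer carries a verification flag."""

    def wrapped(prompt: str) -> dict:
        answer = generate(prompt)
        return {"answer": answer, "verified": verify(answer)}

    return wrapped


if __name__ == "__main__":
    # Stubs standing in for a real model and a real verification network.
    def stub_model(prompt: str) -> str:
        return "Paris is the capital of France."

    def stub_verifier(answer: str) -> bool:
        return "Paris" in answer

    ask = with_verification(stub_model, stub_verifier)
    print(ask("What is the capital of France?"))
```

Because the generator is passed in rather than built in, swapping one model for another does not touch the verification side at all.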

The team understood something simple but powerful. Trust scales when it is programmable.

Metrics That Show Success

Success for Mira is not measured by flashy user interfaces or token price alone. It’s measured in reliability.

One metric is verification accuracy rate. How often does consensus correctly identify flawed or hallucinated content? In principle, error detection should improve over time as validator diversity increases.
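Measuring this requires a labeled set of claims where the right answer is already known. A minimal sketch, assuming such labels exist: precision asks how many flagged claims were really flawed, and recall asks how many flawed claims were caught. The function name and input format are hypothetical.

```python
def detection_metrics(flagged: list[bool], actually_flawed: list[bool]) -> dict:
    """Compare consensus flags against ground-truth labels.

    flagged[i] is True when the network rejected claim i;
    actually_flawed[i] is True when claim i really was wrong.
    A metric is None when its denominator is zero.
    """
    if len(flagged) != len(actually_flawed):
        raise ValueError("inputs must have the same length")

    pairs = list(zip(flagged, actually_flawed))
    true_pos = sum(1 for f, a in pairs if f and a)
    false_pos = sum(1 for f, a in pairs if f and not a)
    false_neg = sum(1 for f, a in pairs if not f and a)

    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else None
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else None
    return {"precision": precision, "recall": recall}


if __name__ == "__main__":
    print(detection_metrics(
        flagged=[True, True, False, False],
        actually_flawed=[True, False, True, False],
    ))
```

Tracking both numbers matters because a network that flags everything has perfect recall and poor precision, while one that flags nothing has the opposite problem.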

Another metric is validator participation. The more independent models contributing to consensus, the stronger the network becomes. Decentralization depth is measurable.

Enterprise adoption is another signal. If financial institutions, healthcare providers, or AI startups integrate Mira’s verification layer, that indicates real-world demand.

Transaction throughput and latency also matter. Verification must be fast enough for real-time applications. If it becomes too slow, it limits usability.

Finally, economic sustainability is key. Token incentives must balance validator rewards with network growth. A healthy token economy supports long-term participation.

Risks and Challenges

No system is perfect.

One risk is validator collusion. If a group of validators coordinate dishonestly, they could manipulate consensus. Mira addresses this through economic penalties and diversity requirements, but risk remains.
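The size of the collusion problem depends on the consensus rule. As a worked example, assume a stake-weighted rule where a verdict needs at least two thirds of total stake. That rule is an assumption for illustration, not a documented Mira parameter. Under it, a colluding group's power follows directly from its share of stake.

```python
from fractions import Fraction


def collusion_power(colluding_stake: int, total_stake: int,
                    threshold: Fraction = Fraction(2, 3)) -> str:
    """Classify what a colluding group can do under a supermajority rule.

    Assumes a verdict passes when at least `threshold` of total stake
    agrees, and that all honest validators vote the same way.
    """
    if total_stake <= 0 or not 0 <= colluding_stake <= total_stake:
        raise ValueError("stakes must satisfy 0 <= colluding <= total, total > 0")

    share = Fraction(colluding_stake, total_stake)
    if share >= threshold:
        return "can force any verdict"
    if share > 1 - threshold:
        # Honest stake alone falls short of the threshold.
        return "can block consensus"
    return "cannot affect the outcome alone"


if __name__ == "__main__":
    for stake in (30, 40, 70):
        print(stake, collusion_power(stake, 100))
```

Under these assumptions, a group holding just over one third of the stake can stall verification, and one holding two thirds can dictate results. That is why stake distribution and validator diversity matter as much as the penalties themselves.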

Another challenge is computational cost. Running multiple AI validators for every output requires resources. Efficiency improvements are essential for scalability.

Regulatory uncertainty is also a factor. As governments define AI governance frameworks, decentralized verification systems may face compliance questions.

There is also the philosophical risk. Verification does not guarantee absolute truth. It improves reliability. It reduces probability of error. But truth in complex domains can be subjective.

The creators are aware of this. They’re not promising perfection. They’re promising measurable improvement.

The Vision for the Future

Mira Network’s long-term vision is ambitious.

They imagine a world where autonomous AI agents can transact, negotiate, and make decisions independently, but only after their outputs are verified through decentralized consensus. We’re seeing early signs of agent-based economies forming. If those agents operate without trust infrastructure, systemic risk increases.

Mira wants to become the verification backbone for that future.

In healthcare, AI diagnostics could include cryptographic proof of validation before reaching doctors. In finance, algorithmic trading decisions could carry verification stamps. In governance, policy simulations generated by AI could be publicly auditable.

If it becomes standard practice that AI outputs must pass decentralized verification before deployment, Mira’s architecture could become foundational infrastructure.

The team also envisions expanding beyond text verification into multimodal systems. Images, video, and sensor data could be validated through similar consensus frameworks.

It’s not just about fixing hallucinations. It’s about redefining accountability in machine intelligence.

A Human Reflection

When I think about Mira Network, I don’t just see code and cryptography. I see a response to a quiet fear many of us share. AI is powerful. Sometimes too powerful. These systems are evolving faster than our systems of trust.

Mira is an attempt to slow down and build carefully.

Trust cannot be forced. It must be earned. And in decentralized systems, it must be engineered.

If the future belongs to intelligent machines, then verification must evolve alongside them. Mira Network is betting that consensus, incentives, and transparency can create a safer path forward.

Maybe that’s what progress really looks like. Not louder models. Not bigger hype. But stronger foundations.

And if we build those foundations correctly, we may see a world where AI doesn’t just sound smart.

It becomes reliable.

That changes everything.