I started looking into Mira because I’ve lost the habit of trusting AI outputs by default.

Not in the abstract “AI is dangerous” way. In the operational sense. I’ve watched models hallucinate figures that look plausible, fabricate citations that pass casual review, and answer with confidence where uncertainty should exist. As systems become more autonomous, those failures stop being cosmetic. They become systemic risk.

That’s where Mira reframes the problem.

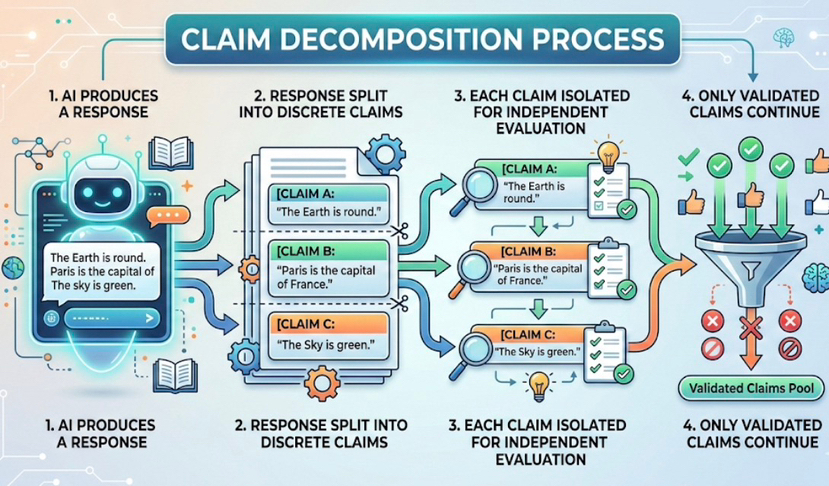

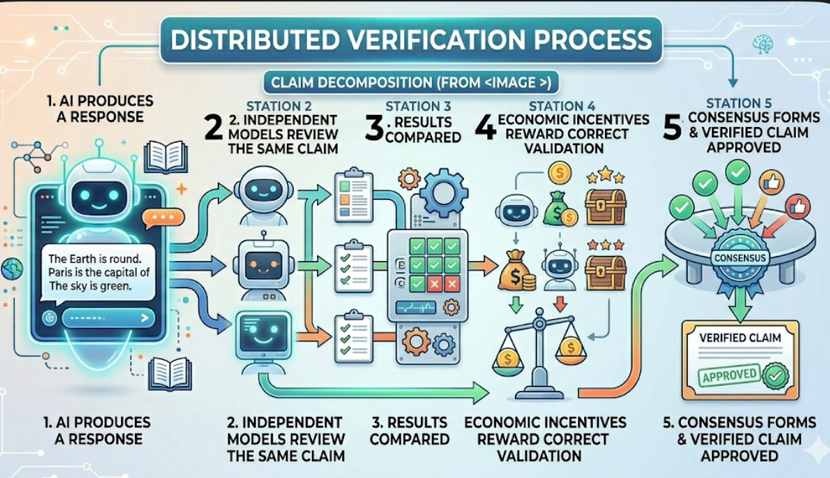

Instead of treating an AI response as a single object, Mira decomposes it into smaller claims. Each claim is evaluated independently across a network of models. Which claims survive is decided not by authority or scale, but by convergence among those models, driven by economic incentives.
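To make the idea concrete for myself, here is a minimal sketch of claim-level checking. It is not Mira's actual code or API: `split_into_claims`, `verify_claims`, the sentence-based splitting, and the two-thirds threshold are all my own assumptions, and the verifiers are plain functions standing in for independent models.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical verifier: takes one claim and returns True if it accepts it.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive stand-in for claim decomposition: one claim per sentence."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claims(
    response: str,
    verifiers: Sequence[Verifier],
    threshold: float = 2 / 3,  # assumed agreement threshold, not a Mira parameter
) -> Dict[str, bool]:
    """Accept each claim only if enough verifiers independently agree on it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        votes = [verifier(claim) for verifier in verifiers]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


# Toy verifiers: two reject anything mentioning Germany, one accepts everything.
toy_verifiers = [
    lambda claim: "Germany" not in claim,
    lambda claim: "Germany" not in claim,
    lambda claim: True,
]
checked = verify_claims("Revenue grew 12%. Paris is in Germany.", toy_verifiers)
```

The point of the sketch is the shape of the result: the response is never accepted or rejected as a whole, only claim by claim.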

This feels less like generation and more like audit.

We’ve normalized AI as a black box. It outputs text, we decide whether to believe it. Mira shifts that posture. Outputs are treated as assertions that must earn legitimacy. The unit of trust isn’t the model. It’s the claim.

I ran a mental stress test.

Imagine an AI summarizing market data or regulatory language. Normally, a single hallucinated number can distort the entire conclusion. Under Mira’s model, numerical claims are cross-checked by independent agents. Agreement isn’t social. It’s incentivized. Nodes are rewarded for accuracy, not confidence.
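Here is how I picture the incentive side, as a rough sketch rather than Mira's real reward rules. Everything in it is an assumption of mine: the `settle_round` name, the fixed reward, the 10% penalty, and the use of a strict majority as a proxy for accuracy.

```python
from typing import Dict, Tuple


def settle_round(
    votes: Dict[str, bool],
    stakes: Dict[str, float],
    reward: float = 1.0,        # assumed flat reward for matching consensus
    penalty_rate: float = 0.1,  # assumed fraction of stake lost otherwise
) -> Tuple[bool, Dict[str, float]]:
    """Settle one claim: nodes that match the majority verdict gain, others lose stake."""
    yes_votes = sum(1 for vote in votes.values() if vote)
    # Strict majority; a tie resolves to False (claim not accepted).
    consensus = yes_votes * 2 > len(votes)
    new_stakes: Dict[str, float] = {}
    for node, vote in votes.items():
        if vote == consensus:
            new_stakes[node] = stakes[node] + reward
        else:
            new_stakes[node] = stakes[node] * (1 - penalty_rate)
    return consensus, new_stakes


verdict, balances = settle_round(
    votes={"node_a": True, "node_b": True, "node_c": False},
    stakes={"node_a": 10.0, "node_b": 10.0, "node_c": 10.0},
)
```

Note what is absent: nothing in the payout depends on how confidently a node answered, only on whether its verdict held up.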

What stood out to me is that Mira doesn’t promise smarter AI.

It promises accountable AI. Larger models still hallucinate. Better training still misfires. Verification adds a discipline that intelligence alone doesn’t provide.

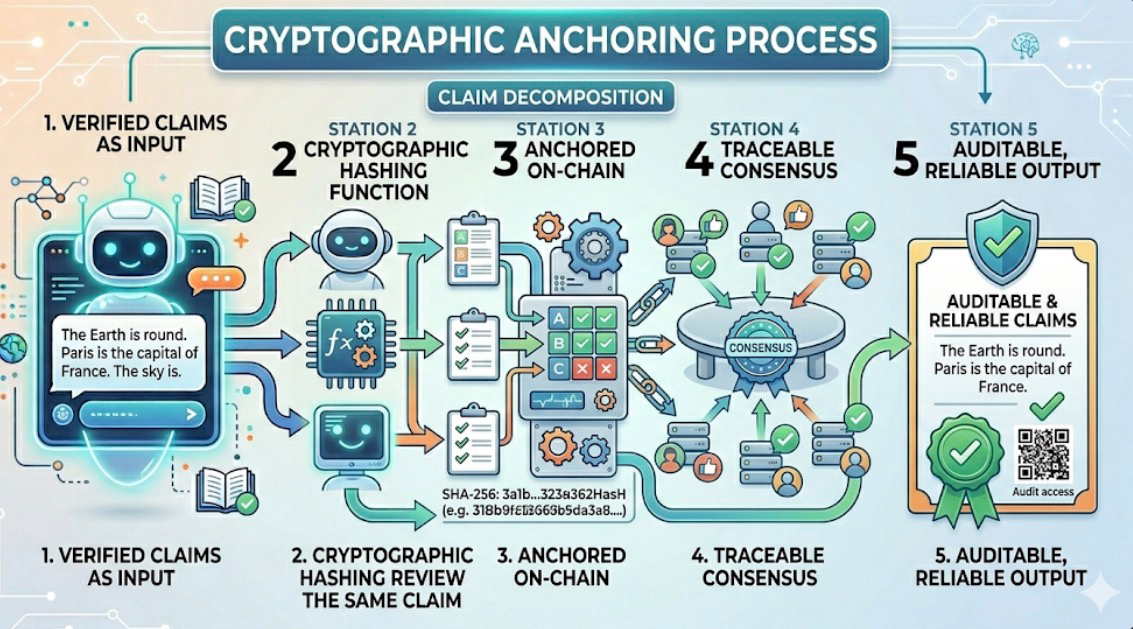

And the blockchain layer isn’t cosmetic.

Validated claims are cryptographically anchored, leaving a visible trail of consensus. You’re not asked to trust that something was checked. You can verify that it was.
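The "verify that it was checked" part can be illustrated with a plain hash commitment. This is a generic sketch, not Mira's on-chain format: the record layout and the function names `record_digest` and `matches_anchor` are hypothetical, and I am assuming the anchored value is a SHA-256 digest.

```python
import hashlib
import json
from typing import Dict


def record_digest(claim: str, verdicts: Dict[str, bool]) -> str:
    """Hash a claim and its verdicts in a canonical form (sorted keys, fixed separators)."""
    payload = json.dumps(
        {"claim": claim, "verdicts": verdicts},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def matches_anchor(claim: str, verdicts: Dict[str, bool], anchored_digest: str) -> bool:
    """Recompute the digest locally and compare it with the published one."""
    return record_digest(claim, verdicts) == anchored_digest


# The digest is what would be published; anyone holding the record can re-check it.
anchored = record_digest("Revenue grew 12%", {"node_a": True, "node_b": True})
untouched = matches_anchor("Revenue grew 12%", {"node_a": True, "node_b": True}, anchored)
tampered = matches_anchor("Revenue grew 21%", {"node_a": True, "node_b": True}, anchored)
```

Changing a single character in the claim or flipping one verdict produces a different digest, which is what makes the trail checkable rather than merely asserted.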

There are trade-offs. Verification introduces latency. Costs exist. Speed competes with certainty. But in high-stakes environments, that friction may be the feature, not the flaw.

Trust isn’t assumed here.

It’s constructed.

@Mira - Trust Layer of AI | $MIRA | #Mira