We Built Courts to Verify Humans. Nobody Built That for AI.

A deep dive into Mira Network and why the problem it's solving is older than crypto

@Mira - Trust Layer of AI isn't trying to make AI smarter. It's building the accountability layer that civilization forgot to install before it handed AI the keys to healthcare, finance, and legal decisions.

Here's something nobody in the #Mira threads is saying out loud: the problem Mira is solving is as old as human civilization. We just never had to solve it at this speed before.

Think about every institution humans ever built to handle trust. Courts. Notary offices. Medical licensing boards. Journalism fact-checkers. Financial auditors. The entire SEC. Every single one of them exists for one reason — because when someone makes a claim that affects your life, you need a system, not a person, that can verify it.

Now fast-forward to today. AI is writing legal contracts, diagnosing symptoms, generating financial summaries, drafting code that runs real infrastructure. It's making claims. Billions of them, every day. And the verification layer? It doesn't exist. We just believe the output. Or hope it's right.

That's the gap Mira is building into.

The Hallucination Economy Is Already Here

Most writers will tell you Mira solves "AI hallucinations." That's accurate but it undersells the actual stakes.

A hallucination in casual AI usage means a chatbot tells you the wrong year a movie came out. Annoying. Whatever.

But the same fundamental unreliability is now inside applications where being wrong doesn't mean mild embarrassment. It means a denied insurance claim based on a faulty medical summary. A legal brief with fabricated case citations. A trading algorithm acting on a hallucinated earnings report. These aren't hypotheticals. They've happened. They are continuing to happen.

The problem was never that AI was wrong. The problem is that nothing in the current stack tells you when it's wrong — until after the damage is done.

Every current solution is human-dependent. You hire a human to review AI outputs. You build review pipelines. You add disclaimers. But human review doesn't scale. AI output does. You cannot hire your way out of this problem. That's the structural crack in the foundation.

What Mira Actually Does (In Plain Language)

The official description is "consensus-based verification." Here's what that actually means.

When an AI system makes factual claims, Mira routes them to multiple independent AI models simultaneously. These models have no communication with each other. They each evaluate separately. Then the network looks for agreement.

If multiple independent models agree: the claim passes, and a cryptographic certificate is issued. On-chain. Auditable. Permanent. Proof that this output was verified — checkable tomorrow, next year, in a legal proceeding.

If the models disagree: the claim is flagged. No certificate. The downstream system knows this output is unreliable and can respond accordingly, whether that means escalating to human review or rejecting the output entirely.
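Here's a minimal sketch of that flow in Python, so the moving parts are concrete. To be clear, this is not Mira's actual code or API. The names `verify_claim`, `route`, and `min_agree` are mine, the "verifiers" are toy functions instead of real AI models, and a SHA-256 digest stands in for the on-chain certificate. I'm also assuming that "agreement" means enough verifiers independently support the claim.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical: a verifier is any function that judges a claim True or False.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    votes: List[bool]
    verified: bool
    certificate: Optional[str]  # SHA-256 digest, a stand-in for an on-chain certificate


def verify_claim(claim: str, verifiers: List[Verifier], min_agree: int) -> Verdict:
    """Poll every verifier separately, then certify only if enough of them support the claim."""
    votes = [check(claim) for check in verifiers]  # each verifier sees only the claim
    verified = sum(votes) >= min_agree
    certificate = None
    if verified:
        record = json.dumps({"claim": claim, "votes": votes}, sort_keys=True)
        certificate = hashlib.sha256(record.encode("utf-8")).hexdigest()
    return Verdict(claim, votes, verified, certificate)


def route(verdict: Verdict) -> str:
    """Downstream policy: accept certified claims, send everything else to a human."""
    return "accept" if verdict.verified else "escalate_to_human"


# Toy verifiers with hard-coded knowledge, for illustration only.
KNOWN_FACTS = {"Water boils at 100 C at sea level"}
careful_a = lambda claim: claim in KNOWN_FACTS
careful_b = lambda claim: claim in KNOWN_FACTS
credulous = lambda claim: True  # agrees with anything

panel = [careful_a, careful_b, credulous]
good = verify_claim("Water boils at 100 C at sea level", panel, min_agree=2)
bad = verify_claim("The moon is made of cheese", panel, min_agree=2)
print(route(good), good.certificate)
print(route(bad), bad.certificate)
```

Notice that the credulous verifier alone can't push a false claim through. That is the whole point of requiring agreement across independent evaluators instead of trusting one.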

Node operators running these verification models are economically incentivized to be honest. Stake MIRA to participate. Be wrong consistently and your stake gets slashed. Truth-telling is the profitable strategy. That's not just a technical architecture — it's an economic one.
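To see why honesty can be the profitable strategy, here's a back-of-the-envelope model. Every number in it is hypothetical: I'm assuming a fixed reward per correct verification and a fixed slice of stake slashed per wrong one, which is a simplification of whatever the real slashing rules are.

```python
def expected_payoff(stake: float, reward: float, slash_fraction: float,
                    accuracy: float, rounds: int) -> float:
    """Expected net payoff over `rounds` verifications.

    Simplifying assumptions (not Mira's published parameters):
    - a correct answer earns `reward`
    - a wrong answer loses `slash_fraction * stake`
    - the stake is treated as constant across rounds
    """
    per_round = accuracy * reward - (1 - accuracy) * slash_fraction * stake
    return rounds * per_round


# Hypothetical node economics: 1,000 tokens staked, 1 token per correct answer,
# 1% of stake slashed per wrong answer.
honest = expected_payoff(stake=1000, reward=1.0, slash_fraction=0.01,
                         accuracy=0.95, rounds=100)
guesser = expected_payoff(stake=1000, reward=1.0, slash_fraction=0.01,
                          accuracy=0.50, rounds=100)
print(f"honest node:   {honest:+.1f} tokens")
print(f"random guesser: {guesser:+.1f} tokens")
```

Under these made-up numbers, the node that actually does the work comes out ahead and the node that guesses bleeds stake. The real question for any network like this is whether the live parameters keep that gap wide enough.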

The Part Everyone Glosses Over: Who Actually Needs This

Most Mira content talks about "the AI space" like it's abstract. Let me be specific about who the real customer is — because it's not who you think.

It's the enterprise deploying AI.

Right now, any serious company integrating AI into a regulated workflow has a compliance problem. Their lawyers are asking: "If this AI output is wrong and causes harm, what's our liability?" The answer under current infrastructure is: "You're exposed." No audit trail. No verification certificate. No on-chain proof that your system did its due diligence.

Mira's Verify API is the answer to that legal exposure. It doesn't just make AI more accurate. It makes AI defensible in court. That's a different product category entirely, and one that CFOs and General Counsels grasp immediately, where a "96% accuracy" pitch never quite lands.

Mira isn't selling accuracy. It's selling accountability. Those are two completely different things, and only one of them gets budget approval in Q1.

The Uncomfortable Truth About the Token

I'm going to say something most contributors won't: the quality of the underlying project and the short-term price of the token are separate conversations, and confusing them is how people get hurt.

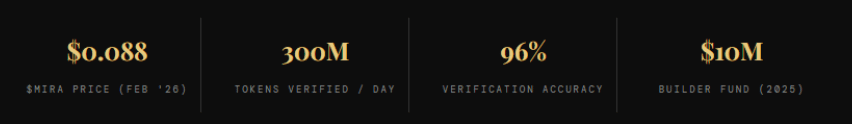

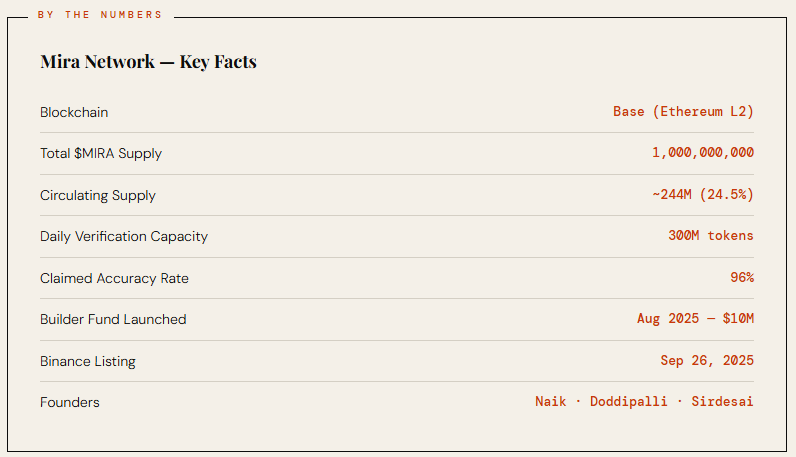

The facts: $MIRA listed on Binance on September 26, 2025, hit an all-time high of $2.35 on launch day, and has since settled around $0.088. That's roughly 96% off the peak. This is not unique to Mira; it's the story of nearly every 2025 token launch. Early participants, airdrop recipients, and ecosystem holders all held tokens, and most needed liquidity. What happened post-launch wasn't a referendum on the technology. It was a supply-demand event.
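If you want to check the math yourself, here it is. The two prices come from the figures above; the helper names are just mine.

```python
def drawdown_pct(peak: float, current: float) -> float:
    """Percentage decline from the peak price."""
    return (peak - current) / peak * 100


def recovery_multiple(peak: float, current: float) -> float:
    """How many times the current price must multiply to get back to the peak."""
    return peak / current


ATH = 2.35     # launch-day all-time high, USD
NOW = 0.088    # approximate current price, USD

print(f"drawdown: {drawdown_pct(ATH, NOW):.1f}%")
print(f"needed to revisit ATH: {recovery_multiple(ATH, NOW):.1f}x")
```

The second number is the one people forget. A drop of about 96% means the price has to multiply roughly 27 times over, not rise 96%, to get back to where it started.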

The tokenomics matter going forward. Early investors and contributors have tokens that haven't fully hit the market yet. Unlock events in year two and beyond are the real variable to watch — not what happened in the first 90 days.

The MIRA token has genuine utility. Validators stake it. Developers pay with it to access the Verify API. It's used for governance. It's the economic backbone of the network. Whether those real use cases translate to the price you want at the time you want it is a question nobody should be answering for you.

What to Actually Watch

Forget the daily candle. Here's what actually signals whether this project is working:

Developer adoption. The mainnet and SDK launched September 2025. Are developers actually building on it? Look for live applications integrating Mira Verify — not just partnership announcements.

Partnership depth. Mira has relationships with Kaito and Irys. Watch whether these become deep technical integrations or stay cosmetic. The combination of Kaito's data layer and Mira's verification is genuinely interesting — but only if builders are using both.

Validator growth. The network's reliability scales with how many independent validators are staking and verifying honestly. This data is on-chain. You can check it yourself.

The Civilization Argument, Revisited

Every verification institution humans ever built took decades to become normalized infrastructure. Nobody thinks twice about courts and auditing firms now. They're just how things work.

We are at the very beginning of what the AI verification layer looks like. The tooling is nascent. The business model is still being shaped. The regulatory picture is unpredictable. Mira is one serious attempt at a real, structural problem — with live mainnet, real developer tooling, and a cryptoeconomic model that aligns incentives correctly, at least in theory.

Whether it becomes the standard, one of several competing standards, or gets outpaced by something better: genuinely unknown. Hold that uncertainty honestly alongside whatever conviction you have.

What isn't uncertain — the problem Mira is solving is real, it's getting bigger every quarter, and right now there is almost nothing else in production trying to solve it this way.

That's worth paying attention to. What you do with that information is yours to figure out.