When a new network launches in the crypto or AI market, the alarms tend to go off. There are countdowns, livestreams, and a flood of announcements that the revolution is coming. The Mira mainnet release on September 26, 2025 was different. The announcement was remarkably low-key. There was no dramatic fanfare, just a simple piece of information: the trust layer for AI was open.

The numbers were already strong earlier in the year. More than 7 million queries had been processed on the network during the testnet phase. Applications built in the ecosystem had already reached roughly 4.5 million users. On top of that, the infrastructure was processing more than 3 billion tokens of AI-generated content every day. It was no longer an experiment of any kind. The impression was of a system that had been well prepared for real use.

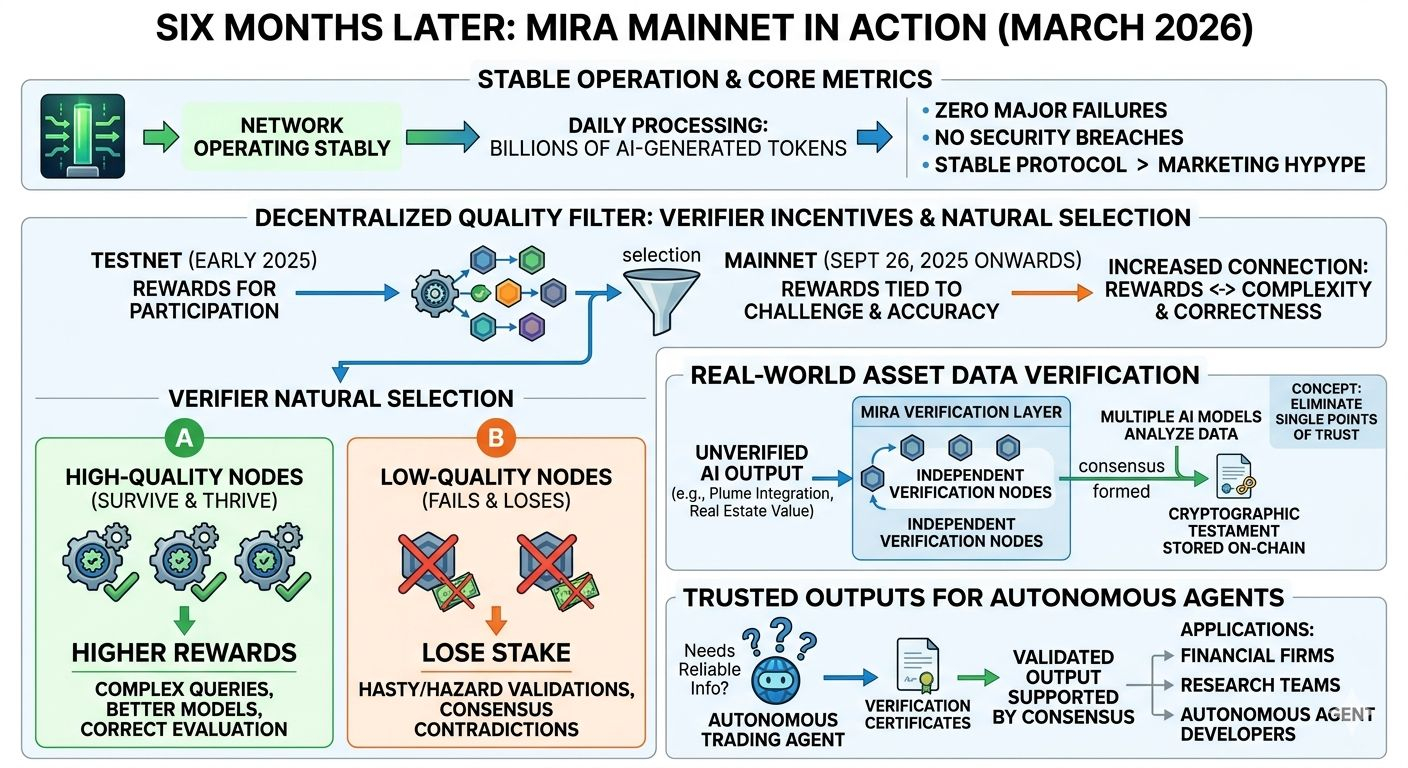

Six months later, in March 2026, the launch itself is not the interesting part. What happened after the launch is.

The mainnet has run without serious outages or security breaches. In an environment where new protocols often struggle with bugs or exploits in their early stages, stability matters more than marketing. The network still processes billions of tokens every day, and verification nodes continue to run their checks on AI outputs.

Verifying real-world asset data is one of the most effective applications. Through integrations such as Plume, tokenized assets, such as real estate valuations or credit metrics, can be checked by multiple AI models and verification nodes. Several independent systems analyze the data rather than relying on the output of a single model. Once enough of them agree, a cryptographic attestation of the result is stored on-chain.

The procedure may be technical, but the concept is not complicated: eliminate single points of trust.

Previously, when an AI system produced a valuation or a prediction, users had to take the model that produced it at its word. With a verification layer, the output looks much more like a peer-reviewed statement: several validators check the claims before the information is accepted.
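The multi-validator check can be illustrated with a small Python sketch. It is not Mira's actual protocol or API: the function name `verify_claim`, the two-thirds default threshold, and the SHA-256 digest standing in for the on-chain attestation are all assumptions made for illustration.

```python
import hashlib
import json
from collections import Counter


def verify_claim(claim, verdicts, threshold=2 / 3):
    """Return an attestation dict if enough validators agree, else None.

    `verdicts` maps a (hypothetical) validator name to its True/False
    judgment of the claim. The threshold is an assumed parameter.
    """
    if not verdicts:
        return None
    # Find the most common verdict and how many validators gave it.
    verdict, votes = Counter(verdicts.values()).most_common(1)[0]
    if votes / len(verdicts) < threshold:
        return None  # no sufficient agreement, nothing is attested
    payload = {
        "claim": claim,
        "verdict": verdict,
        "agreeing": sorted(n for n, v in verdicts.items() if v == verdict),
        "total": len(verdicts),
    }
    # A digest of the canonical payload stands in for the record that
    # would be stored on-chain.
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {**payload, "digest": hashlib.sha256(encoded).hexdigest()}


attestation = verify_claim(
    "property X is valued at 1.2M",
    {"node-a": True, "node-b": True, "node-c": True, "node-d": False},
)
```

With three of four validators agreeing, the call returns an attestation; an even split returns `None`, so no single model's answer is ever accepted on its own.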

The other noticeable change since the mainnet release is the incentives for verifiers. During the early testnet period, most participants were rewarded mainly for taking part in the network. That approach helped bootstrap the early ecosystem, but it was not a very strong quality filter.

With the mainnet, the economics changed. Rewards became more closely tied to the difficulty of the queries being checked and to the accuracy of the checks. Complex tasks that require deeper analysis now earn larger rewards. At the same time, incorrect validation can result in slashing penalties.

The result is a process of natural selection inside the network.

Nodes that simply perform hasty or sloppy validations do not last long. They can lose stake if their answers consistently contradict the network consensus. Meanwhile, nodes that invest time and use better models and more accurate evaluation methods earn higher rewards for handling difficult queries.
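A toy model makes the incentive concrete. The numbers and names here (`Node`, `settle`, a 5% slash rate, a reward that scales linearly with difficulty) are hypothetical and chosen only to show the shape of the mechanism, not Mira's real parameters.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float
    earned: float = 0.0


def settle(node, agreed_with_consensus, difficulty,
           base_reward=1.0, slash_rate=0.05):
    """Reward a node that matched consensus, slash one that did not.

    All rates are assumed values for illustration.
    """
    if agreed_with_consensus:
        # Harder queries pay proportionally more.
        node.earned += base_reward * difficulty
    else:
        # A wrong validation burns a fraction of the remaining stake.
        node.stake -= node.stake * slash_rate


careful = Node("careful", stake=100.0)
sloppy = Node("sloppy", stake=100.0)

settle(careful, agreed_with_consensus=True, difficulty=3.0)
for _ in range(3):
    settle(sloppy, agreed_with_consensus=False, difficulty=1.0)
```

After these calls the careful node has earned a reward on a difficult query while the sloppy node's stake has shrunk three times in a row, which is the selection pressure the text describes.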

In practice, this amounts to something interesting: a decentralized quality filter.

The more complex the query, the more rigorous the verification has to be. Checking a casual chat conversation, for example, is quite different from verifying financial information that will feed automated trading systems. Demand for reliable outputs rises quickly as AI agents enter financial markets and begin to operate actively.

Consider an auto-trading agent that manages real money. If the system is fed a wrong contract address or false information about a liquidity pool, the results could be disastrous. Verification layers mitigate that risk by ensuring that the information has passed through multiple validators before it is used.

This is where Mira's verification certificates start to matter. Once the network confirms a piece of AI-generated information, the result receives a record showing that multiple validators agreed on it. That evidence can then be used in applications that require greater reliability.
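From an application's point of view, a certificate can act as a gate in front of any risky action. The sketch below assumes a simplified `Certificate` record and hypothetical thresholds; the real certificate format is not described in this article, so every field and function name here is illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Certificate:
    """Assumed shape of a verification record, for illustration only."""
    claim: str
    agreeing_validators: int
    total_validators: int


def is_trusted(cert: Optional[Certificate], claim: str,
               min_validators: int = 3, min_agreement: float = 0.67) -> bool:
    """Accept a claim only if a matching, well-supported certificate exists."""
    if cert is None or cert.claim != claim:
        return False
    if cert.total_validators < min_validators:
        return False
    return cert.agreeing_validators / cert.total_validators >= min_agreement


def maybe_trade(contract_address: str, cert: Optional[Certificate]) -> str:
    """A trading agent refuses to act on input that lacks a valid certificate."""
    claim = f"contract_address={contract_address}"
    if not is_trusted(cert, claim):
        return "skipped: unverified input"
    return f"trade submitted to {contract_address}"


good = Certificate("contract_address=0xabc", agreeing_validators=4, total_validators=5)
print(maybe_trade("0xabc", good))   # certificate matches the claim
print(maybe_trade("0xdef", good))   # certificate is for a different address
print(maybe_trade("0xabc", None))   # no certificate at all
```

The design choice is that the agent fails closed: a missing certificate, a mismatched claim, or too few validators all lead to the same outcome, which is to do nothing.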

Financial firms, research teams, and developers of autonomous agents may not trust a single AI output. They can, however, trust a validated output that is backed by consensus and stored on-chain.

The other curious aspect of the ecosystem is how the token economy has developed since launch. The utility of the $MIRA token became clearer once the network was fully operational. Verification nodes use tokens to take part in the validation process. Applications pay for verified queries in the same token. And token holders will be able to take part in governance decisions as the network develops.

This design ties the health of the network to the value of correct verification. Demand for validation grows as more applications seek verified outputs, and the role of the staking system that backs it grows accordingly.

The team behind the technical infrastructure has experience in both AI and blockchain development. It includes leaders such as Ninad Naik, whose experience with large-scale AI systems helped shape the design of the network. The emphasis was on building a solid foundation before rushing toward accelerated growth.

That explains why the mainnet launch was undramatic. The network had been tested well before it went fully live. By the time of the public launch, much of the infrastructure had already been used in practice.

Six months on, though, the real story is not the announcement made at the end of September. It is the day-to-day activity that continues: billions of tokens processed, verification nodes running their checks, applications requesting verified results for their users.

Quiet progress may be the best signal of all.

Mira is not trying to replace AI models. It focuses on something more practical: validating the information those models generate. As AI is deployed further in finance, automation, and decision-making, that verification layer could prove as significant as the models themselves.

The mainnet launch was not the finish line. It was the moment the system began operating in the real world. And if it keeps this pace, the role of verification in AI infrastructure will likely become much more visible in the years ahead.
