Artificial intelligence is moving through a strange phase of progress. The capabilities of modern models have reached levels that would have seemed impossible just a few years ago. Systems can now write complex software, generate research summaries, analyze markets, and build strategies in seconds. Speed and efficiency are no longer the bottleneck.

Yet the deeper AI becomes integrated into real-world workflows, the more one issue continues to surface: reliability.

Many users have experienced the same situation. An AI produces a confident explanation, the reasoning looks structured, and the tone sounds authoritative. But when the information is checked carefully, some parts turn out to be inaccurate or completely fabricated. These hallucinations are not always obvious, and that is what makes them dangerous.

When AI is used for casual tasks, these errors are minor inconveniences. But when the same systems are used for financial decisions, research, development, or automation, a single mistake can propagate quickly through entire systems.

This is where the concept of verification begins to matter. Instead of simply asking machines to produce answers, the next stage of AI infrastructure is focused on proving that those answers are valid. One project exploring this direction is Mira Network, which introduces a verification framework designed to evaluate AI outputs before they are trusted.

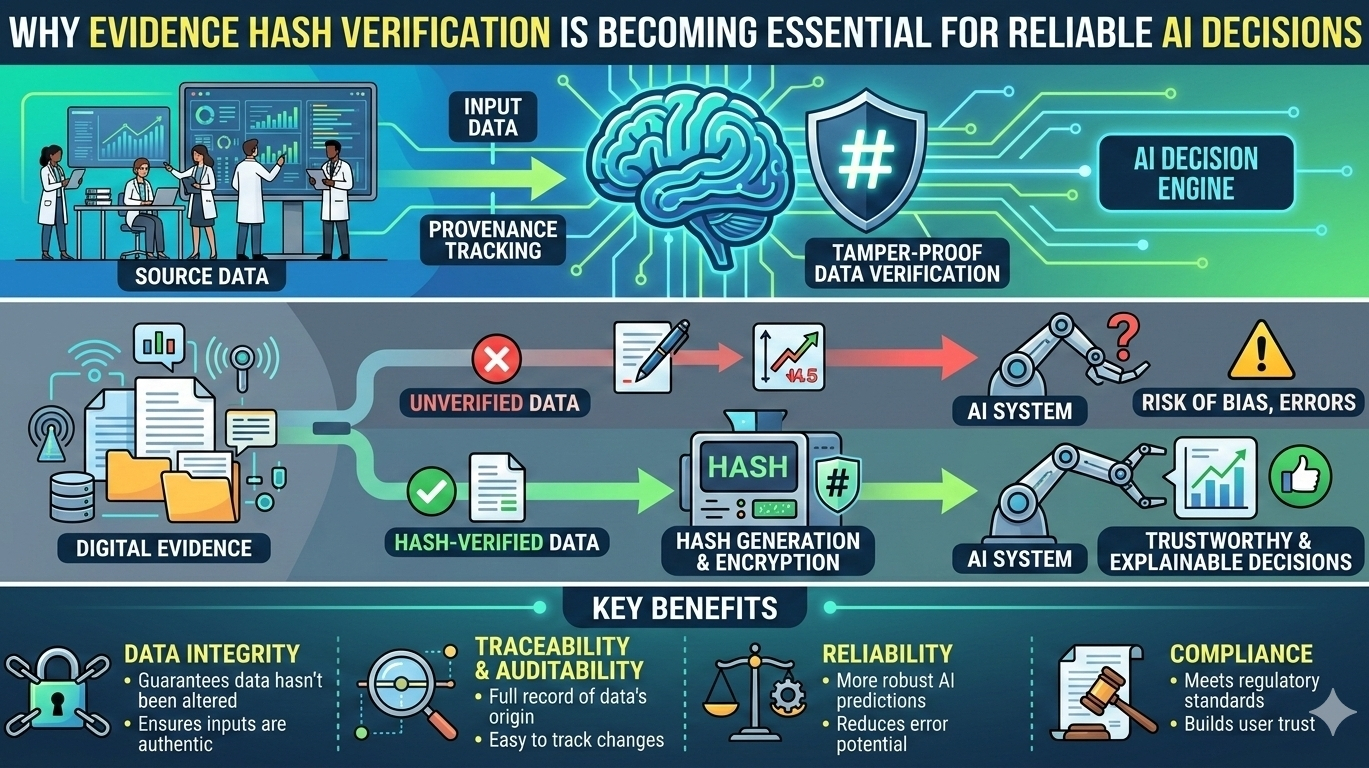

At the center of this system is something called an evidence hash, which acts as a digital receipt showing that a machine-generated decision has passed through an independent verification process.

The challenge of trusting machine intelligence

Modern AI systems are built on probability. They generate responses based on patterns learned from massive datasets rather than through strict logical reasoning. This allows them to be extremely flexible and creative, but it also introduces uncertainty.

Because of this design, models can sometimes produce information that sounds convincing even when it is incorrect. The result is a system that appears highly intelligent but still requires careful scrutiny from human users.

In the early days of AI, this was manageable because humans were always closely involved in reviewing the outputs. Today the situation is different. AI systems are increasingly being integrated into automated workflows, trading algorithms, research pipelines, and software development environments.

As these systems begin making decisions faster than humans can review them, the absence of verification becomes a serious risk.

Instead of simply trusting the machine, there needs to be a process that confirms whether the reasoning behind an output is sound. That is the problem Mira Network attempts to address.

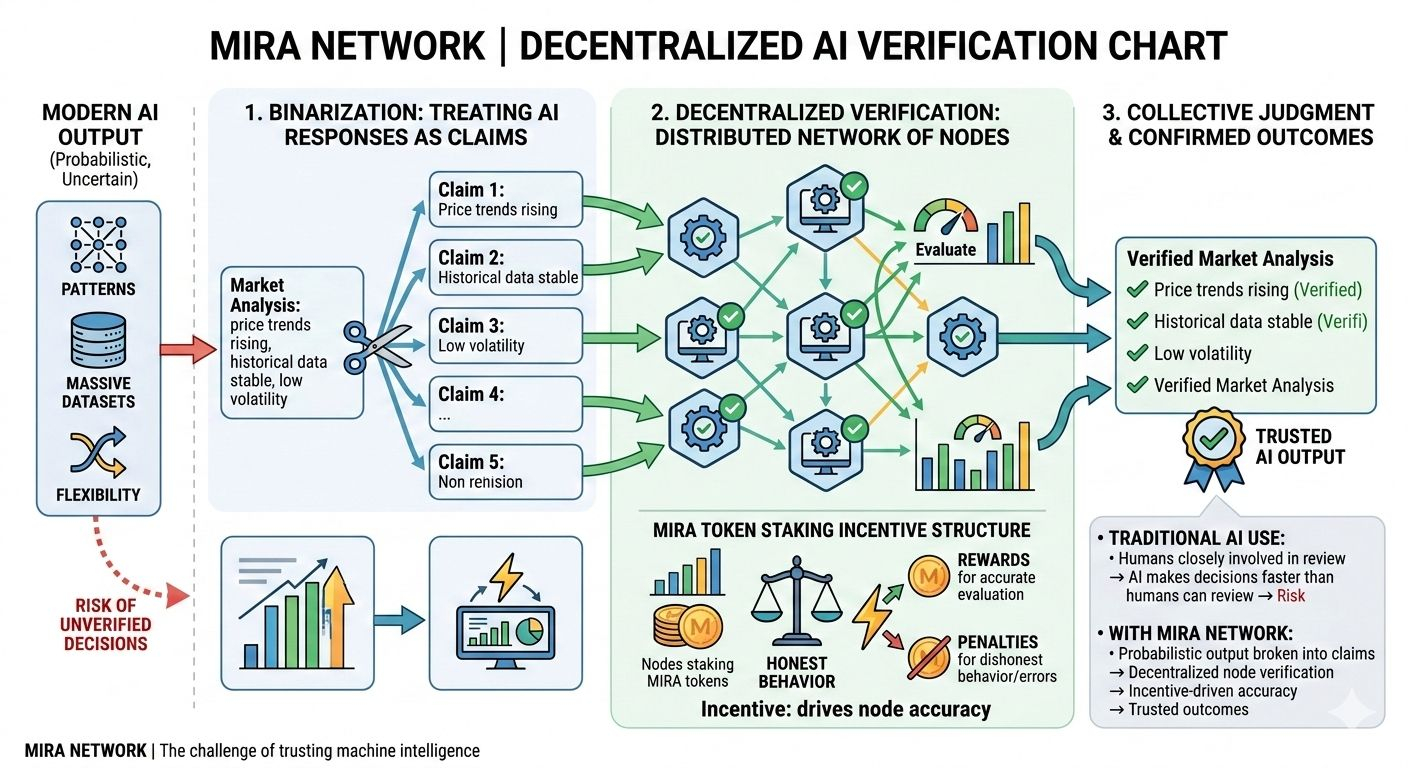

Treating AI responses as claims rather than answers

One of the core ideas behind Mira is that an AI output should not be treated as a final conclusion. Instead, it should be interpreted as a set of individual claims.

A single response from a model might contain multiple pieces of information, assumptions, or logical steps. Rather than accepting them as a single block of truth, Mira breaks them into smaller statements that can be evaluated independently.

This process is often described as binarization. A complex response is divided into smaller declarations that can be examined one by one.

For example, if an AI produces a market analysis, that explanation might include statements about price trends, historical data, volatility patterns, or risk calculations. Each of these statements becomes a claim that the system can verify.

Breaking the response into these smaller components allows the network to check each part individually instead of relying on the overall confidence of the model.
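The decomposition step can be illustrated with a minimal sketch. This is not Mira's actual method, which the article does not specify; it simply treats each sentence as one claim. The `Claim` type and the `split_into_claims` function are hypothetical names introduced here for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement (hypothetical type)."""
    index: int
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Naive stand-in for claim decomposition: one claim per sentence.

    Assumption: a real system would likely use a model to extract claims;
    sentence splitting is only the simplest possible approximation.
    """
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]
    return [Claim(i, s) for i, s in enumerate(sentences)]


response = (
    "The asset rose over the past month. "
    "Volatility was below its yearly average. "
    "The risk of a sharp drawdown is low."
)
claims = split_into_claims(response)
for claim in claims:
    print(claim.index, claim.text)
```

Each resulting `Claim` can then be sent for verification on its own, so one weak statement does not hide behind the confident tone of the rest of the response.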

The role of decentralized verification

Once the AI response has been divided into smaller claims, the verification process begins. This stage is handled by a distributed network of nodes that evaluate the accuracy of those claims.

Participants in this network stake the native token of the system, MIRA, which creates an incentive structure tied to honest behavior. By committing capital to the process, validators signal that they are willing to take responsibility for the correctness of their evaluations.

If validators behave dishonestly or fail to follow the protocol rules, their stake can be penalized. This economic structure encourages careful and accurate verification.
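The incentive idea can be shown with a toy model of staking and penalties. The article does not state how large a penalty is or how it is triggered, so the `Validator` type, the `slash` function, and the basis-point penalty below are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    """A staking participant (hypothetical type)."""
    name: str
    stake: int  # staked tokens, in whole hypothetical units


def slash(validator: Validator, penalty_bps: int) -> int:
    """Deduct a penalty from the stake and return the amount removed.

    penalty_bps is in basis points (1/100 of a percent); the rate used
    below is an assumed value, not one taken from the protocol.
    """
    if not 0 <= penalty_bps <= 10_000:
        raise ValueError("penalty_bps must be between 0 and 10000")
    penalty = validator.stake * penalty_bps // 10_000
    validator.stake -= penalty
    return penalty


node = Validator("node-a", stake=1000)
removed = slash(node, penalty_bps=1000)  # assumed 10 percent penalty
print(removed, node.stake)
```

Integer units and basis points keep the arithmetic exact, which is a common choice when balances are involved.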

The decentralized nature of the network also plays an important role. Instead of a single organization deciding whether an AI output is correct, multiple independent participants contribute to the evaluation.

This distribution reduces the risk of bias or manipulation and ensures that the verification process reflects collective judgment rather than centralized control.

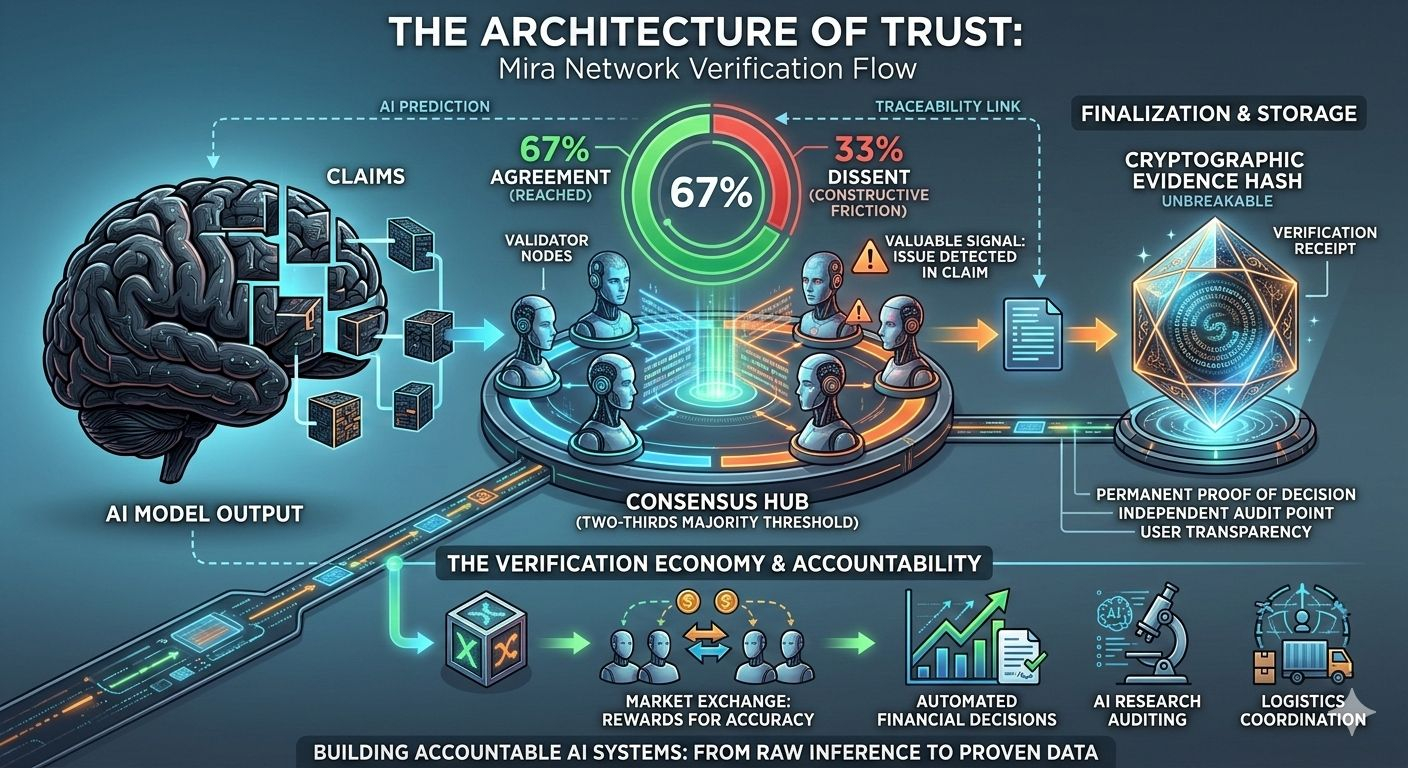

Consensus thresholds matter

A key element of the verification system is the requirement for a two-thirds majority agreement among validators before a claim is accepted as valid.

In practical terms, this means that at least two thirds of the participating nodes, roughly 67 percent, must reach the same conclusion before the verification step is finalized.

This threshold creates a balance between efficiency and reliability. If the requirement were too low, incorrect claims might pass through the system too easily. If it were too high, the process could become too slow to be practical.

By requiring a strong majority rather than a simple majority, the network ensures that claims are not accepted unless a clear consensus has formed.

Interestingly, situations where consensus fails to form can also provide valuable signals. Disagreement among validators may indicate that an issue exists within the claim itself. In those cases, the verification process slows down, allowing the network to examine the problem more carefully.

What might look like friction from the outside actually serves as a safeguard against incorrect conclusions.
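The threshold rule and the no-consensus case described in this section can be sketched in a few lines. The `tally` function is a hypothetical name, and treating exactly two thirds as sufficient is an assumption; the article only says a two-thirds majority is required.

```python
from collections import Counter
from typing import Optional


def tally(votes: list[bool]) -> Optional[bool]:
    """Return the verdict backed by at least two thirds of votes, else None.

    None models the case where consensus fails to form and the claim
    needs closer examination.
    """
    if not votes:
        return None
    verdict, count = Counter(votes).most_common(1)[0]
    # Integer comparison avoids rounding issues with 2/3 as a float.
    if 3 * count >= 2 * len(votes):
        return verdict
    return None


print(tally([True] * 7 + [False] * 3))  # 7 of 10 agree: threshold met
print(tally([True] * 6 + [False] * 4))  # 6 of 10 agree: no consensus
```

With ten validators, seven matching votes clear the bar while six do not, which shows how a split panel is held back rather than resolved by a bare majority.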

The function of the evidence hash

After the verification process is complete and the network reaches consensus, the system produces what is known as an evidence hash.

An evidence hash is a cryptographic record that proves the verification process occurred. It captures the outcome of the consensus and provides a permanent reference point showing that the AI output was examined and validated by the network.

This record acts like a receipt for the decision. If someone later questions the reliability of the AI output, the evidence hash provides proof that the response passed through an independent verification mechanism.
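To make the receipt idea concrete, here is a minimal sketch of how such a record could be hashed. The article does not describe the real record format or hash function, so the fields, the `evidence_hash` function name, and the use of SHA-256 over canonical JSON are all assumptions.

```python
import hashlib
import json


def evidence_hash(claim: str, verdict: bool, votes_for: int, votes_total: int) -> str:
    """Hash a verification record into a fixed-length hex digest.

    Assumed record layout: the claim text, the verdict, and the vote counts.
    """
    record = {
        "claim": claim,
        "verdict": verdict,
        "votes_for": votes_for,
        "votes_total": votes_total,
    }
    # Sorted keys and fixed separators give the same bytes for the same record.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


claim_text = "Volatility was below its yearly average."
h1 = evidence_hash(claim_text, True, 7, 10)
h2 = evidence_hash(claim_text, True, 7, 10)
h3 = evidence_hash(claim_text, True, 6, 10)
print(h1 == h2)  # same record, same hash
print(h1 == h3)  # any change to the record changes the hash
```

Because the digest changes whenever any field changes, anyone holding the original record can recompute the hash and check that it matches the stored one.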

In practical applications, this could allow developers, organizations, and users to trace the origin of a machine decision and confirm that it was reviewed before being used.

Such transparency could become increasingly important as AI systems begin operating with greater autonomy.

The emergence of a verification economy

Beyond the technical mechanisms, the architecture of Mira also introduces an interesting economic dynamic.

In most digital systems, the accuracy of information is assumed rather than proven. Platforms rely on reputation, brand authority, or social trust to convince users that their systems are reliable.

Mira introduces a different model in which verification itself becomes an economic activity.

Validators contribute computational effort and analytical work to evaluate claims, and they are rewarded for participating honestly in the process. This creates a marketplace where accuracy becomes financially valuable.

Over time, such a system could evolve into a broader verification economy, where independent participants contribute to the validation of machine-generated information across many different sectors.

This model could eventually support applications ranging from AI research validation to financial analysis verification and automated decision auditing.

Expanding applications in the coming years

As AI systems continue evolving, their role in digital infrastructure will likely expand. Autonomous agents may manage financial portfolios, coordinate logistics networks, or execute complex smart contract operations.

When machines are responsible for decisions that affect assets, businesses, or infrastructure, the importance of verification increases dramatically.

The development roadmap of Mira Network suggests a growing ecosystem where verification tools are integrated into real-world applications. Integrations such as the Klok application aim to bring verified AI outputs directly into user-facing platforms.

Instead of simply receiving an answer from an AI assistant, users may eventually receive a response accompanied by proof that the output passed through decentralized verification.

This shift could fundamentally change how people interact with machine intelligence.

A step toward accountable AI systems

Artificial intelligence has reached a level of influence where its outputs can shape financial markets, software systems, and decision-making processes across industries. With that influence comes the need for stronger accountability.

Systems like Mira Network attempt to address this challenge by introducing verification as a foundational layer rather than an afterthought.

By breaking AI responses into claims, evaluating them through decentralized consensus, and recording the results as evidence hashes, the network provides a framework where machine decisions can be independently confirmed.

As AI continues expanding into critical areas of technology and finance, mechanisms that provide transparent proof of correctness may become essential infrastructure.

In a digital environment where information moves faster than ever, having a reliable method to verify machine-generated conclusions could prove just as important as the intelligence that produces them.