I still remember the first time I ran a local model on my laptop.

The fan started screaming. My CPU usage hit 100 percent. And for a few seconds, I felt like I was holding a tiny piece of the future in my hands. Not using someone else’s API. Not sending prompts to a black box in the cloud. Just me, some open weights, and raw compute.

It wasn’t smooth. It wasn’t efficient. But it felt different.

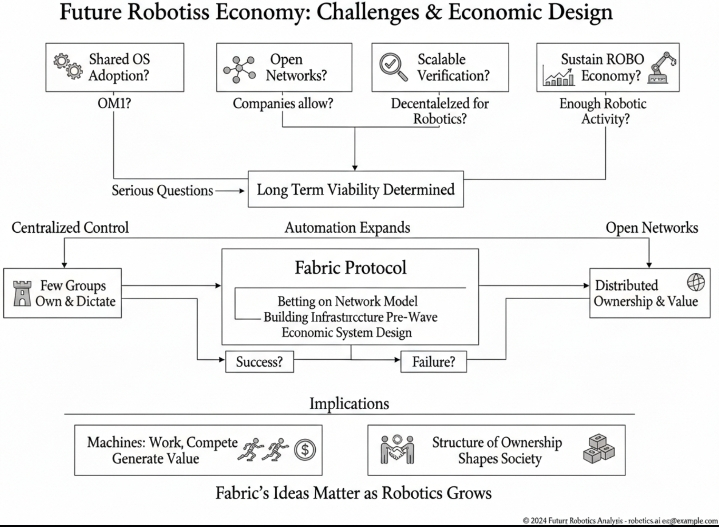

Lately I’ve been thinking about that feeling while watching projects like Fabric Protocol emerge. Because beneath the token charts and roadmap threads, there’s a much bigger tension building in crypto right now. Who actually controls AI production? Not the models themselves. The infrastructure. The data flows. The compute layers. The economic rails.

And whether that control ends up looking anything like crypto promised it would.

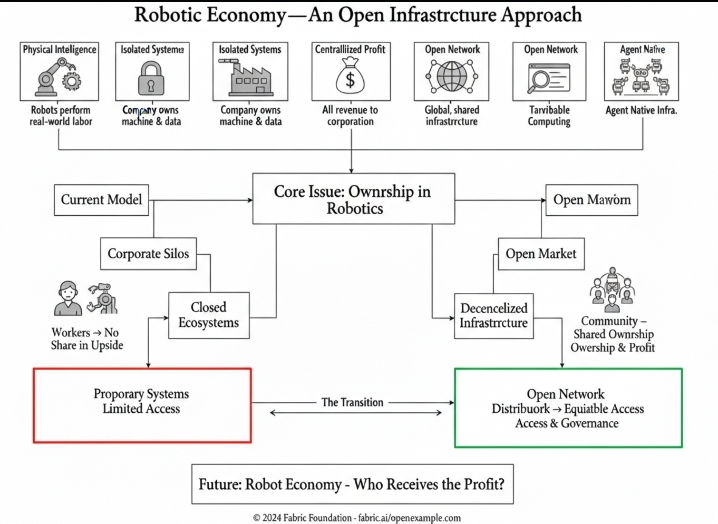

AI today is mostly industrial. Massive data centers. Proprietary datasets. Closed training pipelines. It’s impressive, sure. But it’s also very centralized. A handful of companies decide what gets trained, how it’s deployed, and who can afford access. Even when the weights are “open source,” the compute needed to train and run them usually isn’t open at all.

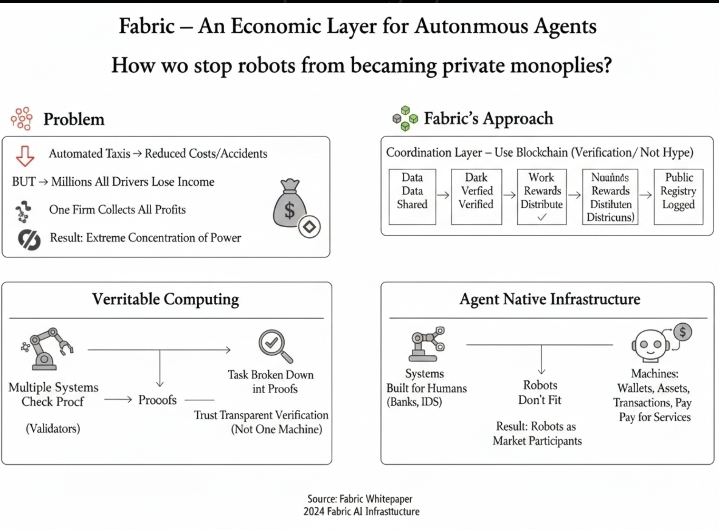

Fabric Protocol, at least as I understand it, is trying to approach AI production from a more distributed angle. Instead of assuming that training and inference must live inside giant corporate silos, it leans into decentralized compute coordination. Let machines and node operators contribute. Let incentives align around actual workload distribution. Let production scale horizontally instead of vertically.

That idea isn’t new in crypto. We’ve heard versions of it in storage networks, GPU marketplaces, and distributed rendering. But applying it directly to AI production hits differently. Because AI isn’t just another workload. It’s quickly becoming *the* workload.

I remember when DeFi was the big coordination experiment. Then NFTs. Now it feels like compute is the quiet battleground. Everyone wants AI exposure, but very few talk about who owns the pipes.

Fabric’s framing touches something deeper than token utility. It’s about whether AI becomes an extension of Web2 infrastructure or whether crypto can genuinely carve out a parallel production layer. And I’m not fully convinced either way yet.

On one hand, decentralized AI production sounds almost inevitable. Training costs are enormous. Inference demand is exploding. Distributing compute across global participants seems economically rational. Idle GPUs sitting in basements could theoretically contribute. Smaller teams could access resources without negotiating enterprise contracts.

On the other hand, AI training at scale is brutally complex. Latency matters. Bandwidth matters. Coordination overhead is real. Centralized systems exist for a reason. Sometimes efficiency wins over ideology. I’m not sure we talk about that enough in crypto.
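To put rough numbers on the bandwidth point, here is a back-of-envelope sketch in Python. Everything in it is an assumption picked for illustration: the model size, the gradient precision, and both link speeds. It also assumes the most naive setup possible, where a node ships one full copy of the gradients per sync, and it ignores compression, sharding, and every other trick real systems use.

```python
# Back-of-envelope: time to ship one full set of gradients over a single link.
# Every number below is an illustrative assumption, not a measurement.

def sync_seconds(num_params: float, bytes_per_param: int, link_bits_per_sec: float) -> float:
    """Seconds to transfer one full gradient copy over one link."""
    payload_bits = num_params * bytes_per_param * 8
    return payload_bits / link_bits_per_sec

PARAMS = 7e9             # assumed model size: 7 billion parameters
BYTES_PER_PARAM = 2      # assumed 16-bit gradients

HOME_UPLINK = 100e6      # assumed 100 Mbit/s home uplink
DATACENTER_LINK = 100e9  # assumed 100 Gbit/s datacenter link

home = sync_seconds(PARAMS, BYTES_PER_PARAM, HOME_UPLINK)
dc = sync_seconds(PARAMS, BYTES_PER_PARAM, DATACENTER_LINK)

print(f"home uplink:     {home / 60:.1f} minutes per sync")
print(f"datacenter link: {dc:.2f} seconds per sync")
print(f"ratio:           {home / dc:.0f}x")
```

Under those assumptions, a single naive sync takes about a second inside a data center and roughly nineteen minutes from a basement. Real systems are smarter than this, but the gap is the reason coordination overhead isn’t a footnote.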

Fabric Protocol seems to be navigating that tension. It doesn’t just shout “decentralized AI” and call it a day. It’s trying to create structured incentives for reliable compute contributions. That’s harder than it sounds. Anyone who’s watched early decentralized networks struggle with uptime and quality knows the pain.

What intrigues me most is the economic layer. If AI production becomes tokenized, what exactly are we pricing? Compute cycles? Model training sessions? Inference calls? Data contribution? All of the above? And who captures the upside if models trained on decentralized infrastructure become highly valuable?

Maybe I’m overthinking it, but this feels like a new kind of mining. Not hash power chasing block rewards. But compute power feeding intelligence systems. Instead of securing ledgers, you’re powering cognition. That shift is subtle, but it changes the narrative.

There’s also a governance angle that doesn’t get enough airtime. If AI production moves into decentralized networks, who decides what gets trained? What datasets are acceptable? What ethical constraints exist? Centralized AI has its own bias and control issues. But decentralized AI could fragment responsibility in ways we’re not ready for.

I felt something similar during early DAO experiments. We were excited about “community governance,” then quickly realized coordination at scale is messy. Fabric and projects like it may eventually face similar friction. Distributed compute is one layer. Distributed decision-making is another beast entirely.

At the same time, there’s something deeply crypto-native about this struggle for AI production control. Bitcoin challenged control over money issuance. Ethereum expanded that to programmable finance. Now the question is whether intelligence itself becomes infrastructure that a few entities gatekeep.

And that’s where it stops being just another altcoin narrative.

The market, of course, will reduce all of this to price action. It always does. Tokens tied to AI infrastructure will pump on headlines and retrace when sentiment cools. I’ve been around long enough to know that cycles distort long-term vision. But sometimes beneath the volatility, real structural shifts are happening quietly.

I can’t say for certain that Fabric Protocol will be the framework that meaningfully decentralizes AI production. It might struggle. It might pivot. It might get outcompeted by centralized providers that simply execute faster. That’s the uncomfortable truth.

Still, I find myself drawn to the attempt.

Because every major shift in crypto started as an awkward, imperfect prototype. Bitcoin nodes running on home computers. Early Ethereum clients constantly desyncing. DeFi contracts getting exploited while we learned in public. None of it was clean.

If AI is going to integrate into everything, from finance to content to governance, then the fight over its production layer matters. It determines whether access remains permissioned or becomes programmatic. Whether power concentrates further or diffuses, even slightly.

Sometimes I wonder if decentralizing AI production is less about winning against big tech and more about building optionality. Creating parallel rails so no single entity holds all the switches. Even if decentralized networks never fully replace centralized ones, existing as a credible alternative changes incentives.

And maybe that’s enough.

I don’t have a neat conclusion here. Honestly, I’m still trying to figure out how serious this shift is. Part of me thinks we’re early to a fundamental restructuring of digital infrastructure. Another part thinks crypto might be overestimating its leverage against hyperscale cloud giants.

But I keep coming back to that laptop moment. The noise. The heat. The feeling that something powerful didn’t have to live behind someone else’s API key.

If Fabric Protocol and others can capture even a fraction of that independence at scale, the conversation about AI control might look very different in a few years.

For now, I’m watching. Running small experiments. Reading whitepapers more slowly than I used to. And asking myself the same question that’s been following crypto since the beginning.

Who actually owns the systems we’re building?

I don’t think we’ve answered that yet.