Artificial intelligence is becoming deeply integrated into how we make decisions, analyze information, and automate systems.

From trading strategies to governance proposals and automated research, AI is increasingly asked to interpret complex data and deliver conclusions that humans and machines rely on.

Yet beneath this rapid adoption lies a quiet problem that few people talk about: AI verification often fails before it even begins.

Not because the models are inaccurate, but because they are evaluating different interpretations of the same input.

When two AI systems analyze a piece of text, they may focus on different assumptions, interpret context differently, or extract different claims. One model may emphasize nuance while another prioritizes literal meaning. Even when both models are technically correct, their conclusions can diverge simply because they are not solving exactly the same problem. This creates a hidden layer of inconsistency: verification ends up measuring whether interpretations happen to overlap rather than whether a claim is true.

This is where Mira introduces a subtle but transformative idea: canonicalization before verification.

Instead of sending raw AI output directly to verification models, Mira first restructures the information into a standardized form. It extracts core claims, clarifies assumptions, aligns context, and removes ambiguity. The goal is not to change the meaning of the content but to ensure that every verifying system evaluates the same structured problem. In simple terms, it ensures everyone is answering the same question before deciding whether the answer is correct.
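To make the idea concrete, here is a minimal Python sketch of what a canonicalization step could look like. The source does not describe Mira's implementation, so everything here is an assumption. The `Claim` type and `canonicalize` function are hypothetical. Simple sentence splitting plus normalization stands in for the model-based claim extraction a production system would more likely use. The sketch illustrates one narrow point: two differently worded outputs that make the same claims under the same assumptions reduce to an identical, ordered claim list.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One atomic statement plus the shared assumptions it is evaluated under."""
    text: str
    context: tuple[str, ...]


def canonicalize(raw_output: str, assumptions: list[str]) -> list[Claim]:
    """Toy canonicalizer (hypothetical, not Mira's implementation).

    Splits text into sentences, normalizes each one, attaches the same
    normalized assumptions to every claim, and returns a deduplicated,
    sorted list so that every verifier receives identical input.
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", raw_output.strip())
    # Normalize assumptions and sort them so their order does not matter.
    context = tuple(sorted(a.strip().lower() for a in assumptions))
    seen: set[str] = set()
    claims: list[Claim] = []
    for sentence in sentences:
        # Collapse runs of whitespace, lowercase, drop trailing punctuation.
        text = re.sub(r"\s+", " ", sentence).strip().lower().rstrip(".!?")
        if text and text not in seen:
            seen.add(text)
            claims.append(Claim(text, context))
    # Deterministic ordering: same claims in, same list out.
    return sorted(claims, key=lambda c: c.text)


# Usage: two differently formatted outputs with the same content.
a = canonicalize("BTC rose 5% today.  Volume   doubled.", ["Prices in USD"])
b = canonicalize("Volume doubled. BTC rose 5% today.", ["prices in usd "])
print(a == b)  # True
```

Sorting and deduplication make the result independent of sentence order and spacing. The regex splitter is deliberately naive. For example, it would break after abbreviations such as "e.g.", which hints at why real claim extraction is harder than this sketch suggests.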

This step may seem technical, but its implications are profound. When verification models operate on standardized inputs, agreement becomes meaningful: consensus reflects a shared evaluation rather than a coincidental overlap in interpretation. Disagreement also becomes informative, because it points to a specific claim in dispute instead of a mismatch in framing. The verification process shifts from subjective alignment to structural clarity.
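The benefit of standardized inputs can be sketched the same way. Once every verifier sees the same list of claims, votes can be tallied claim by claim, and a split vote points to one specific claim rather than to a framing mismatch. This is a hypothetical Python illustration, not Mira's consensus mechanism. The names `verify_claims` and `Verifier` are assumptions, as is the two-thirds supermajority threshold. The verifiers are stand-in lambdas rather than real models.

```python
from typing import Callable

# Hypothetical verifier interface: takes one canonical claim, returns accept/reject.
Verifier = Callable[[str], bool]


def verify_claims(claims: list[str], verifiers: list[Verifier],
                  threshold: float = 2 / 3) -> dict[str, str]:
    """Tally per-claim votes from verifiers that all see the same claims.

    The 2/3 supermajority threshold is an illustrative assumption.
    """
    if not verifiers:
        raise ValueError("need at least one verifier")
    n = len(verifiers)
    results: dict[str, str] = {}
    for claim in claims:
        accepts = sum(1 for verify in verifiers if verify(claim))
        if accepts / n >= threshold:
            results[claim] = "verified"
        elif (n - accepts) / n >= threshold:
            results[claim] = "rejected"
        else:
            # No supermajority either way: the disagreement is localized to this claim.
            results[claim] = "disputed"
    return results


# Usage: four stand-in verifiers; two of them reject the second claim.
claims = ["btc rose 5% today", "volume doubled"]
verifiers: list[Verifier] = [
    lambda c: True,
    lambda c: True,
    lambda c: c != "volume doubled",
    lambda c: c != "volume doubled",
]
print(verify_claims(claims, verifiers))
# {'btc rose 5% today': 'verified', 'volume doubled': 'disputed'}
```

Because every verifier judged the identical claim strings, the "disputed" label says something precise: the verifiers disagree about whether volume doubled, not about what the question was.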

Without canonicalization, AI verification resembles a group of judges reading slightly different versions of the same case. Their rulings may differ not because they disagree on the facts, but because those facts were framed differently for each of them. With canonicalization, every judge receives the same structured case, so their agreement reflects a shared judgment on the same facts rather than interpretation drift.

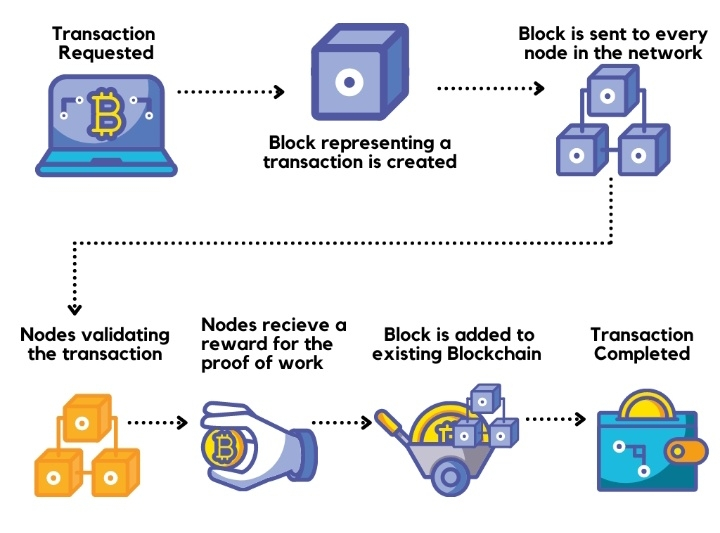

In decentralized ecosystems, this distinction matters enormously. Web3 systems depend on verification rather than trust. Smart contracts execute based on defined inputs. Governance decisions may rely on research summaries. Automated agents may trigger financial actions. If AI outputs feed these systems without structural alignment, small interpretive differences can lead to inconsistent outcomes.

Imagine AI-driven trading analysis feeding on-chain strategies: if verification models interpret risk assumptions differently, execution decisions may vary. Imagine DAO governance proposals analyzed by AI: if the models interpret the same policy differently, voters may receive conflicting conclusions. Imagine automated research agents supplying data to smart contracts: if the context is misaligned, execution may proceed on an incomplete understanding.

Canonicalization addresses this risk at the source.

By extracting claims, clarifying assumptions, and aligning context before verification, Mira transforms AI outputs from loosely interpreted responses into structured knowledge units. Verification becomes more reliable because ambiguity is reduced before evaluation begins.

This shift reflects a deeper philosophy: AI outputs should not be trusted simply because they are generated. They should be trusted only after structural alignment and verification.

In the broader evolution of AI and Web3, trust layers may become as essential as consensus layers. As AI becomes embedded in financial systems, governance processes, and autonomous agents, reliability must be provable. Transparency must replace opacity. Verification must replace assumption.

Canonicalization is not about making AI smarter. It is about making AI understandable, comparable, and verifiable.

On a human level, this evolution reflects a shift in how people relate to machine intelligence. For years, people have been asked to trust algorithmic decisions they cannot see or fully understand. Canonicalization and verification restore clarity. They allow users to see the structure behind conclusions, to understand the assumptions being evaluated, and to trust results because they are verifiable rather than mysterious.

As AI continues to expand into decentralized systems, the challenge will not be generating answers. AI already excels at that. The challenge will be ensuring those answers can be trusted across systems, contexts, and decisions.

Mira’s approach highlights an important truth: verification is only meaningful when everyone is verifying the same thing.

By aligning structure before judgment, canonicalization transforms AI outputs from interpretations into verifiable knowledge.

And in a world increasingly shaped by autonomous systems, verifiable knowledge is not a luxury.

It is the foundation of trust.
