If $MIRA created a multi-layer Truth Derivatives market where institutions hedge exposure to specific AI model failure domains, would AI risk become a structured financial product?

Last week I was testing an AI writing assistant before submitting a draft. The interface froze for half a second, refreshed, and silently rewrote one paragraph. No warning. No version diff. Just a subtle shift in tone and one statistic slightly “smoothed.” Nothing catastrophic. But it felt off. Not because it failed — because I had no way to price that failure.

That small friction exposed something structural. We treat AI outputs as deterministic utilities, yet their risk profile is probabilistic and domain-specific. An AI model might be 99% reliable in grammar correction and 82% reliable in financial reasoning under volatile data inputs. But contracts, insurance, and enterprise integrations treat “AI” as a single surface. There is no layered market that isolates exposure to distinct failure domains.

The misalignment is this: AI risk is bundled, while institutions manage risk in slices.

The mental model that helped me frame this is weather derivatives. Farmers don’t hedge “weather.” They hedge rainfall variance, frost frequency, wind-speed thresholds. Risk is decomposed into measurable triggers. A wheat farmer in Iowa doesn’t need protection against hurricanes in Florida; they need protection against a three-week drought in July.

Now apply that logic to AI systems.

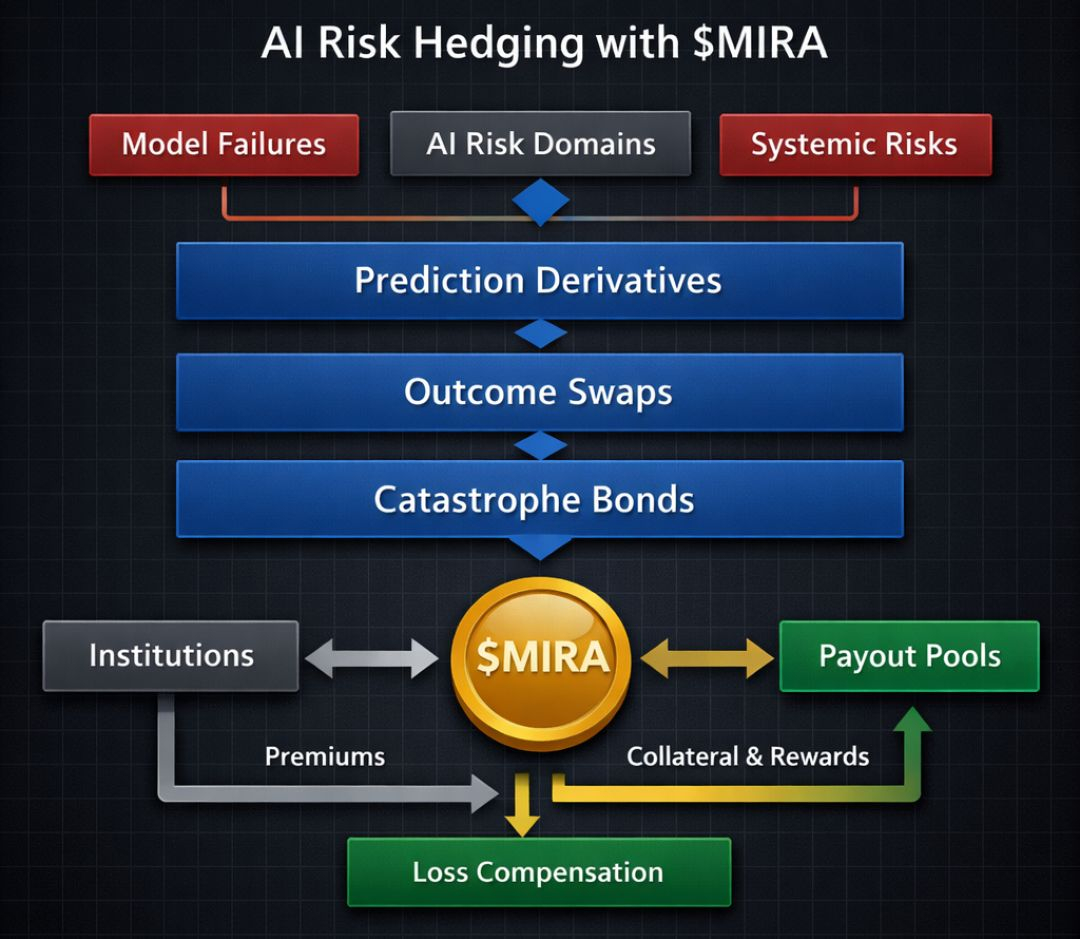

An enterprise using AI for legal document analysis doesn’t care about hallucination risk in casual chat. They care about citation integrity failure rates under ambiguous statutory interpretation. A logistics firm using AI forecasting cares about error amplification during supply shocks, not poetic phrasing drift. Risk domains are contextual, but our financial tooling around AI treats it as monolithic.

Blockchains like Ethereum (ETH), Solana (SOL), and Avalanche (AVAX) demonstrated how programmable settlement layers can decompose financial exposure into composable primitives such as options, perpetual futures (perps), and credit markets. The innovation wasn’t just faster execution. It was modular risk expression. Yet AI risk today has no equivalent modular layer.

If $MIRA were to build a multi-layer Truth Derivatives market, the objective wouldn’t be to speculate on AI narratives. It would be to financialize specific model failure domains as structured contracts.

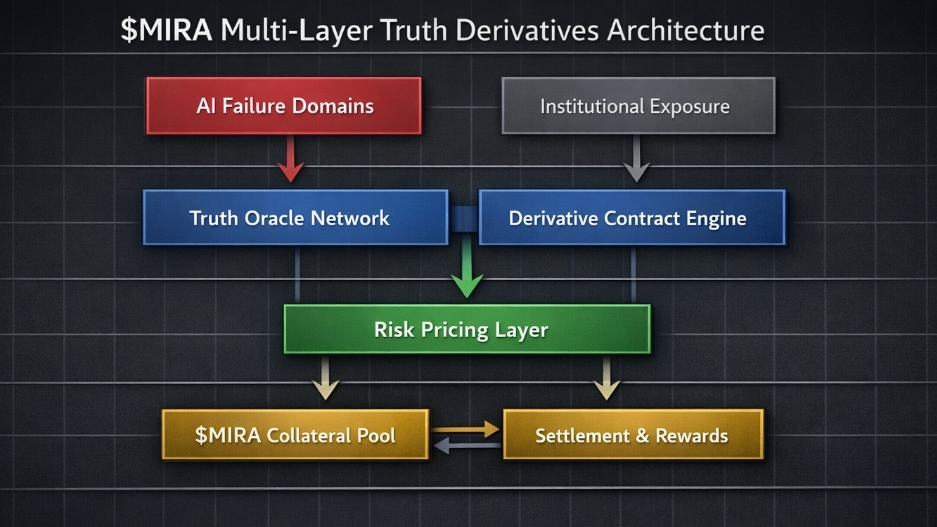

The architectural premise would rest on three layers:

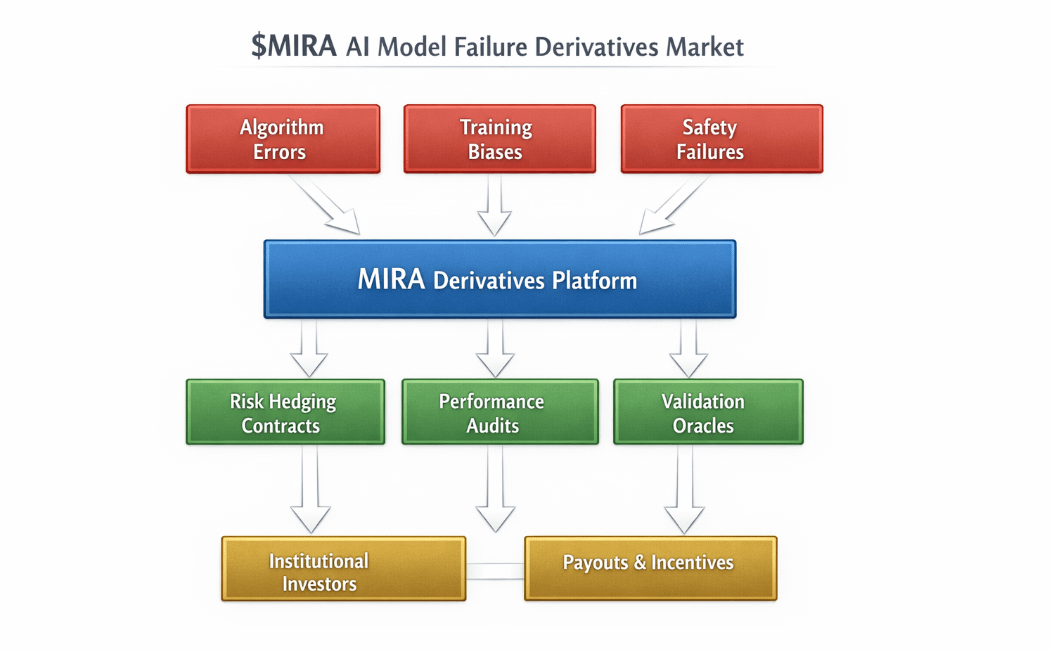

First, a data validation layer that continuously benchmarks AI models against domain-specific truth sets. These aren’t generic accuracy metrics, but curated datasets tied to real-world verticals — medical diagnosis error bands, financial miscalculation frequency, contract clause misclassification rates.
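To make the validation layer concrete, here is a minimal Python sketch of what a single benchmark pass could look like. Everything in it is an assumption for illustration: `BenchmarkItem`, `deviation_rate`, the exact-match scoring rule, and the toy truth set are hypothetical and not part of any published $MIRA design. A real vertical would need a much richer scoring rule than string equality.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class BenchmarkItem:
    prompt: str
    expected: str  # reference answer from a curated, domain-specific truth set


def deviation_rate(items: Sequence[BenchmarkItem],
                   model_answer: Callable[[str], str]) -> float:
    """Fraction of truth-set items where the model's answer differs from the reference."""
    if not items:
        raise ValueError("truth set is empty")
    misses = sum(
        1 for item in items
        if model_answer(item.prompt).strip() != item.expected.strip()
    )
    return misses / len(items)


# Toy truth set and a canned "model" (both hypothetical).
truth_set = [
    BenchmarkItem("2 + 2", "4"),
    BenchmarkItem("10 / 4", "2.5"),
    BenchmarkItem("7 * 6", "42"),
    BenchmarkItem("9 - 3", "6"),
]
canned_answers = {"2 + 2": "4", "10 / 4": "2", "7 * 6": "42", "9 - 3": "6"}

rate = deviation_rate(truth_set, lambda prompt: canned_answers[prompt])
print(rate)  # 0.25: one wrong answer out of four
```

The point is only that each failure domain reduces to a number that can be recomputed by anyone holding the same truth set, which is what makes it usable as a settlement input.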

Second, a risk tokenization layer where each failure domain is represented as a derivative instrument. For example: “Legal Citation Deviation Index (LCDI)” or “Macro Forecast Error Spread (MFES).” These contracts would settle based on statistically verified deviation thresholds over defined time windows.
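A rough sketch of how such a contract might decide settlement, assuming the index is a daily deviation rate and the trigger is the mean over a trailing window. The function name, the mean-over-window rule, and the 2% / 30-day figures are illustrative assumptions, not a specification.

```python
from typing import Sequence


def settles_in_the_money(daily_deviation: Sequence[float],
                         threshold: float,
                         window_days: int) -> bool:
    """True if the mean deviation over the last `window_days` observations exceeds `threshold`."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    if len(daily_deviation) < window_days:
        raise ValueError("not enough observations to cover the window")
    window = daily_deviation[-window_days:]
    return sum(window) / window_days > threshold


# Hypothetical index history: 20 calm days at 1%, then 10 degraded days at 5%.
degraded = [0.01] * 20 + [0.05] * 10
stable = [0.01] * 30

print(settles_in_the_money(degraded, threshold=0.02, window_days=30))  # True
print(settles_in_the_money(stable, threshold=0.02, window_days=30))    # False
```

A mean is the simplest choice; a real contract might prefer a median or a count of breach days to blunt the effect of a single outlier reading.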

Third, a liquidity coordination layer where institutions hedge exposure by taking positions opposite their operational AI usage. If a bank relies heavily on AI-driven underwriting, it could hedge against “Underwriting Bias Variance” contracts. If model performance degrades beyond a set tolerance band, the derivative pays out.
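The payout side can be sketched the same way. The version below assumes a capped linear payoff quoted in basis points, which is one common shape for index-triggered contracts. The function, its parameters, and all the numbers are hypothetical.

```python
def hedge_payout(observed_bps: int, tolerance_bps: int,
                 payout_per_bp: int, cap: int) -> int:
    """Pay a fixed amount per basis point of deviation above the tolerance band, up to `cap`."""
    excess_bps = max(0, observed_bps - tolerance_bps)
    return min(cap, excess_bps * payout_per_bp)


# Hypothetical bank hedge: tolerance band of 200 bps, observed deviation of 500 bps.
payout = hedge_payout(observed_bps=500, tolerance_bps=200, payout_per_bp=100, cap=50_000)
operational_loss = 40_000  # assumed loss caused by the degraded model
net_loss = operational_loss - payout

print(payout)    # 30000
print(net_loss)  # 10000
```

Inside the tolerance band the contract pays nothing, so the institution only pays the premium; beyond it, the payout offsets part of the operational loss rather than eliminating it.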

The token utility of $MIRA in this structure would not revolve around passive holding. It would likely serve as collateral and staking weight within validation pools. Validators who benchmark AI outputs would stake $MIRA and earn rewards tied to accurate reporting of deviation metrics. Incorrect or manipulated reporting would trigger slashing, creating economic pressure toward integrity.
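One way the slashing pressure could work, sketched under heavy assumptions: validators report a deviation reading in basis points, the stake-weighted median is taken as consensus, and anyone too far from it loses a percentage of stake. The function names, the median rule, and the parameters are all hypothetical, and reward distribution is left out to keep the sketch short.

```python
from typing import Dict, List, Tuple


def stake_weighted_median(reports: List[Tuple[int, int]]) -> int:
    """reports: (reported_bps, stake) pairs. Returns the value where cumulative stake reaches half."""
    if not reports:
        raise ValueError("no reports submitted")
    total_stake = sum(stake for _, stake in reports)
    running = 0
    for value, stake in sorted(reports):
        running += stake
        if running * 2 >= total_stake:
            return value
    raise AssertionError("unreachable for non-empty reports")


def settle_round(reports: Dict[str, Tuple[int, int]],
                 tolerance_bps: int,
                 slash_pct: int) -> Tuple[int, Dict[str, int]]:
    """Slash `slash_pct` percent of stake from validators outside the tolerance around consensus."""
    consensus = stake_weighted_median(list(reports.values()))
    new_stakes = {}
    for name, (value, stake) in reports.items():
        if abs(value - consensus) <= tolerance_bps:
            new_stakes[name] = stake
        else:
            new_stakes[name] = stake - stake * slash_pct // 100
    return consensus, new_stakes


# Hypothetical round: validator "c" reports a wildly different reading.
round_reports = {"a": (210, 100), "b": (205, 300), "c": (900, 50)}
consensus, stakes = settle_round(round_reports, tolerance_bps=10, slash_pct=20)

print(consensus)  # 205
print(stakes)     # {'a': 100, 'b': 300, 'c': 40}
```

Note that this rewards agreement with the majority of stake, not agreement with the truth. If most stake colludes, the honest outlier gets slashed, which is exactly the manipulation risk the structure has to guard against.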

Imagine a simple embedded visual: a three-column table. The first column lists AI failure domains (e.g., factual hallucination rate, numerical miscalculation, domain drift). The second column shows corresponding derivative contracts with measurable thresholds (e.g., >2% deviation over 30 days). The third column shows who hedges them (legal firms, trading desks, insurers). The table reveals that AI risk is not abstract — it is segmentable, measurable, and hedgeable.

Value capture would emerge from settlement fees, staking participation, and issuance decay mechanisms. Suppose total $MIRA supply is capped at a fixed maximum and emission rewards decay each year by a programmed percentage. Validators would have to maintain a minimum stake to participate in specific high-sensitivity domains, creating tiered access. Institutions hedging risk would need to acquire $MIRA to meet margin requirements, embedding demand into operational usage rather than narrative cycles.
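As a quick illustration of what a decaying emission schedule implies, here is a sketch that assumes a simple geometric decay. The starting emission and the 50% decay rate are made-up numbers chosen for easy arithmetic. They are not $MIRA's actual tokenomics.

```python
from typing import List


def emission_schedule(initial_emission: float, annual_decay: float, years: int) -> List[float]:
    """Yearly emissions when each year's reward is (1 - annual_decay) times the previous year's."""
    if not 0 < annual_decay < 1:
        raise ValueError("annual_decay must be strictly between 0 and 1")
    return [initial_emission * (1 - annual_decay) ** year for year in range(years)]


# Hypothetical parameters: 1,000,000 tokens in year one, decaying 50% per year.
schedule = emission_schedule(1_000_000, 0.5, 4)
emitted_so_far = sum(schedule)
long_run_total = 1_000_000 / 0.5  # geometric series limit: initial / decay

print(schedule)        # [1000000.0, 500000.0, 250000.0, 125000.0]
print(emitted_so_far)  # 1875000.0
print(long_run_total)  # 2000000.0
```

Under geometric decay, total rewards can never exceed the initial emission divided by the decay rate, so a fixed supply cap and a decay percentage are two views of the same constraint.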

The incentive loop becomes circular but grounded. Institutions demand hedging → liquidity providers price risk → validators benchmark performance → accurate data improves derivative pricing → developers receive performance signals and tighten error bands, which in turn changes what institutions need to hedge.

Developers, in turn, would behave differently. If their models are publicly benchmarked in derivative markets, reputational risk becomes financialized. A model with consistently widening “Truth Spread” would raise hedging costs for its users. Developers would have economic motivation to tighten error bands, not because of PR pressure, but because integration costs become measurable.

User incentives shift too. Enterprises selecting AI vendors would compare not just feature sets, but derivative spreads. An AI model with lower volatility in its failure index would command institutional preference, similar to how credit ratings influence bond yields.

But the structure carries risks.

The primary assumption is that failure domains can be objectively measured without manipulation. If truth datasets are biased or incomplete, derivative settlements become distorted. There is also the risk of reflexivity: if markets price high failure probability, institutions might over-hedge, amplifying perceived instability even if underlying performance is stable. Liquidity fragmentation across too many micro-domains could also reduce efficiency.

Governance would need adaptive mechanisms. Domain creation proposals might require community approval and minimum liquidity commitments before activation. Sunset clauses for inactive derivatives could prevent clutter. Transparency in benchmarking methodology would be non-negotiable.

The deeper economic implication is that AI systems would no longer be treated as black-box utilities. They would become financially audited performance entities. Risk would move from implicit trust to explicit pricing.

In that environment, $MIRA would not represent belief in AI progress. It would represent participation in AI accountability infrastructure. A token embedded not in narrative speculation, but in the collateralization of measurable uncertainty.

When AI risk becomes structured, it stops being philosophical. It becomes a line item. And once uncertainty can be sliced, priced, and hedged, it ceases to be abstract and starts behaving like every other mature market exposure.