I still remember the first time a robot made me uneasy without doing anything “wrong” in the obvious sense. It wasn’t a crash or a dramatic malfunction. It was a small drift, a slightly wider turn, a movement that landed just a little outside the pattern everyone had gotten comfortable with. Nothing broke. Nobody screamed. But the air in the room changed anyway. People stopped joking. Someone took a step back like their body understood the risk before their brain could explain it. And in that quiet moment, the scary part wasn’t the machine. It was the question that showed up behind it, almost like a shadow: if this goes bad, who’s actually responsible?

That question follows robotics everywhere once you leave the controlled demos and step into real environments where the stakes have weight. A robot in a warehouse doesn’t just “run software.” It shares space with humans who are tired, distracted, rushing, carrying things, trying to finish a shift. A robot in a hospital isn’t just a navigation problem. It’s a hallway full of urgency, liability, and people who can’t afford delays. A delivery robot on a sidewalk isn’t a cute gadget. It’s an object that can block a wheelchair ramp or spook a child on a bike or create a chain reaction of little conflicts nobody planned for. Most people don’t realize how quickly “cool tech” turns into “real responsibility” the second a machine’s decisions touch the physical world.

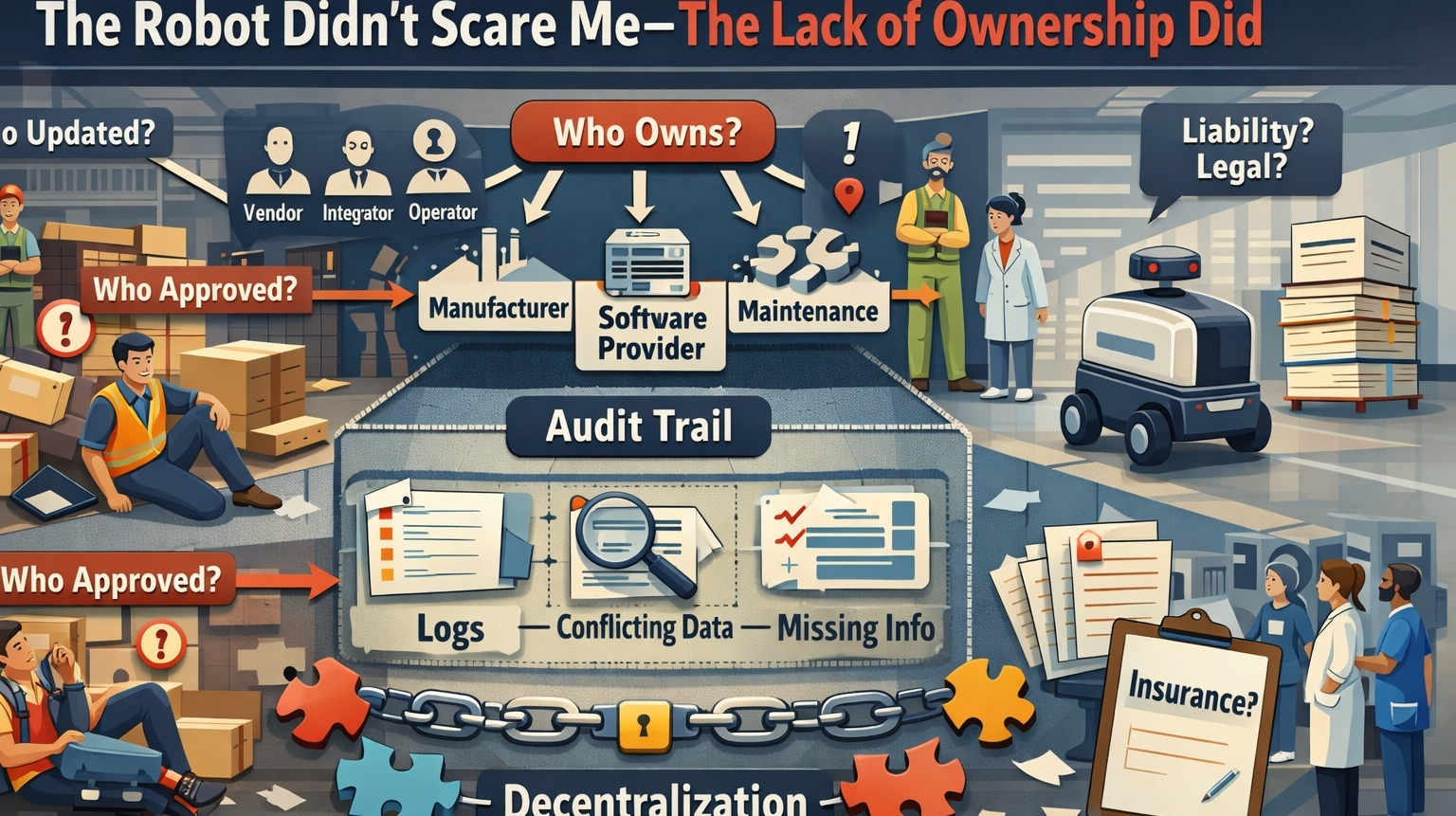

And this is why I keep coming back to the phrase “accountability before decentralization.” Not because it sounds clever, but because it feels like something you learn the hard way. Decentralization is the shiny idea people like to reach for when they want to sound forward-thinking. It’s the promise of freedom, resilience, ownership spread out, no single gatekeeper controlling the future. I get why it’s attractive. I really do. But the truth is, when a robot fails in the real world, decentralization can also feel like fog. It can feel like the system was built to make responsibility harder to grab.

If you’ve ever had to untangle a messy incident with a machine, you know what I mean. You don’t get one clean story. You get fragments. You get dashboards that don’t match. You get logs that don’t line up because clocks drift or events weren’t captured or something overwrote history to save storage. You get teams speaking in careful language because everyone’s quietly thinking about liability. You get “we’ll need to escalate that request” or “we can’t share that because it’s proprietary” or “that data lives with the vendor.” And meanwhile, the physical world is sitting right there with the consequences. Someone got hurt. Something got damaged. Work stopped. And the people impacted don’t want a philosophy lecture. They want the truth.

That’s what makes the “Fabric” conversation interesting to me, even with all the baggage that comes with anything that smells like blockchain. The way it’s being framed, at least in the more serious takes I’ve seen, isn’t just “let’s decentralize robots because decentralization is cool.” It’s more like: if robots are going to be operated, updated, maintained, insured, and coordinated across multiple parties, then we need a shared accountability layer that doesn’t depend on one company’s internal logs or one vendor’s cloud dashboard. The focus becomes identity, verifiable records, governance rules that can be audited, and a system where you can reconstruct what happened without relying on whoever has the most power in the room.
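To make "verifiable records" a little less abstract, here is a minimal sketch of one generic way to get a tamper-evident history: a hash chain, where each record commits to the one before it. This is not Fabric's actual design, which I haven't seen specified at this level. The field names (`robot_id`, `actor`, `event`) and the helpers `append_record` and `verify_chain` are my own assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous hash" for the very first record


def record_hash(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list, robot_id: str, actor: str, event: str) -> dict:
    # Each new record stores the hash of the previous one, forming a chain.
    prev = log[-1]["hash"] if log else GENESIS
    body = {"seq": len(log), "robot_id": robot_id, "actor": actor,
            "event": event, "prev_hash": prev}
    entry = dict(body, hash=record_hash(body))
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    # Recompute every hash and check every link; any in-place edit breaks it.
    prev = GENESIS
    for i, entry in enumerate(log):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["seq"] != i or entry["prev_hash"] != prev:
            return False
        if record_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


log = []
append_record(log, "amr-07", "vendor-ci", "deployed nav-policy 2.4.1")
append_record(log, "amr-07", "site-operator", "raised speed limit")
print(verify_chain(log))  # True

log[0]["event"] = "deployed nav-policy 2.4.0"  # someone quietly edits history
print(verify_chain(log))  # False
```

A chain like this only shows that stored records were altered after the fact. It says nothing about whether the records were true when they were written, and it does not by itself detect records dropped from the end; for that, other parties would need to hold a copy of the latest hash.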

And honestly, that doesn’t feel like hype to me. It feels like someone finally staring at the boring, painful parts of robotics and admitting those parts are the whole game. Because most robotics teams don’t fail on the “robot can’t move” problem. They fail on the “we can’t manage change” problem. They fail on the “we don’t actually know what version is running where” problem. They fail on the “we can’t prove what was true yesterday” problem. The robot becomes a mirror reflecting the organization’s ability to handle responsibility, and a lot of organizations are not as ready as they think they are.

But I also don’t want to pretend accountability is easy just because you say the word confidently. You can log everything and still be unsafe. You can build a beautiful ledger and still have garbage data going in. You can prove a robot did something and still not prevent it from doing it again. If a robot is compromised, it can sign lies. If a sensor is wrong, you can preserve the wrong story forever. If governance is captured by whoever has the most money or influence, “decentralized” becomes a costume. And if you start mixing economic incentives into safety-critical behavior, you can create weird pressures that make people hide incidents instead of surfacing them early.

What gets me, though, is this: accountability isn’t just about blame. It’s about making reality non-negotiable. When something goes wrong, you need the ability to answer questions that hurt, without turning the truth into a bargaining process. Who pushed the update? Who approved it? What changed in the model or the navigation policy? Was the robot running a certified configuration or some half-deployed rollout? Did someone override a safety constraint because the system was being “too conservative” and it slowed down operations? When you can’t answer those questions, you’re not just dealing with a technical issue. You’re dealing with a trust collapse.
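Those questions can be treated as required fields rather than as things someone reconstructs from memory afterwards. The sketch below is a hypothetical record shape, not any real system's schema: `ChangeRecord`, its field names, and the `unanswered` helper are all assumptions I'm making to show the idea.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ChangeRecord:
    # None means "nobody recorded this", which is different from "no".
    robot_id: str
    pushed_by: Optional[str]
    approved_by: Optional[str]
    change_summary: Optional[str]
    config_certified: Optional[bool]
    safety_override: Optional[bool]


# Maps each field to the incident question it is supposed to answer.
QUESTIONS = {
    "pushed_by": "Who pushed the update?",
    "approved_by": "Who approved it?",
    "change_summary": "What changed in the model or the navigation policy?",
    "config_certified": "Was the robot running a certified configuration?",
    "safety_override": "Was a safety constraint overridden?",
}


def unanswered(record: ChangeRecord) -> List[str]:
    # Return the questions this record cannot answer.
    return [q for name, q in QUESTIONS.items() if getattr(record, name) is None]


record = ChangeRecord(
    robot_id="amr-07",
    pushed_by="vendor-ci",
    approved_by=None,
    change_summary="nav-policy 2.4.0 -> 2.4.1",
    config_certified=True,
    safety_override=None,
)
for question in unanswered(record):
    print(question)
# Who approved it?
# Was a safety constraint overridden?
```

The point of a check like this is timing: a deployment pipeline could refuse a rollout while `unanswered` is non-empty, so the gaps surface before the incident instead of during the investigation.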

And trust in robotics is fragile in a way people underestimate. One public failure doesn’t just damage one product. It makes people suspicious of the whole category. It makes workers resentful because they feel like they’re being asked to take physical risks for someone else’s efficiency story. It makes managers defensive because they fear downtime and lawsuits. It makes regulators more aggressive because they can’t tolerate systems that can’t explain themselves. And it makes ordinary people feel like the future is being pushed onto them without anyone agreeing to carry the consequences when it bites.

This is where decentralization gets complicated, because decentralization is often sold as if it simply removes single points of failure. But in robotics, a “single point of responsibility” is sometimes the thing that keeps people safe. When something starts behaving dangerously, you don’t want a vote. You don’t want a debate about permissions. You want a stop. You want a clear right to intervene, a clear chain of authority, and an incident response culture that treats robotics like critical infrastructure, not like an app you can patch casually and move on from.

So the version of “accountability before decentralization” that feels real to me is almost painfully practical. Before you distribute control, you define control. Before you let multiple parties touch the same fleet, you define who can intervene and how quickly. Before you talk about robot economies and autonomous labor markets, you prove you can investigate a real incident without the story falling apart into “not us.” Before you chase scale, you build the kind of memory that doesn’t flinch when lawyers show up.
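"Define who can intervene" can be written down very literally. Here is a minimal sketch, under my own assumptions, of an asymmetric rule: many roles may stop a robot immediately, with no vote, while only a narrower set may clear the stop, and every attempt is remembered. `FleetControl` and the role names are hypothetical, and a real deployment would also rely on a hardware emergency stop rather than software alone.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class FleetControl:
    stop_roles: Set[str]    # roles allowed to halt the robot immediately
    resume_roles: Set[str]  # narrower set of roles allowed to clear a stop
    stopped: bool = False
    history: List[Tuple[str, str, str]] = field(default_factory=list)

    def stop(self, actor: str, role: str) -> bool:
        # No quorum and no debate: one authorized party is enough to stop.
        if role not in self.stop_roles:
            self.history.append((actor, role, "stop denied"))
            return False
        self.stopped = True
        self.history.append((actor, role, "stopped"))
        return True

    def resume(self, actor: str, role: str) -> bool:
        # Resuming is deliberately harder than stopping.
        if not self.stopped or role not in self.resume_roles:
            self.history.append((actor, role, "resume denied"))
            return False
        self.stopped = False
        self.history.append((actor, role, "resumed"))
        return True


control = FleetControl(
    stop_roles={"floor-worker", "site-operator", "vendor-oncall"},
    resume_roles={"site-operator"},
)
print(control.stop("dana", "floor-worker"))    # True
print(control.resume("dana", "floor-worker"))  # False
print(control.resume("lee", "site-operator"))  # True
```

The asymmetry is the design choice: the person standing next to the machine can always halt it, and the log of who stopped, who was denied, and who resumed is exactly the kind of memory an investigation needs later.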

Because that’s the part nobody likes to say out loud: the real test of robotics isn’t whether the robot can do the job on a good day. The real test is whether the system around it can handle a bad day without turning into chaos. A robot that works 99% of the time is still a problem if the 1% is untraceable, unexplainable, and impossible to assign responsibility for. People can forgive mistakes. They struggle to forgive systems that feel designed to dodge accountability.

I think about this sometimes in small, ordinary scenes, because that’s where the future actually arrives. A robot pauses in a hallway and starts blocking traffic. A worker tries to go around it and mutters something sharp. Someone else is late because the machine decided it wasn’t safe to move forward. There’s no dramatic failure, just friction. And if nobody can explain what’s happening or who can fix it, people don’t feel impressed. They feel trapped. They feel like the machine has more authority over their time and space than they do, and nobody can tell them where to direct their anger.

That’s what accountability protects against. Not just injuries and lawsuits, but that creeping feeling that technology is spreading faster than responsibility. If Fabric, or anything like it, can make robotics deployments more auditable, more traceable, and harder to “hand-wave” when something goes wrong, then it’s addressing something that actually matters. Not the headline dream, but the human reality.

And I’ll be honest: I don’t care how decentralized robotics becomes if it can’t tell the truth. I don’t care how elegant the governance looks if the system can’t produce a coherent timeline after an incident. I don’t care how big the vision is if responsibility dissolves the moment there’s real harm. If we’re going to put autonomous machines into shared spaces with human bodies and human lives, the minimum price of entry is accountability that holds up under pressure.

Decentralization can come later. It can come when the backbone exists, when the rules are clear, when the emergency brakes are real, when the evidence can’t be rewritten, and when people stop treating responsibility like something they can architect away. Because in the end, robots aren’t just machines moving around. They’re decisions moving around. And if nobody owns those decisions when they hurt someone, the future won’t feel innovative. It’ll feel careless. And people don’t forget careless. They carry it. They talk about it. They build policies around it. They vote with their fear.

The future of robotics won’t be decided by how advanced the machines are. It’ll be decided by whether the humans behind them are willing to be accountable, in a plain, visible, undeniable way, before they try to distribute power and call it progress.
