Earlier this week I was watching a verification round when one fragment refused to close. Nothing looked broken. The validator mesh was still moving, but the round time kept climbing while confidence vectors were still forming.

Earlier that morning another fragment had sealed almost instantly.

fragment_id: c-6104-j

round_time_ms: 812

quorum_weight: 0.94

dissent_weight: 0.02

verified: true

A few minutes later the round that caught my attention looked very different.

fragment_id: c-6118-j

round_time_ms: 68412

quorum_weight: 0.82

dissent_weight: 0.16

verified: true

I actually refreshed the panel once just to make sure the round time wasn't stuck.

Both fragments produced the same certificate.

verified: true

But the work required to reach that certificate was completely different.

That difference is easy to miss if you only look at the certificate itself. Mira’s verification output is binary. A fragment either passes verification or it doesn't.

The proof record tells a more detailed story.
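To make that concrete, here is a minimal Python sketch that puts the two proof records above side by side. The field names are the ones shown on the panel. The `ProofRecord` class and the comparison are my own illustration, not part of Mira's tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProofRecord:
    # Field names mirror the panel output quoted above.
    fragment_id: str
    round_time_ms: int
    quorum_weight: float
    dissent_weight: float
    verified: bool


fast = ProofRecord("c-6104-j", 812, 0.94, 0.02, True)
slow = ProofRecord("c-6118-j", 68412, 0.82, 0.16, True)

# The certificate is identical...
same_certificate = fast.verified == slow.verified

# ...but the path to it is not.
time_ratio = slow.round_time_ms / fast.round_time_ms
dissent_ratio = slow.dissent_weight / fast.dissent_weight

print(f"same certificate: {same_certificate}")
print(f"round time ratio: {time_ratio:.1f}x")
print(f"dissent ratio:    {dissent_ratio:.1f}x")
```

The slow round took roughly 84 times longer and carried eight times the dissent weight, and none of that shows up in `verified: true`.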

Validators aren't only staking tokens behind a result. They're also doing the inference work required to produce that result in the first place.

Staking is usually where the description ends. Validators lock $MIRA, accurate verification earns rewards, and incorrect verification risks losing stake. That part is real, but it is only half of what happens during a verification round.

Before any stake commits, validators run inference on the fragment. Confidence vectors form as models evaluate the claim. Only after that evaluation stabilizes does the validator attach stake behind the outcome.
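The ordering in that description can be sketched in a few lines. Everything here is hypothetical: the `stabilized_at` helper, the tolerance, and the window are stand-ins I picked to illustrate "evaluate first, stake second", not Mira's actual stabilization rule.

```python
from typing import Optional, Sequence


def stabilized_at(confidences: Sequence[float],
                  tolerance: float = 0.01,
                  window: int = 3) -> Optional[int]:
    """Return the first step index at which `window` consecutive changes in
    confidence were all below `tolerance`, or None if that never happens.
    The rule itself is an assumption made for illustration."""
    calm = 0  # consecutive small changes seen so far
    for step in range(1, len(confidences)):
        if abs(confidences[step] - confidences[step - 1]) < tolerance:
            calm += 1
            if calm >= window:
                return step
        else:
            calm = 0
    return None


def run_round(confidences: Sequence[float], stake: float) -> dict:
    """Inference comes first; stake is attached only once confidence settles."""
    step = stabilized_at(confidences)
    if step is None:
        # Confidence never settled, so nothing is staked.
        return {"committed": False, "stake": 0.0,
                "inference_steps": len(confidences)}
    return {"committed": True, "stake": stake, "inference_steps": step + 1}


# A well-known fact settles right away; an interpretive clause wanders first.
simple = [0.97, 0.97, 0.97, 0.97]
interpretive = [0.55, 0.70, 0.62, 0.74, 0.80, 0.805, 0.806, 0.806]

print(run_round(simple, stake=100.0))
print(run_round(interpretive, stake=100.0))
```

Both made-up traces end with the same stake committed. The interpretive one just burns twice as many inference steps before it gets there.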

Every verification round therefore carries two costs at the same time. One is economic because validators place $MIRA behind their decision. The other is computational because the models must process the fragment before deciding how confident they are.

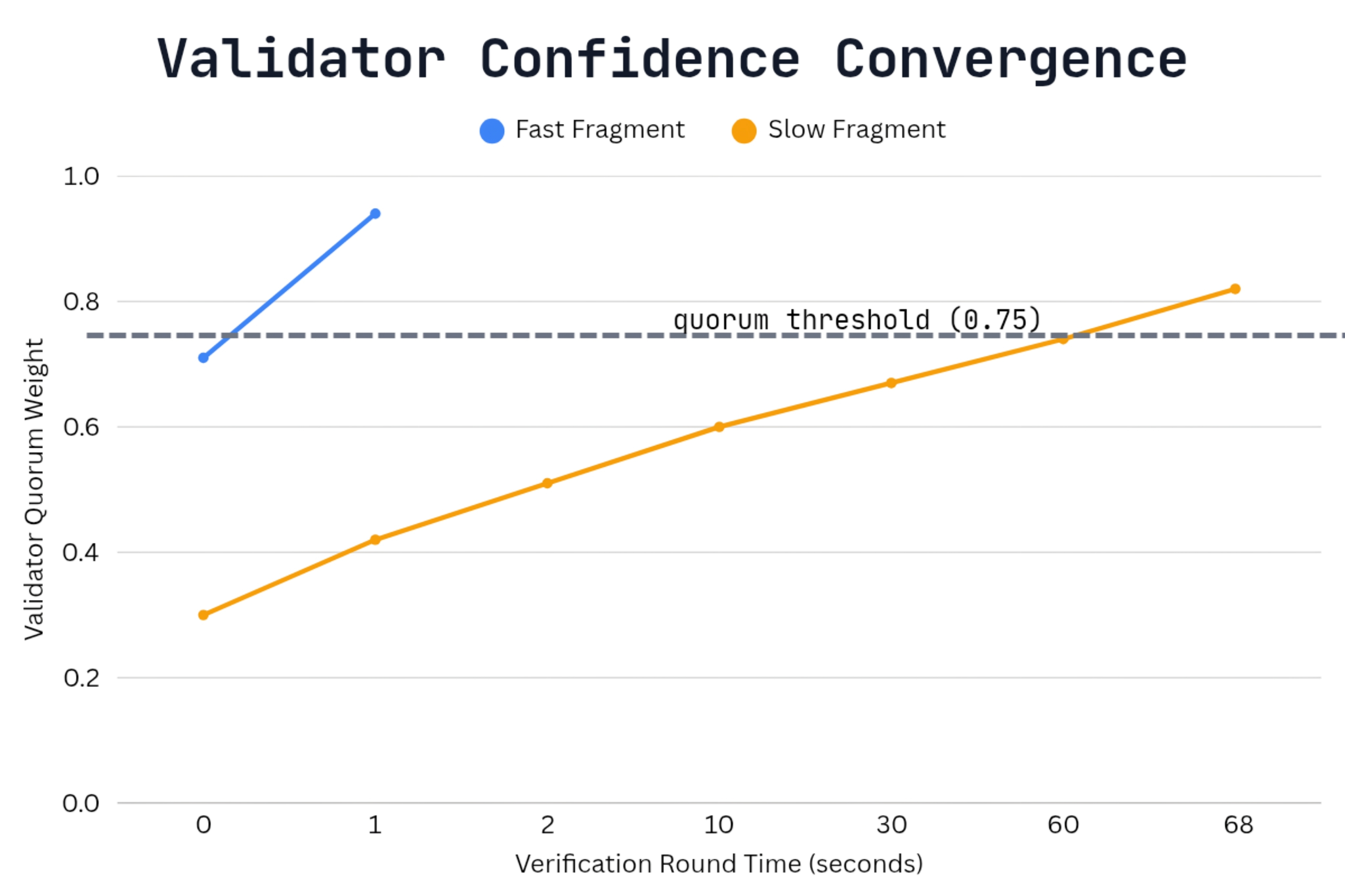

Some fragments make that work almost invisible. A simple date reference or widely known fact stabilizes confidence vectors almost immediately. The validator mesh converges quickly because every model recognizes the claim.

Other fragments behave differently. Jurisdiction clauses, conditional language, and policy interpretation require deeper evaluation before validators reach the same conclusion. Confidence vectors move more slowly, and the round runs longer before quorum forms and the validator set finally crosses the threshold.
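One way to picture quorum forming: validator weight accumulates as each validator finishes evaluating, and the round seals once the agreeing weight crosses a threshold. The threshold value, the commit times, and the `seal_time_ms` helper below are all assumptions for illustration. The proof records I was watching only expose the final weights and the round time.

```python
from typing import List, Optional, Tuple

# (time_ms at which the validator commits, weight, agrees_with_majority)
Commit = Tuple[int, float, bool]

THRESHOLD = 0.67  # hypothetical quorum threshold, not a documented Mira value


def seal_time_ms(commits: List[Commit], threshold: float) -> Optional[int]:
    """Return the time at which agreeing weight first reaches `threshold`,
    or None if the round never gets there."""
    agreeing = 0.0
    for time_ms, weight, agrees in sorted(commits):
        if agrees:
            agreeing += weight
        if agreeing >= threshold:
            return time_ms
    return None


# Made-up commit timelines: same validator weights, very different pacing.
quick = [(100, 0.4, True), (150, 0.4, True), (200, 0.2, True)]
slow = [(100, 0.4, True), (5000, 0.2, False),
        (30000, 0.2, True), (60000, 0.2, True)]

print(seal_time_ms(quick, THRESHOLD))
print(seal_time_ms(slow, THRESHOLD))
```

In this toy version a single dissenting validator and two late commits are enough to stretch the round from a fraction of a second to a full minute, while the sealed outcome stays the same.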

Both fragments still produce the same certificate. The network simply records that the fragment passed verification.

But the work required to reach that result is not the same.

Watching enough verification rounds makes the pattern difficult to ignore. Simple fragments settle almost instantly, while interpretive fragments take longer before the mesh stabilizes.

The proof records are mapping something else entirely.

Not a price curve.

A work curve.

Every fragment carries a different amount of computational effort before the validator mesh decides it is safe to seal the certificate. Yet the reward structure treats successful verification the same whether the round required a second of inference or a minute of it.

Validators inside the mesh feel that difference first. Some rounds are cheap. Others require sustained computation before the system settles.

$MIRA stake only really matters here if the reward curve eventually reflects the actual cost of that inference work. Right now both the cheap round and the expensive round pay the same reward, and validators quietly absorb the difference.
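The arithmetic behind that is short. The sketch below assumes a flat reward per sealed round (the value `1.0` is a placeholder unit, not a real $MIRA figure) and treats round time as a rough proxy for inference cost. That proxy is my simplification, since wall-clock round time may also include waiting on other validators.

```python
FLAT_REWARD = 1.0  # placeholder unit; the actual reward amount is an assumption

# Round times taken from the two proof records earlier in the post.
rounds_ms = {"c-6104-j": 812, "c-6118-j": 68412}

# Reward earned per second of round time under a flat payout.
reward_per_second = {
    fragment: FLAT_REWARD / (ms / 1000)
    for fragment, ms in rounds_ms.items()
}

for fragment, rate in reward_per_second.items():
    print(f"{fragment}: {rate:.4f} reward units per second")

gap = reward_per_second["c-6104-j"] / reward_per_second["c-6118-j"]
print(f"the cheap round pays about {gap:.0f}x more per second")
```

Under those assumptions the fast fragment pays about 84 times more per second of round time than the slow one. That gap is what validators absorb when the payout is flat.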

The real test is whether validators start avoiding expensive fragments when the reward curve does not compensate for the inference cost.

If that happens, the proof records will show it first.