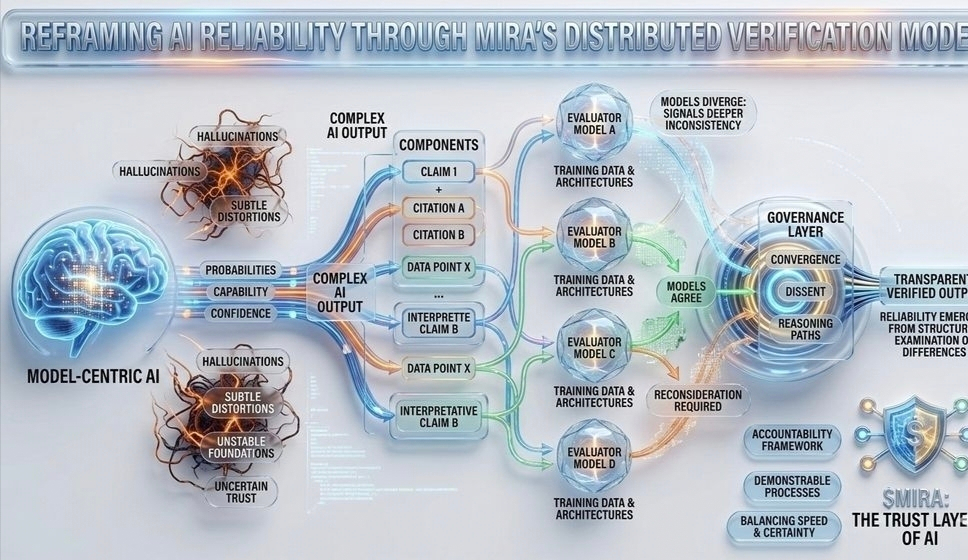

Most AI outputs look confident, but confidence isn’t proof. Mira Network tackles this overlooked trust gap. Modern AI can generate answers that feel certain, yet hallucinations and subtle bias show how fragile that trust really is. In critical use cases such as autonomous systems, finance, or decision-making, relying on unverified AI output could lead to serious consequences.

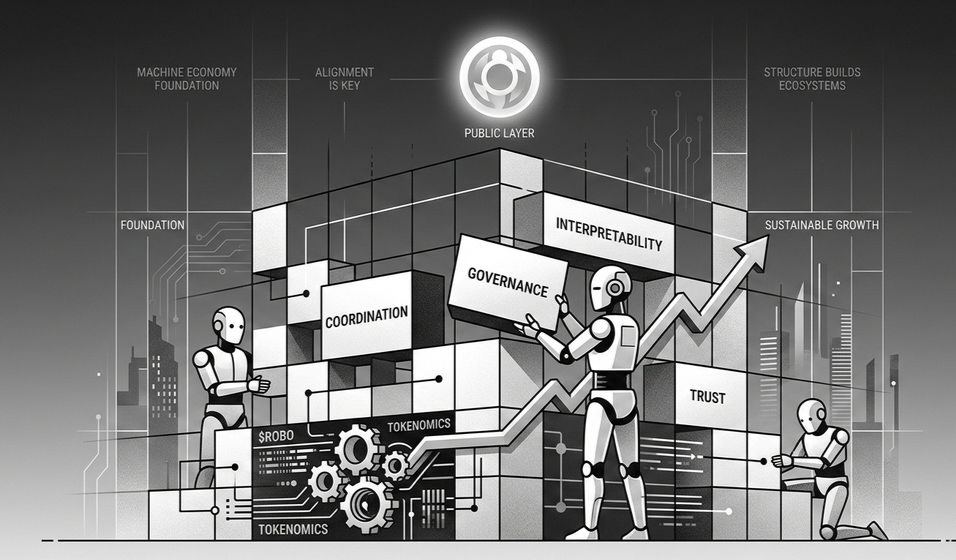

Mira Network approaches this challenge differently. Instead of trusting a single output, it breaks a response into individual claims and distributes them across independent AI models for verification. Each claim is then cryptographically validated through blockchain consensus, and economic incentives give participants a reason to stay honest. Trust is no longer assumed; it’s earned.
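To make the idea concrete, here is a minimal Python sketch of claim-level verification. It is an illustration only, not Mira Network’s actual protocol or API: the function names, the sentence-based claim splitter, and the toy verifiers are all hypothetical, and a simple majority vote stands in for blockchain consensus. Cryptographic validation and economic incentives are left out entirely.

```python
from typing import Callable, Dict, List

# Hypothetical verifier: takes one claim and returns True if it accepts it.
# In a real system each verifier would be an independent AI model.
Verifier = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_response(response: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Accept a claim only if a strict majority of verifiers accept it."""
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        votes = sum(1 for verify in verifiers if verify(claim))
        results[claim] = votes * 2 > len(verifiers)
    return results


# Toy stand-ins for independent models (purely illustrative).
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_model(claim: str) -> bool:
    return claim in KNOWN_FACTS


def keyword_model(claim: str) -> bool:
    return "Paris" in claim


def gullible_model(claim: str) -> bool:
    return True  # accepts everything, so it gets outvoted on false claims


response = "Paris is the capital of France. The Moon is made of cheese."
result = verify_response(response, [strict_model, keyword_model, gullible_model])
for claim, accepted in result.items():
    print(f"{'ACCEPTED' if accepted else 'REJECTED'}: {claim}")
```

The point of the sketch is that one unreliable verifier cannot push a false claim through on its own; the claim passes only when independent checkers agree.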

The lesson is that intelligence alone isn’t enough. In a world moving toward autonomous systems, verification infrastructure is as important as the algorithms themselves. Mira Network is quietly building this foundation, showing that reliable AI isn’t just about what it can do, but about what can be proven.

If AI can’t prove its answers, how much would you rely on it?

@Mira - Trust Layer of AI $MIRA #mira #Mira