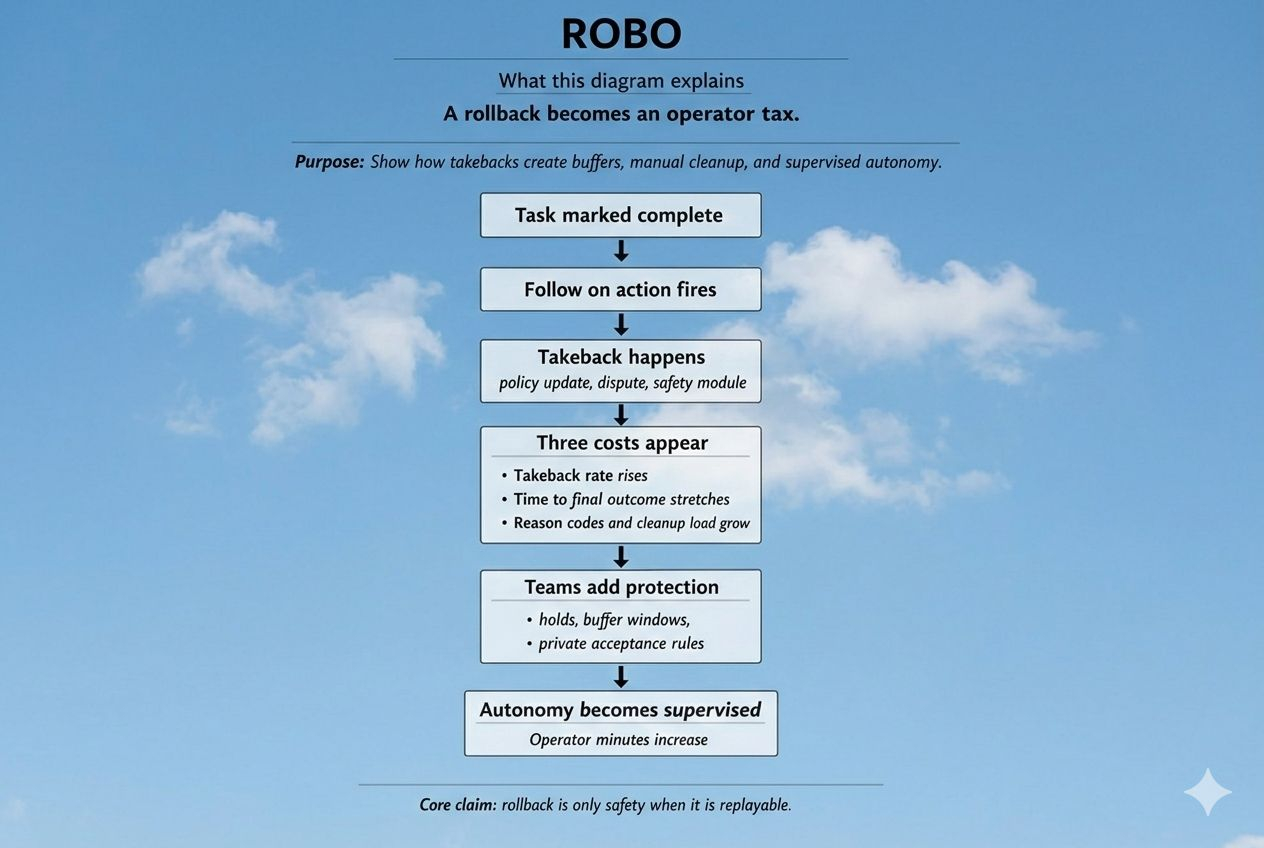

I learned to worry about rollbacks long after I learned to accept failure. Failures are loud and visible. Rollbacks are quiet. A task is marked complete, downstream actions trigger, permissions update — and then a late dispute, policy shift, or override reverses the outcome. By the time it’s undone, other systems have already moved.

That’s the real question around ROBO. Not whether agents can execute actions — but whether “undo” remains explainable once the network gets busy.

Rollback only counts as safety if it’s replayable.

In robotics and coordinated agent systems, undo is not abstract. It’s operational. A completed task activates the next step. An approval unlocks execution. A status change triggers cascading behavior. When that outcome is later reversed, the system doesn’t simply correct itself — it creates a gap that someone must reconcile.

And that someone is usually human.
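Here is one way to make “replayable” concrete. This minimal sketch assumes an append-only event log. Every reversal is written as a new event that must carry a reason code. State is rebuilt by replaying the log in order, and the tasks that were triggered downstream of a reversed outcome can be listed for reconciliation. The `Event` fields, the `complete`/`reverse` kinds, and both functions are hypothetical illustrations, not ROBO’s actual design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    # Hypothetical log entry for illustration; not ROBO's actual schema.
    seq: int
    kind: str                            # "complete" or "reverse"
    task_id: str
    triggered_by: Optional[str] = None   # upstream task whose completion unlocked this one
    reason_code: Optional[str] = None    # must be set on "reverse" events


def replay(log: list[Event]) -> dict[str, str]:
    """Rebuild task status by applying events in sequence order: same log, same result."""
    status: dict[str, str] = {}
    for ev in sorted(log, key=lambda item: item.seq):
        if ev.kind == "complete":
            status[ev.task_id] = "done"
        elif ev.kind == "reverse":
            # A reversal without a reason code is treated as invalid, not silently applied.
            if ev.reason_code is None:
                raise ValueError(f"reversal of {ev.task_id} has no reason code")
            status[ev.task_id] = f"reversed:{ev.reason_code}"
    return status


def needs_reconciliation(log: list[Event], reversed_task: str) -> list[str]:
    """Tasks triggered (directly or transitively) by a task that has since been reversed."""
    children: dict[str, list[str]] = {}
    for ev in log:
        if ev.kind == "complete" and ev.triggered_by:
            children.setdefault(ev.triggered_by, []).append(ev.task_id)
    found: list[str] = []
    stack = [reversed_task]
    while stack:
        for child in children.get(stack.pop(), []):
            if child not in found:
                found.append(child)
                stack.append(child)
    return found


# Example: an approval and a dispatch cascade from an inspection that is later reversed.
log = [
    Event(1, "complete", "inspect"),
    Event(2, "complete", "approve", triggered_by="inspect"),
    Event(3, "complete", "dispatch", triggered_by="approve"),
    Event(4, "reverse", "inspect", reason_code="DISPUTE_UPHELD"),
]
print(replay(log))                           # {'inspect': 'reversed:DISPUTE_UPHELD', 'approve': 'done', 'dispatch': 'done'}
print(needs_reconciliation(log, "inspect"))  # ['approve', 'dispatch']
```

Replaying the same log always yields the same statuses. The reversal of `inspect` surfaces `approve` and `dispatch` as the gap that someone still has to reconcile.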

I’m not here to crown or dismiss ROBO. No system proves itself until it survives a few ugly incident cycles. But real-world automation follows recognizable patterns: when rollback is not legible and replayable, autonomy erodes. Not because the system stops, but because nobody trusts “done” without waiting.

There are three signals that expose the true cost of rollback:

1. Takeback Rate

How often does the system reverse completed outcomes?

Takebacks don’t need to be frequent to be damaging — they only need to be unpredictable. If reversals cluster around busy windows, disputes, or policy updates, participants adapt. They delay. They buffer. They add confirmation layers. Autonomy turns into supervised automation.

Healthy systems show shrinking, explainable takeback rates over time. Unhealthy systems lock participants into a permanent defensive posture.
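Takeback rate is easy to measure once reversals are recorded at all. This is a minimal sketch under the assumption of a hypothetical `Outcome` record with a completion time and an optional reversal time. It computes the overall rate and a per-hour breakdown, which shows whether reversals cluster around busy windows.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Outcome:
    # Hypothetical record: one completed task and, if it was taken back, when.
    task_id: str
    completed_at: datetime
    reversed_at: Optional[datetime] = None


def takeback_rate(outcomes: list[Outcome]) -> float:
    """Share of completed outcomes that were later reversed."""
    if not outcomes:
        return 0.0
    reversed_count = sum(1 for o in outcomes if o.reversed_at is not None)
    return reversed_count / len(outcomes)


def takebacks_by_hour(outcomes: list[Outcome]) -> dict[int, float]:
    """Takeback rate grouped by hour of completion, to spot clustering in busy windows."""
    totals = Counter(o.completed_at.hour for o in outcomes)
    reversals = Counter(o.completed_at.hour for o in outcomes if o.reversed_at is not None)
    return {hour: reversals[hour] / totals[hour] for hour in sorted(totals)}


start = datetime(2024, 1, 1, 9)
outcomes = [
    Outcome("a", start),
    Outcome("b", start, reversed_at=start.replace(hour=11)),
    Outcome("c", start.replace(hour=14)),
    Outcome("d", start.replace(hour=14)),
]
print(takeback_rate(outcomes))      # 0.25
print(takebacks_by_hour(outcomes))  # {9: 0.5, 14: 0.0}
```

The overall rate alone hides the pattern that matters. The hourly split shows that every takeback in this toy data landed in the 9:00 window.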

2. Time to Final Outcome

Speed is not time to first success. It’s time until success becomes irreversible.

A fast result that may be revoked later isn’t speed — it’s deferred ambiguity. In cascading environments, rollback can invalidate downstream actions that already triggered. Teams respond by inserting holds and private acceptance windows.

If tail latency to finality compresses after incidents, the system is learning. If buffers become permanent, humans are quietly re-entering the loop.
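The gap between “first success” and “final” can be measured directly if each task records both moments. This sketch assumes hypothetical `TaskTiming` timestamps, including a `final_at` marking when the outcome could no longer be reversed. It also uses a simple nearest-rank percentile for the tail. The gap between the two tails is roughly the hidden buffer that downstream teams end up waiting out.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TaskTiming:
    # Hypothetical timestamps for one task; not an actual ROBO schema.
    started_at: datetime
    first_success_at: datetime  # when the task first reported "done"
    final_at: datetime          # when the outcome could no longer be reversed


def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile for p in (0, 100]."""
    if not values:
        raise ValueError("percentile of empty list")
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def tail_seconds(timings: list[TaskTiming], p: float = 99) -> tuple[float, float]:
    """Return (p-th percentile time to first success, p-th percentile time to final outcome)."""
    to_first = [(t.first_success_at - t.started_at).total_seconds() for t in timings]
    to_final = [(t.final_at - t.started_at).total_seconds() for t in timings]
    return percentile(to_first, p), percentile(to_final, p)


base = datetime(2024, 1, 1)
timings = [
    TaskTiming(base, base + timedelta(seconds=s), base + timedelta(seconds=f))
    for s, f in [(5, 60), (6, 65), (4, 3600)]
]
print(tail_seconds(timings))  # (6.0, 3600.0)
```

Every task “succeeds” within seconds, but the tail to finality is an hour. That hour is the wait that teams quietly turn into holds and acceptance windows.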

3. Operational Clarity

A rollback without a stable reason code isn’t safety — it’s mystery.

Mystery forces manual cleanup. Stable categories enable automation. When takebacks come with clear, consistent explanations and reconciliation time shrinks, automation deepens. When explanations drift and cleanup grows, babysitting replaces autonomy.
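Operational clarity can be tracked with two numbers: the share of takebacks carrying a stable reason code, and the cleanup time each category costs. This is a minimal sketch. The `STABLE_CODES` set, the `Takeback` record, and the practice of grouping uncoded reversals as `UNKNOWN` are all hypothetical choices, not codes that ROBO actually publishes.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

# Hypothetical documented reason codes; a real network would publish its own list.
STABLE_CODES = {"DISPUTE_UPHELD", "POLICY_UPDATE", "OPERATOR_OVERRIDE"}


@dataclass
class Takeback:
    task_id: str
    reason_code: Optional[str]  # None means the reversal arrived with no code at all
    reconcile_minutes: float    # human time spent cleaning up after this reversal


def coded_share(takebacks: list[Takeback]) -> float:
    """Fraction of takebacks whose reason code is in the stable set; the rest are 'mystery'."""
    if not takebacks:
        return 1.0  # no takebacks means nothing is unexplained
    coded = sum(1 for t in takebacks if t.reason_code in STABLE_CODES)
    return coded / len(takebacks)


def reconcile_minutes_by_code(takebacks: list[Takeback]) -> dict[str, float]:
    """Total cleanup minutes per reason code, with unknown or drifting codes grouped as 'UNKNOWN'."""
    totals: dict[str, float] = defaultdict(float)
    for t in takebacks:
        key = t.reason_code if t.reason_code in STABLE_CODES else "UNKNOWN"
        totals[key] += t.reconcile_minutes
    return dict(totals)


takebacks = [
    Takeback("a", "DISPUTE_UPHELD", 5.0),
    Takeback("b", None, 40.0),
    Takeback("c", "POLICY_UPDATE", 3.0),
    Takeback("d", "misc-v2", 25.0),  # an ad hoc code outside the stable set
]
print(coded_share(takebacks))                # 0.5
print(reconcile_minutes_by_code(takebacks))  # {'DISPUTE_UPHELD': 5.0, 'UNKNOWN': 65.0, 'POLICY_UPDATE': 3.0}
```

In this toy data, half the reversals are unexplained, and they account for most of the cleanup minutes. That is exactly the pattern where babysitting replaces autonomy.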

This is what markets often misprice. Reversibility is treated as safety by default. In production systems, rollback is only safe when it is legible, auditable, and fast to reconcile. Otherwise it is delayed failure with a wider blast radius.

Only at the end does the token enter the conversation. A token like $ROBO doesn’t prevent rollbacks. But it can fund the infrastructure that makes them safe — dispute resolution that closes quickly, auditable policy updates, stable reason codes, and tooling that allows deterministic replay.

If ROBO ever claims that value accrues from real-world agent usage, rollback must become cheap enough that teams don’t need to babysit it.

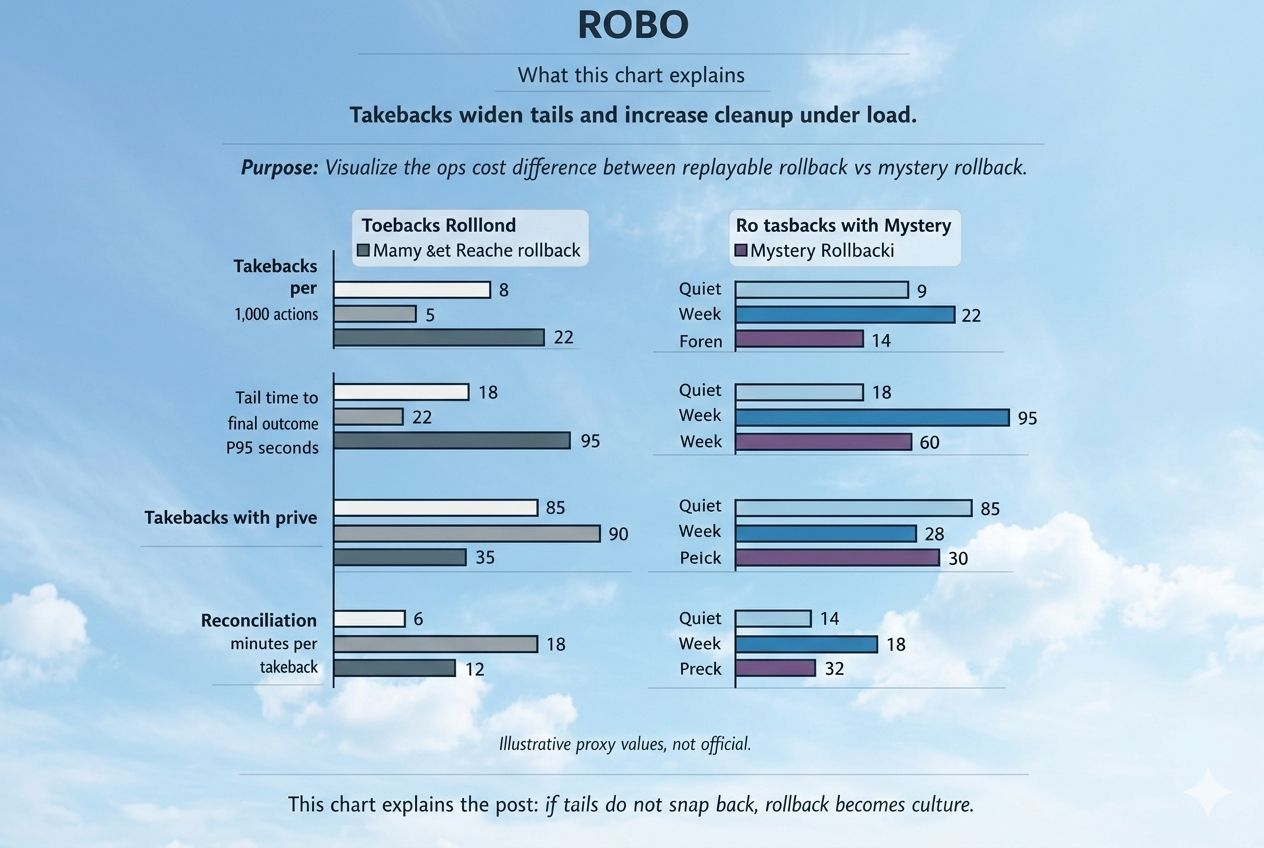

The simplest test is this:

Compare a quiet week with an incident week. Watch takeback rate, tail time to final outcome, reason-code stability, and reconciliation minutes.

In healthy systems, incidents leave scars that heal. Tails snap back. Cleanup gets faster.

In unhealthy systems, buffers remain, manual intervention grows, and autonomy slowly turns back into operations.
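The quiet-week versus incident-week test can be sketched as a simple regression check on the four signals, run once on the incident week and again on the week after it, to see whether the scars heal. The `WeekMetrics` fields, the 10% relative tolerance, and the sample numbers are illustrative assumptions, not figures from ROBO or Fabric Foundation.

```python
from dataclasses import dataclass, fields


@dataclass
class WeekMetrics:
    # The four signals above, aggregated over one week (hypothetical schema).
    takeback_rate: float      # reversed / completed
    p99_final_seconds: float  # tail time to final outcome
    coded_share: float        # share of takebacks with a stable reason code
    reconcile_minutes: float  # total human cleanup time


# For coded_share, higher is better; for the other three, lower is better.
HIGHER_IS_BETTER = {"coded_share"}


def regressions(baseline: WeekMetrics, other: WeekMetrics, tolerance: float = 0.10) -> list[str]:
    """Names of metrics in `other` that are worse than `baseline` by more than `tolerance` (relative)."""
    worse = []
    for f in fields(WeekMetrics):
        base = getattr(baseline, f.name)
        value = getattr(other, f.name)
        if f.name in HIGHER_IS_BETTER:
            if value < base * (1 - tolerance):
                worse.append(f.name)
        elif value > base * (1 + tolerance):
            worse.append(f.name)
    return worse


quiet = WeekMetrics(takeback_rate=0.01, p99_final_seconds=120.0, coded_share=0.95, reconcile_minutes=30.0)
incident = WeekMetrics(takeback_rate=0.04, p99_final_seconds=900.0, coded_share=0.70, reconcile_minutes=240.0)
week_after = WeekMetrics(takeback_rate=0.01, p99_final_seconds=130.0, coded_share=0.93, reconcile_minutes=45.0)

print(regressions(quiet, incident))    # ['takeback_rate', 'p99_final_seconds', 'coded_share', 'reconcile_minutes']
print(regressions(quiet, week_after))  # ['reconcile_minutes']
```

Here the tail snapped back after the incident, but cleanup time did not. On this reading, that is the early sign of manual intervention settling in.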

@Fabric Foundation #Robo $ROBO