A common mistake in AI product design is assuming that a single, stronger model will solve reliability. That assumption breaks down as soon as model output triggers financial, legal, or operational actions.

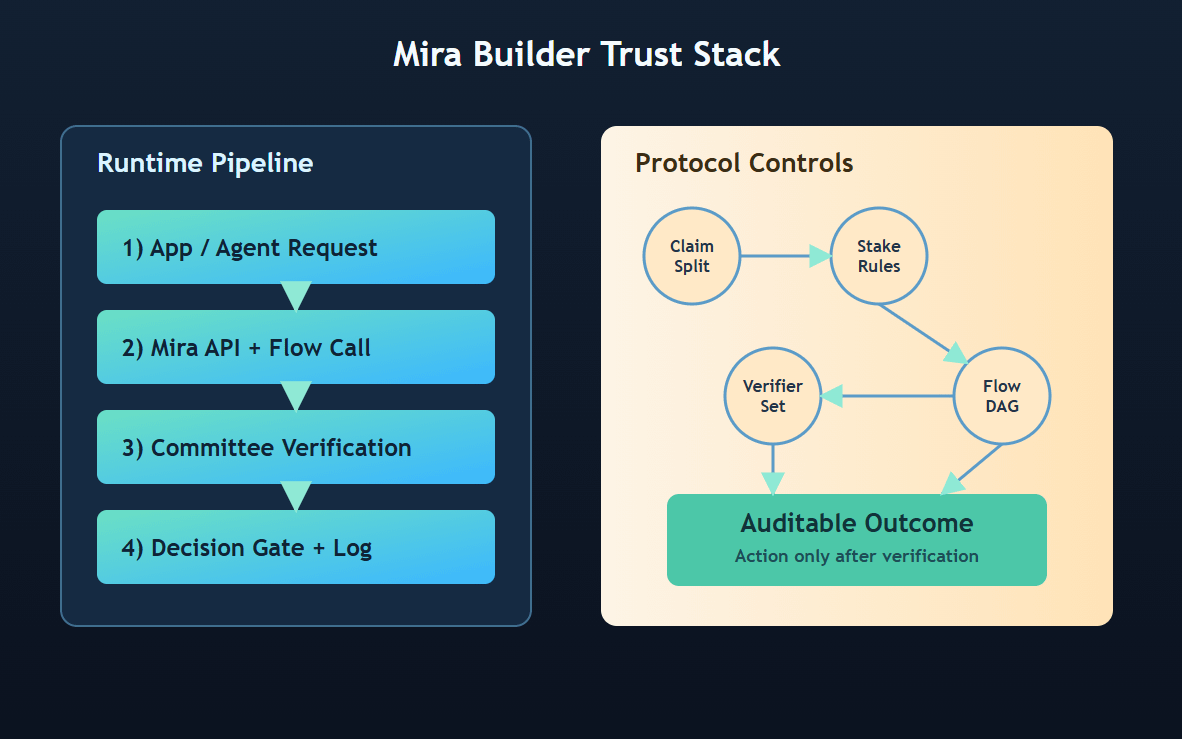

Mira's Flow architecture points to a better pattern: reliability-by-design. The docs distinguish Elemental flows (atomic units) from Compound flows (pipelines structured as directed acyclic graphs, or DAGs). Compound flows can include reusable subflows as well as tool nodes that call external APIs. This matters because it makes verification programmable: teams can decide where claims are decomposed, where validator committees are invoked, how disagreements are handled, and when a response is blocked from execution.
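
To make the idea of programmable verification concrete, here is a minimal Python sketch of a compound pipeline with the stages described above: decomposition, a committee vote, and a blocking rule. It is not Mira's SDK. Every name in it (`decompose`, `committee_vote`, `run_compound_flow`, `Verdict`) is hypothetical, the sentence-splitting decomposition is deliberately naive, and the two-thirds agreement threshold is an assumed policy, not a documented default.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical type: a validator takes one claim and returns True if it approves.
Validator = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int

    @property
    def agreement(self) -> float:
        # Fraction of the committee that approved this claim.
        return self.approvals / self.total


def decompose(response: str) -> List[str]:
    """Elemental step: split a response into atomic claims (naive sentence split)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def committee_vote(claim: str, validators: List[Validator]) -> Verdict:
    """Elemental step: ask every validator in the committee about one claim."""
    if not validators:
        raise ValueError("a committee needs at least one validator")
    approvals = sum(1 for validate in validators if validate(claim))
    return Verdict(claim, approvals, len(validators))


def run_compound_flow(
    response: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # assumed policy, chosen for illustration
) -> Dict[str, object]:
    """Compound step: decompose, vote on each claim, then decide whether to execute."""
    verdicts = [committee_vote(claim, validators) for claim in decompose(response)]
    # Disagreement handling: any claim below the threshold blocks execution.
    blocked = [v.claim for v in verdicts if v.agreement < threshold]
    return {"execute": not blocked, "blocked_claims": blocked, "verdicts": verdicts}
```

In a real pipeline the validators would be independent models or services rather than local functions. The point of the sketch is that the threshold and the blocking rule are explicit, testable code instead of behavior hidden inside one model.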

The API layer (`https://api.mira.network/v1`) then acts as the bridge between product code and verification infrastructure. Instead of building isolated guardrails for each feature, teams can standardize trust policies across use cases. That is a major architectural advantage for agent-based systems, where one unchecked answer can propagate into many downstream actions.
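
One way to picture standardized trust policies is a single gate function that every feature calls, with the per-use-case rules kept in one table. The sketch below assumes nothing about Mira's actual endpoints or response format. The `verify` callable is a stand-in for whatever call a team makes against the API, and the policy names, thresholds, and return values are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Base URL as given in the docs; no endpoint paths are assumed in this sketch.
MIRA_API_BASE = "https://api.mira.network/v1"


@dataclass(frozen=True)
class TrustPolicy:
    min_agreement: float    # hypothetical: minimum verification score to proceed
    block_on_failure: bool  # hypothetical: block outright, or only flag for review


# One shared policy table instead of ad hoc guardrails per feature.
POLICIES: Dict[str, TrustPolicy] = {
    "payments": TrustPolicy(min_agreement=0.9, block_on_failure=True),
    "support_chat": TrustPolicy(min_agreement=0.6, block_on_failure=False),
}

# Stand-in for a verification call; returns a score between 0.0 and 1.0.
VerifyFn = Callable[[str], float]


def gate(use_case: str, output: str, verify: VerifyFn) -> str:
    """Apply the shared policy for a use case to one model output."""
    policy = POLICIES[use_case]
    score = verify(output)
    if score >= policy.min_agreement:
        return "execute"
    return "block" if policy.block_on_failure else "flag_for_review"
```

Because the verifier is injected, the same gate can be exercised in tests with a stub and wired to the real verification call in production, which keeps policy changes in one place.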

Mira's whitepaper also treats incentives as part of correctness: staking and delayed rewards are designed to discourage low-quality verification over time. The protocol is therefore not only about model quality; it is also about incentive quality.
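
The incentive argument can be illustrated with a toy model. This is not the mechanism from Mira's whitepaper: the escrow-style `pending` balance, the `settle` step, the forfeiture rule, and the 25% stake penalty are all assumptions chosen to show why delaying rewards gives a network time to catch poor verification before paying for it.

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    stake: float          # funds the validator has locked up
    pending: float = 0.0  # rewards earned but not yet released
    balance: float = 0.0  # rewards released after the delay


def record_verification(account: ValidatorAccount, reward: float) -> None:
    """Credit a reward to escrow instead of paying it out immediately."""
    account.pending += reward


def settle(
    account: ValidatorAccount,
    was_accurate: bool,
    penalty_fraction: float = 0.25,  # assumed value, for illustration only
) -> None:
    """After the delay, release pending rewards or penalize the stake."""
    if was_accurate:
        account.balance += account.pending
    else:
        # Pending rewards are forfeited and part of the stake is lost.
        account.stake -= account.stake * penalty_fraction
    account.pending = 0.0
```

Under these assumptions, a validator that answers carelessly earns nothing from that round and shrinks its own stake, so careful verification becomes the better strategy over repeated rounds.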

The current docs still describe the network tooling as beta, so stability and throughput remain key milestones. But the core thesis is strong: compound verification workflows can make AI outputs auditable before they become costly decisions.