I looked into Mira because I’ve slowly stopped trusting AI outputs by default.

Not in the loud, dystopian sense. In the quiet, operational sense. I’ve seen models hallucinate financial figures that looked legitimate. I’ve seen citations invented with convincing formatting. And I’ve seen confident answers built on weak assumptions pass review simply because they sounded certain. As AI systems move closer to autonomy, those errors stop being tolerable imperfections. They become liabilities.

That’s where Mira reframes the problem.

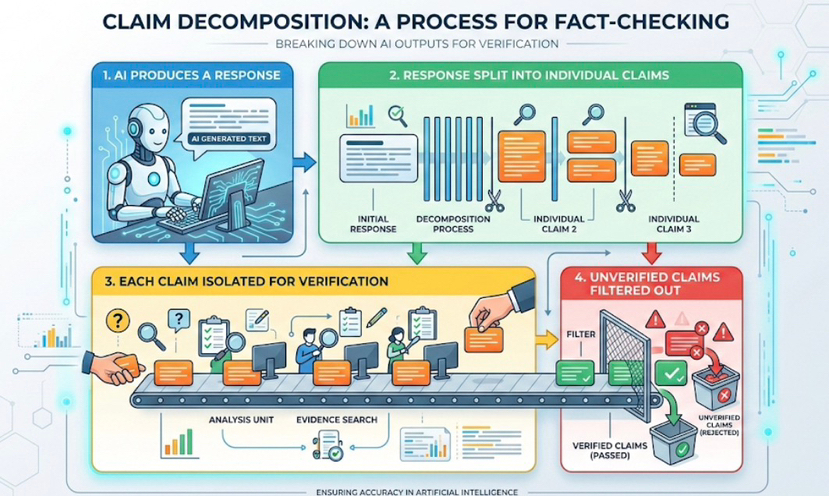

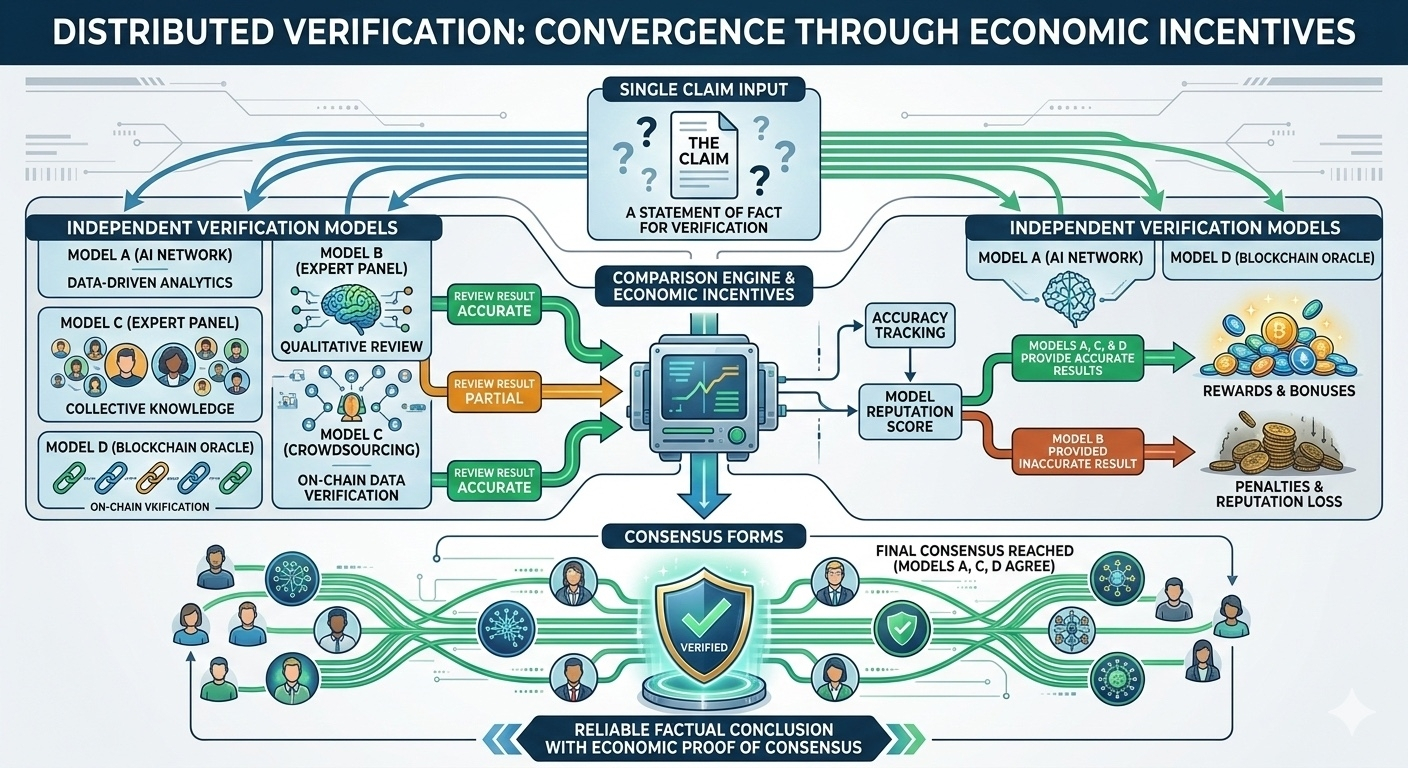

Instead of treating an AI response as a single, indivisible output, Mira decomposes it into smaller claims. Each claim is verified independently across a network of models. A claim survives not because of any one model’s authority or size, but because independent verifiers, each with an economic incentive to be accurate, converge on it.
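To make the idea concrete for myself, I sketched it in a few lines of Python. This is a toy under loud assumptions, not Mira's actual protocol: the sentence-based splitter, the `Verdict` type, the `quorum` value, and the set-lookup "verifiers" are all hypothetical stand-ins, and the economic incentives are not modeled at all. It only shows the shape of the approach: split a response into claims, let several independent checkers vote on each one, and accept a claim only when enough of them agree.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Set

# Hypothetical: a verifier is any callable that says whether it supports a claim.
Verifier = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    votes_for: int
    total: int
    accepted: bool


def split_into_claims(response: str) -> List[str]:
    """Naive decomposition: one claim per sentence (an assumption for this sketch)."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def verify_response(response: str, verifiers: List[Verifier],
                    quorum: float = 0.66) -> List[Verdict]:
    """Each claim is voted on independently; it passes only if the quorum is met."""
    verdicts = []
    for claim in split_into_claims(response):
        votes = sum(1 for check in verifiers if check(claim))
        accepted = votes / len(verifiers) >= quorum
        verdicts.append(Verdict(claim, votes, len(verifiers), accepted))
    return verdicts


def make_verifier(known_facts: Set[str]) -> Verifier:
    """Toy verifier: supports a claim only if it is in its own set of known facts."""
    return lambda claim: claim in known_facts


real = "Revenue grew 12% in Q3."
invented = "The company has 40 offices."

# Two verifiers know only the real figure; the third wrongly "believes" both.
verifiers = [
    make_verifier({real}),
    make_verifier({real}),
    make_verifier({real, invented}),
]

verdicts = verify_response(f"{real} {invented}", verifiers)
for v in verdicts:
    print(f"{v.votes_for}/{v.total} accepted={v.accepted} :: {v.claim}")
```

In this toy run the real figure is supported by all three verifiers, while the invented one is supported only by the single faulty verifier and falls below the quorum. No one model had to be "right" on its own authority.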

This subtle shift changes how trust works.

We’ve normalized AI as a black box. It speaks, and we decide whether to believe it. Mira treats outputs as assertions that must earn validity. The unit of trust isn’t the model—it’s the claim. That feels less like generation and more like audit.

I tried to pressure-test this idea mentally.

Imagine an AI summarizing market data or regulatory language. Normally, one hallucinated number can distort the entire conclusion. With Mira’s approach, numerical claims are cross-checked by independent agents. Not because one model is “better,” but because multiple incentivized nodes converge on the same result.

What stood out to me is that Mira isn’t trying to make AI smarter.

It’s trying to make AI accountable. Larger models still hallucinate. Better training still misfires. Intelligence alone doesn’t solve reliability. Verification adds discipline where scale fails.

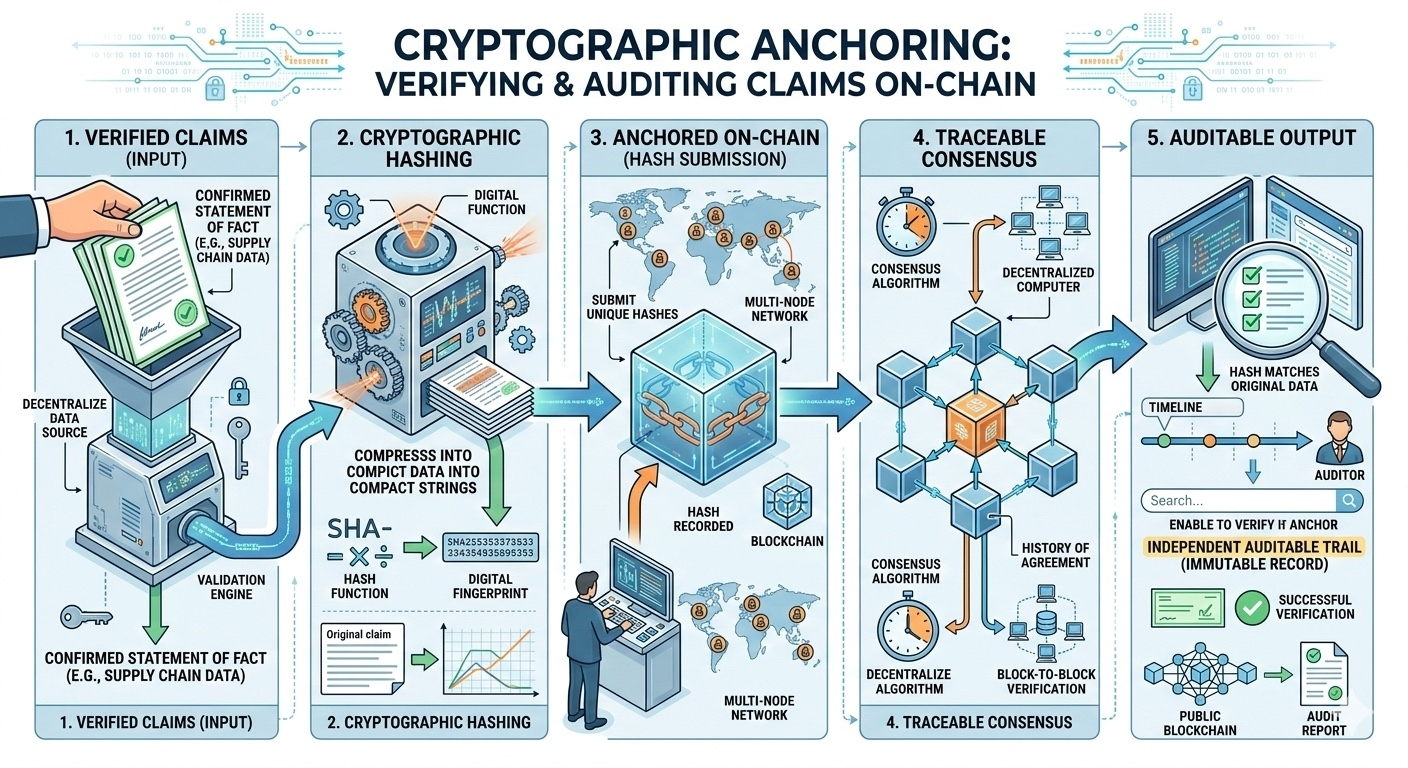

And the blockchain layer isn’t decorative.

Verified claims are cryptographically anchored, leaving a visible, traceable record of consensus. You’re not asked to trust that something was checked—you can see that it was. Trust becomes inspectable.
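Here is a minimal sketch of what "inspectable" can mean in practice, again with assumptions stated up front: the record fields, `record_digest`, and `chain_digest` are names I made up for illustration, and this is not Mira's on-chain format. It uses only Python's standard `hashlib` and `json`. The point is that once a verification record is hashed and the hash is published, anyone holding the record can recompute the digest and detect tampering.

```python
import hashlib
import json


def record_digest(claim: str, votes_for: int, total: int) -> str:
    """SHA-256 over a canonical JSON form of a (hypothetical) verification record."""
    record = {"claim": claim, "votes_for": votes_for, "total": total}
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def chain_digest(previous: str, current: str) -> str:
    """Link a record to the one before it, so history cannot be silently reordered."""
    return hashlib.sha256((previous + current).encode("utf-8")).hexdigest()


original = record_digest("Revenue grew 12% in Q3.", votes_for=3, total=3)
tampered = record_digest("Revenue grew 12% in Q3.", votes_for=2, total=3)

# Changing even one field of the record yields a different digest.
print(original != tampered)

# An all-zero starting value stands in for "no previous record" in this sketch.
genesis = "0" * 64
head = chain_digest(genesis, original)
```

Anchoring a digest like `head` on a public ledger is what would let a third party check the record later without trusting whoever stored it.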

There are trade-offs. Verification adds latency, and checking every claim across several models costs more than a single pass. Speed competes with certainty. But in high-stakes environments, that friction might be the point.

Mira doesn’t promise perfect AI.

It promises AI you don’t have to take on faith.

@Mira - Trust Layer of AI | $MIRA | #Mira