When I first started thinking about Mira Network, I wasn’t thinking about technology in a cold or technical way. I was thinking about trust. I was thinking about those small moments when you ask an AI something important and a quiet doubt settles in. Is this really correct? Can I rely on it? That feeling is where this entire project begins. Mira Network was created around a simple but powerful belief: intelligent systems should not just generate answers, they should be able to prove them. In a world where artificial intelligence is growing faster than our ability to question it, that belief feels deeply human.

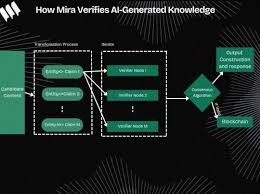

At its core, Mira Network works by refusing to accept a single answer as final truth. Instead of treating an AI response as one solid block of information, the system breaks it apart into smaller claims. Each claim becomes something that can be checked, reviewed, and validated independently. I picture it as taking apart a machine piece by piece to make sure every gear fits correctly. Once those claims are separated, they are distributed across a network of independent verifiers. These verifiers can be different AI models or specialized systems designed to evaluate accuracy. They do not all reason in the same way, and that diversity is intentional. The goal is to reduce the risk of shared blind spots or common biases.

The network then gathers the responses from these verifiers and looks for agreement. If multiple independent systems confirm a claim, it gains credibility. If disagreement appears, the claim can be flagged for further review. Verification becomes a process of structured doubt rather than blind acceptance. What I find meaningful is that the system doesn’t rely on one central authority to decide what is true. Instead, it uses collective validation. The shift is from trust in a single source to trust in a process that can be inspected and repeated.
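To make the agreement step concrete, here is a minimal sketch of how votes on a single claim might be combined. This is my own illustration, not Mira’s actual protocol: the `aggregate` function, the `ClaimResult` type, and the 0.67 threshold are all assumptions I chose for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ClaimResult:
    claim: str
    verdict: str      # "confirmed", "rejected", or "flagged"
    agreement: float  # share of verifiers behind the majority vote


def aggregate(claim: str, votes: list[bool], threshold: float = 0.67) -> ClaimResult:
    """Combine independent verifier votes on one claim.

    `threshold` is a hypothetical supermajority level, not a value
    taken from Mira Network.
    """
    if not votes:
        # No verifier responded, so nothing can be concluded.
        return ClaimResult(claim, "flagged", 0.0)
    majority_vote, majority_count = Counter(votes).most_common(1)[0]
    agreement = majority_count / len(votes)
    if agreement < threshold:
        # Too much disagreement: send the claim to further review.
        return ClaimResult(claim, "flagged", agreement)
    verdict = "confirmed" if majority_vote else "rejected"
    return ClaimResult(claim, verdict, agreement)
```

Calling `aggregate("Water boils at 100 °C at sea level", [True, True, True])` yields a confirmed claim, while an evenly split vote is flagged rather than forced into an answer. That third outcome is the point: disagreement is surfaced instead of hidden.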

The design decisions behind Mira Network reflect careful thinking about incentives and responsibility. Verification is not just a technical step; it is something participants are encouraged to perform honestly. Operators who contribute to the network are expected to act accurately because their reputation and resources are connected to the quality of their work. If someone attempts to manipulate outcomes or act carelessly, the system is structured to detect and penalize that behavior. That alignment between incentive and honesty is not accidental. It comes from understanding that systems behave according to the motivations built into them.
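One way to picture that alignment is a simple settlement step after each verified claim. The sketch below is hypothetical: the `Operator` type, the `settle` function, and the `reward` and `slash_rate` values are assumptions made for illustration, not published Mira parameters.

```python
from dataclasses import dataclass


@dataclass
class Operator:
    name: str
    stake: float  # resources the operator has put at risk


def settle(
    operators: list[Operator],
    votes: dict[str, bool],
    consensus: bool,
    reward: float = 1.0,
    slash_rate: float = 0.1,
) -> None:
    """Reward operators whose vote matched consensus and slash the rest.

    `reward` and `slash_rate` are made-up illustrative values.
    """
    for op in operators:
        if votes[op.name] == consensus:
            op.stake += reward
        else:
            # Lose a fraction of the stake for voting against consensus.
            op.stake -= op.stake * slash_rate
```

A real design would need more care than this, for example distinguishing an honest minority view from manipulation, but the basic shape is the same: careless or dishonest verification costs something.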

Another important design choice is scalability. The team recognized early that not every piece of information requires the same level of scrutiny. If a casual conversation needs light verification, the process can remain efficient and fast. But if the content relates to sensitive areas like healthcare, finance, or governance, deeper verification layers can be activated. If everything were treated equally, the system would either become too slow or too shallow. Balancing depth and speed is part of what makes the architecture practical rather than theoretical.
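As a rough sketch of that trade-off, verification depth could be chosen per domain. Everything named here (`Policy`, `policy_for`, the verifier counts, the thresholds) is an assumption for illustration; only the list of sensitive areas comes from the paragraph above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    verifiers: int    # how many independent verifiers to consult
    threshold: float  # agreement share required to accept a claim


# Hypothetical settings: light checks stay fast, deep checks are stricter.
LIGHT = Policy(verifiers=3, threshold=0.6)
DEEP = Policy(verifiers=9, threshold=0.9)

SENSITIVE_DOMAINS = {"healthcare", "finance", "governance"}


def policy_for(domain: str) -> Policy:
    """Pick a verification depth based on how sensitive the content is."""
    return DEEP if domain.lower() in SENSITIVE_DOMAINS else LIGHT
```

With this, `policy_for("healthcare")` asks for more verifiers and stronger agreement than `policy_for("casual chat")`, so cost scales with the stakes rather than being uniform.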

When measuring progress, I’m not just looking at market excitement or token activity. What truly matters are usage patterns and reliability improvements. How many claims are being verified daily? How often do verified outputs remain accurate when reviewed later? How diverse are the verifier models participating in the network? These are the questions that reveal whether the system is actually reducing errors or simply creating noise. Adoption by developers is another strong indicator. If applications begin integrating Mira’s verification layer into their products, it shows that the solution solves a real problem.

At the same time, it would be unrealistic to ignore the risks. Any system built around verification can face coordination challenges. If too many verifiers rely on similar training data or similar assumptions, consensus might reflect shared bias instead of truth. There is also the economic dimension. If incentives are not perfectly balanced, participants might try to optimize for rewards rather than accuracy. Over time, that could weaken trust instead of strengthening it. These risks matter because the entire mission revolves around credibility. If credibility slips, the foundation shakes.

There is also the challenge of adoption. Even the best verification system only works if people choose to use it. Developers must see value in adding an extra layer of validation. Enterprises must believe that transparent verification improves their workflows. We’re seeing growing awareness around AI reliability, especially in critical sectors, but awareness does not automatically translate into integration. Education, community engagement, and real-world demonstrations will shape whether Mira Network becomes a foundational layer or remains a niche solution.

What inspires me most is the long-term vision. The team is not simply trying to patch AI errors. They’re imagining a future where verification becomes a natural part of how intelligent systems operate. If an AI produces a recommendation, you should be able to see how it was validated. If a decision impacts lives, there should be a traceable path explaining why it was accepted. That transparency could reshape public confidence in advanced technologies. Confidence becomes less about trusting a machine blindly and more about trusting a structured process.

I’m also thinking about how this approach encourages collaboration instead of competition among models. Instead of one dominant AI trying to prove superiority, multiple systems contribute to a shared validation framework. That cooperative structure feels healthier for long-term innovation. It acknowledges that no single model is perfect and that collective intelligence, when guided correctly, can reduce mistakes.

Over time, Mira Network could evolve beyond verifying text-based claims. As AI systems expand into robotics, autonomous agents, and decision-support platforms, the need for provable outputs will only grow. We’re seeing industries begin to demand higher accountability from automated systems. Regulatory bodies are asking tougher questions. Users are becoming more cautious. In that environment, a verification layer is not a luxury. It becomes essential infrastructure.

If the project continues to refine its verification models, strengthen economic alignment, and expand developer tools, it has the potential to shape how AI systems are trusted globally. Growth will likely come gradually. Trust is not built overnight. It is built through repeated proof of reliability. Each verified claim, each successful integration, and each transparent audit contributes to that foundation.

When I step back and think about it in simple human terms, Mira Network feels like an attempt to restore something fundamental. It acknowledges that intelligence without accountability creates uncertainty. By embedding verification into the lifecycle of AI outputs, it tries to transform uncertainty into measurable confidence. They’re not claiming perfection. Instead, they’re building a framework where imperfection can be detected and corrected.

And maybe that is the most human part of all. We don’t expect flawless systems. We expect systems that can admit mistakes and show us how they are resolved. If Mira Network succeeds, it will not be because it eliminated every error. It will be because it made errors visible and manageable. That shift alone could redefine how we interact with intelligent machines in the years ahead.

In the end, I’m left with a quiet sense of hope. Not because technology is advancing, but because accountability is being woven into its growth. If we continue demanding systems that can prove themselves rather than simply impress us, we move toward a future where innovation and responsibility grow side by side. Mira Network is one step in that direction, and its journey reminds us that trust, even in a digital world, is still a deeply human pursuit.
