As artificial intelligence becomes embedded in finance, healthcare, research, and autonomous systems, reliability remains one of its biggest weaknesses. Mira Network, which describes itself as the trust layer of AI, introduces a decentralized verification layer designed to reduce hallucinations, bias, and the risks of unchecked automation by turning AI outputs into cryptographically verified information through blockchain-based consensus.

Rather than trusting a single model or centralized authority, Mira decomposes complex AI responses into smaller, verifiable claims. These claims are then validated across a distributed network of independent AI models, creating a trust-minimized system where accuracy is enforced through economic incentives and transparent consensus.

Core Mechanism and Technology

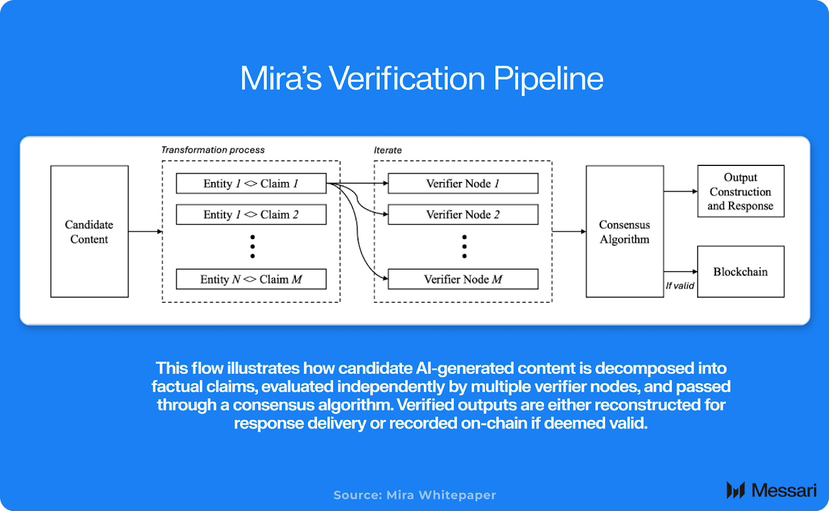

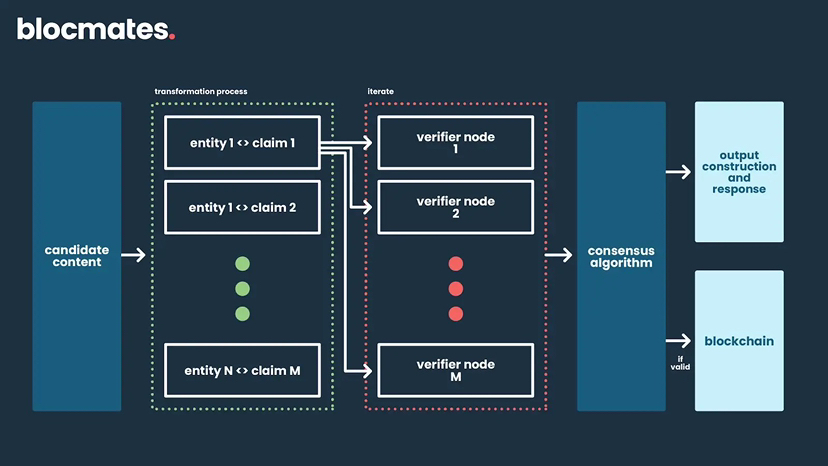

Mira’s architecture begins by breaking AI-generated content into atomic factual statements. Each statement is evaluated independently by a decentralized network of verifier nodes running diverse AI systems.
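The decomposition step can be illustrated with a deliberately naive sketch. Mira’s actual content transformation is not described here and is presumably model-driven. The `Claim` type and `decompose` function below are hypothetical, and simple rule-based splitting on sentence boundaries and semicolons stands in for it.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One atomic statement to be verified independently (hypothetical type)."""
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Naive stand-in for content transformation: one claim per sentence or clause.

    Splits on whitespace that follows sentence-ending punctuation and on
    semicolons. A production system would use a model, not a regex.
    """
    parts = re.split(r"(?<=[.!?])\s+|;\s*", output.strip())
    claims: list[Claim] = []
    for part in parts:
        text = part.strip().rstrip(".!?").strip()
        if text:  # skip empty fragments left over from splitting
            claims.append(Claim(claim_id=len(claims), text=text))
    return claims
```

For example, `decompose("The Earth orbits the Sun. Water boils at 100 C at sea level; the Moon is made of cheese.")` yields three separate claims, so the false third statement can be rejected without discarding the two true ones.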

The verifier nodes assess each claim and vote on its validity. Once a supermajority consensus is reached, the claim is either approved or rejected. The process combines ensemble AI logic with blockchain coordination, allowing verification without retraining base models or relying on centralized moderation.
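The voting step reduces to a threshold check per claim. The sketch below assumes a two-thirds supermajority and a hypothetical `tally` function that receives one boolean vote per verifier node. Mira’s actual threshold is not stated here, and the `NO_CONSENSUS` outcome for split votes is likewise an assumption.

```python
from enum import Enum
from fractions import Fraction


class Verdict(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"
    NO_CONSENSUS = "no_consensus"  # assumed outcome when neither side reaches the threshold


def tally(votes: dict[str, bool], threshold: Fraction = Fraction(2, 3)) -> Verdict:
    """Resolve one claim from verifier votes (node id -> True if judged valid).

    The threshold is assumed to be above 1/2, so at most one side can reach it.
    Fractions are used so that exactly two-thirds counts as a supermajority.
    """
    if not votes:
        return Verdict.NO_CONSENSUS
    total = len(votes)
    valid = sum(votes.values())  # True counts as 1, False as 0
    if Fraction(valid, total) >= threshold:
        return Verdict.APPROVED
    if Fraction(total - valid, total) >= threshold:
        return Verdict.REJECTED
    return Verdict.NO_CONSENSUS
```

With three verifiers, two "valid" votes approve a claim, while a 1–1 split between two verifiers stays unresolved.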

Key components include:

• Content Transformation: AI outputs are split into structured entity-claim pairs for granular validation.

• Distributed Verifier Network: Multiple independent models evaluate claims to reduce single-point bias.

• Cryptoeconomic Incentives: Participants stake the native token, with slashing mechanisms penalizing dishonest behavior.

• On-Chain Attestation: Verified results are recorded immutably, creating auditable proof of reliability.
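Two of these components, slashing and on-chain attestation, can be modeled in a few lines. This is a toy sketch, not Mira’s contracts. The 10% slash rate (`SLASH_BPS`), the `settle` function, and the `Ledger` class are hypothetical, and a SHA-256 hash chain stands in for a real blockchain to show why tampering with a recorded result is detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field

SLASH_BPS = 1_000  # hypothetical slash rate: 1,000 basis points = 10% of stake
GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def settle(stakes: dict[str, int], votes: dict[str, bool], approved: bool) -> dict[str, int]:
    """Return updated stakes, slashing every node whose vote contradicts the outcome.

    Stakes are integer token units; nodes that did not vote are left untouched.
    """
    return {
        node: (stake - (stake * SLASH_BPS) // 10_000)
        if node in votes and votes[node] != approved
        else stake
        for node, stake in stakes.items()
    }


@dataclass
class Ledger:
    """Toy append-only hash chain standing in for on-chain attestation."""
    entries: list[dict] = field(default_factory=list)

    def attest(self, claim: str, approved: bool) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"claim": claim, "approved": approved, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("claim", "approved", "prev")}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

Because each entry commits to the hash of the one before it, editing any recorded result makes `verify` fail from that point on, which is the property that makes attestations auditable.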

Since launching its mainnet in September 2025, Mira has expanded integrations across decentralized infrastructure providers, positioning itself as middleware between AI systems and blockchain applications.

Benefits for AI Applications

Mira’s decentralized verification layer aims to make AI more suitable for high-stakes use cases.

• Reduced Hallucinations: Consensus-driven validation is designed to lower factual error rates, improving reliability in financial modeling, legal automation, and medical analysis.

• Bias Mitigation: A diverse network of verifier models helps balance systemic biases present in individual AI systems.

• Trustless Scalability: Developers can deploy autonomous agents without relying on centralized trust assumptions.

• Aligned Incentives: Token-based staking is designed to give validators an economic motive to maintain accuracy and integrity.
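The intuition behind reduced hallucinations and bias mitigation can be checked with a short calculation. Assume, purely for illustration, that each verifier independently approves a false claim with probability `p_err` and that approval requires a two-thirds supermajority of `n` verifiers. The chance of a false approval is then a binomial tail. The function name `p_false_approval` and the independence assumption are hypothetical; correlated models (for example, ones trained on overlapping data) would erode the benefit, which is why verifier diversity matters.

```python
from fractions import Fraction
from math import ceil, comb


def p_false_approval(n: int, p_err: float, threshold: Fraction = Fraction(2, 3)) -> float:
    """Probability that at least a `threshold` share of n verifiers wrongly
    approve a false claim, assuming each errs independently with probability p_err."""
    k = ceil(threshold * n)  # minimum number of approving votes needed
    # Sum the binomial probabilities of exactly i wrong approvals, for i = k..n.
    return sum(
        comb(n, i) * p_err**i * (1 - p_err) ** (n - i)
        for i in range(k, n + 1)
    )
```

With `p_err = 0.1`, a single model approves a false claim 10% of the time, while three independent verifiers under a two-thirds rule do so with probability 3 × 0.01 × 0.9 + 0.001 = 0.028.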

By converting output validation into a market-driven process, Mira shifts AI risk from blind trust to measurable, incentivized verification.

Background and Tokenomics

Mira Network was founded to address AI’s trust deficit at scale. The native MIRA token underpins staking, governance, and transaction fees within the ecosystem. Exchange listings, including availability on major platforms such as Binance, have expanded liquidity and visibility.

Tokenomics are structured to support long-term validator participation and ecosystem sustainability as adoption grows across AI-driven sectors.

Industry Implications and Reactions

As autonomous AI agents increasingly manage capital, execute trades, and interact with real-world systems, verification infrastructure becomes critical. Without safeguards, hallucinations could translate directly into financial losses or systemic risks.

Industry observers describe Mira as foundational infrastructure for the emerging AI economy. By enabling verifiable intelligence, it provides a safeguard layer that allows autonomy without sacrificing accountability.

Challenges remain, including scaling verifier participation and aligning with evolving regulatory frameworks. However, Mira’s decentralized design offers resilience and neutrality that centralized verification models cannot easily replicate.

As AI systems move from assistive tools to autonomous decision-makers, trust will become the defining factor in adoption. Mira Network positions itself as a foundational trust layer for the next generation of intelligent applications.

If its decentralized verification model continues to mature, it could play a pivotal role in transforming AI from probabilistic output generators into systems capable of delivering provable, auditable intelligence across industries in 2026 and beyond.