Most blockchains chase flashy innovations like ultra-fast transactions or zero-knowledge proofs. Mira Network feels like it wants to quietly disappear into the background, earning our indifference by making AI predictably accurate: like the air we breathe, essential but unnoticed.

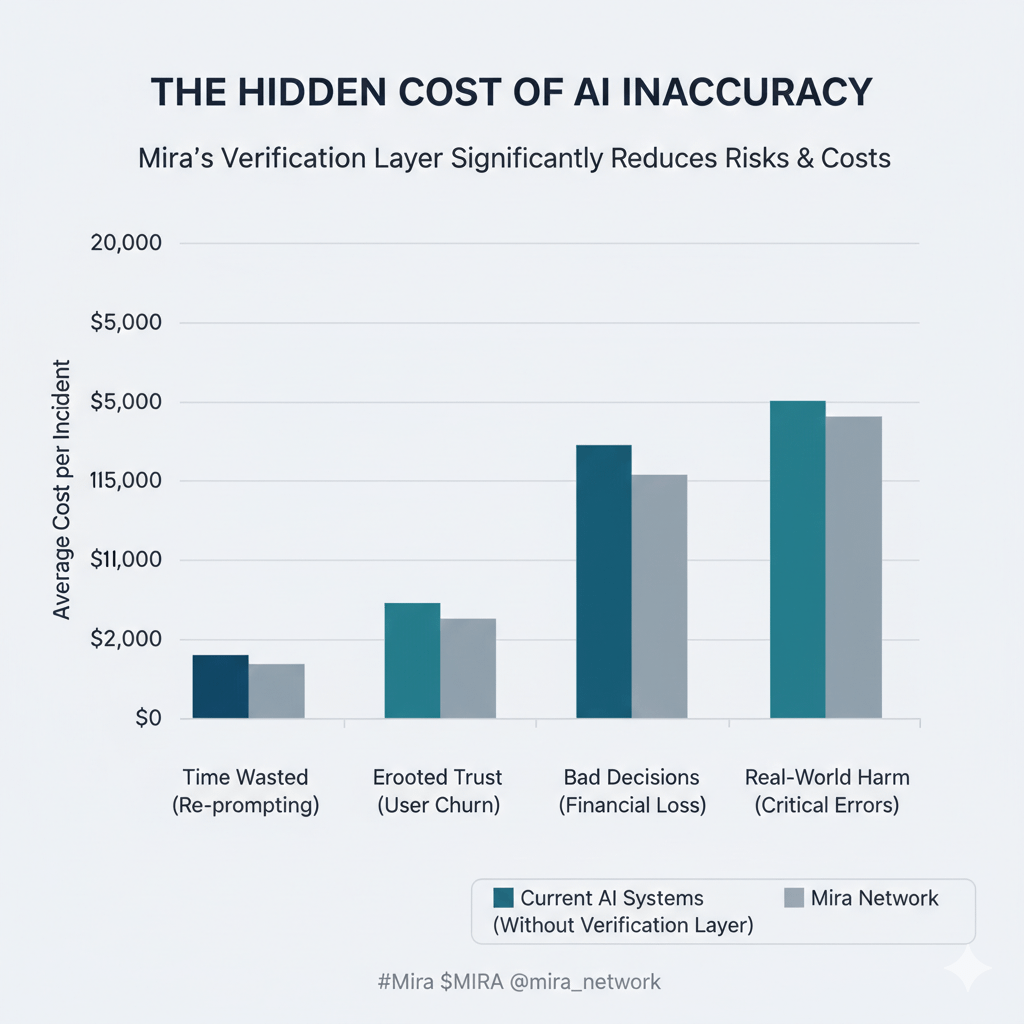

When I first started looking closely at Mira, what stood out wasn’t the common buzzwords around "decentralized AI" or endless scalability debates. It was the project's quiet obsession with the human toll of AI's flaws. We've all felt it: that nagging doubt when an AI spits out a confident but wrong answer, forcing us to double-check, re-prompt, or abandon it altogether. In high-stakes moments, like a doctor relying on diagnostic suggestions or a financial advisor parsing market insights, the cost of inaccuracy isn't just wasted time; it's eroded trust, bad decisions, and real-world harm. Mira reframes this as a foundational problem, arguing that unverified AI outputs create invisible friction that holds back mass adoption. The idea that really clicked for me was treating AI not as an infallible oracle but as a fallible tool that needs a trust layer, much as blockchains set out to protect finance from central points of failure.

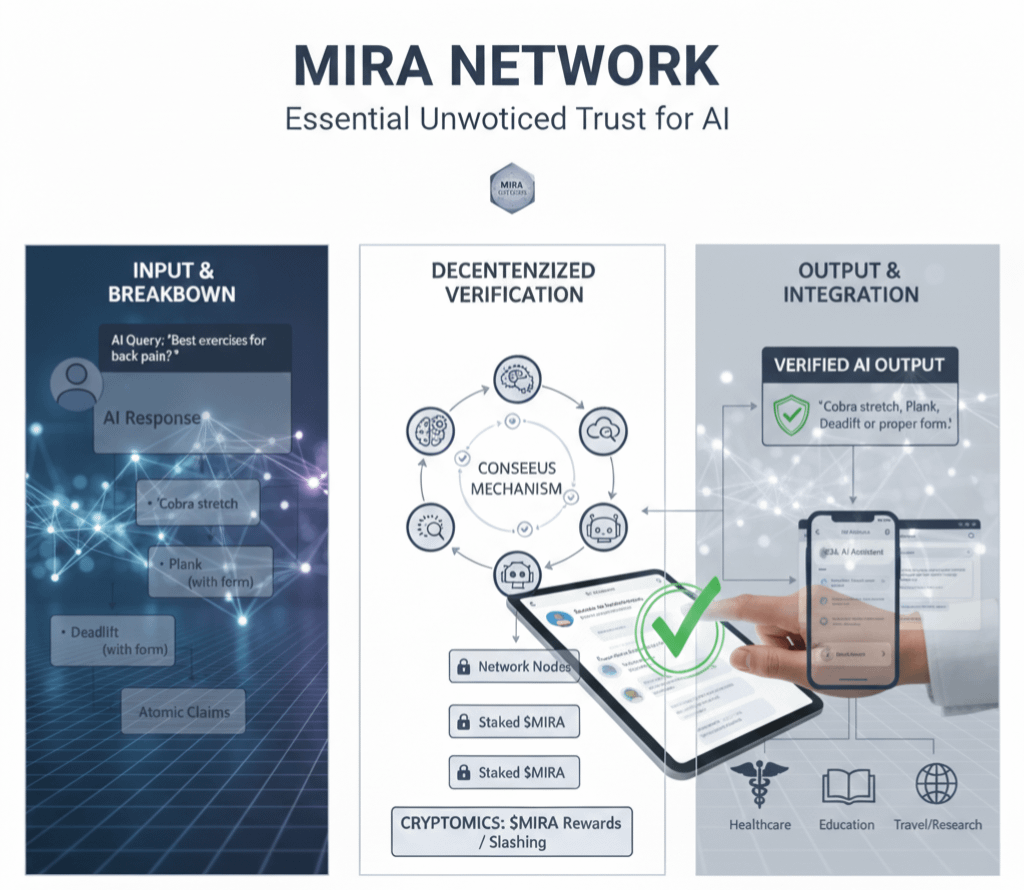

Diving deeper, Mira's core mechanics build this layer methodically. First, it breaks AI responses down into "atomic claims": small, verifiable units of information, so that each part can be scrutinized on its own rather than as one holistic output. Then, a decentralized network of nodes, each running diverse language models, cross-verifies these claims through consensus, weeding out hallucinations, biases, and inconsistencies. Finally, cryptoeconomic primitives like staking incentivize honest participation: nodes put skin in the game and face slashing for dishonesty, so the system polices itself without a central authority. It's boring but brilliant, relying on collective intelligence over singular genius.
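To make those three steps concrete, here is a toy sketch in Python. It is not Mira's actual implementation: the sentence-based claim splitter, the two-thirds threshold, the 10% slash rate, and every name in it (`Node`, `split_into_claims`, `verify_claim`) are assumptions chosen for illustration, and it treats "voting against the consensus" as a crude stand-in for dishonesty.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class Node:
    """A hypothetical verifier node with staked collateral."""
    name: str
    stake: float


def split_into_claims(response: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(
    votes: dict[str, bool],
    nodes: dict[str, Node],
    threshold: float = 2 / 3,   # assumed supermajority, not a documented value
    slash_rate: float = 0.1,    # assumed penalty, not a documented value
) -> bool | None:
    """Tally node votes on one claim.

    Returns the consensus verdict, or None if no verdict reaches the threshold.
    Nodes that voted against a reached consensus lose slash_rate of their stake.
    """
    verdict, count = Counter(votes.values()).most_common(1)[0]
    if count / len(votes) < threshold:
        return None  # no consensus, so nobody is slashed
    for name, vote in votes.items():
        if vote != verdict:
            nodes[name].stake *= 1 - slash_rate
    return verdict


# Three nodes vote on the second claim; two reject it, one accepts it.
nodes = {n: Node(n, 100.0) for n in ("a", "b", "c")}
claims = split_into_claims("Paris is in France. The Seine flows through Berlin.")
result = verify_claim({"a": False, "b": False, "c": True}, nodes)
```

Here the claim is rejected by a two-to-one vote and the dissenting node loses part of its stake. A real network would need something far more careful than sentence splitting and a flat penalty, but the shape is the same: small claims, many independent judges, and a cost for being wrong.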

This ties into a growing ecosystem where reliability becomes seamless. Developers can integrate Mira via an OpenAI-compatible API, embedding verified AI into apps without users sensing the underlying blockchain. Their flagship product, Klok, exemplifies this: it's an everyday AI assistant where queries yield consensus-backed answers, removing the hesitation of "Is this right?" Imagine chatting with an AI about travel plans or research, free from the immersion-breaking doubt that errors create. Beyond Klok, Mira envisions broader adoption in sectors like healthcare, where inaccurate outputs could mean misdiagnoses, or education, where flawed information misleads learners.
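In practice, "OpenAI-compatible" usually means a client sends the same chat-completions request shape to a different base URL. The sketch below only builds such a request with Python's standard library and does not send it. The base URL, API key, and model name are placeholders rather than real Mira values, so take the actual ones from the provider's documentation.

```python
import json
import urllib.request

# Placeholders only: none of these are real Mira values.
BASE_URL = "https://api.example.invalid/v1"  # hypothetical endpoint
API_KEY = "YOUR_API_KEY"
MODEL = "example-model"  # hypothetical model name


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request in the OpenAI wire format."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


request = build_chat_request("Plan a three-day trip to Lisbon.")
```

The appeal of this design is that an app already written against that request format would, in principle, only need its base URL and credentials changed.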

Stepping back, there are honest tradeoffs. Mira's emphasis on robustness trades raw efficiency for thoroughness: verification might add latency or slight costs in high-volume scenarios, and it depends on a model pool diverse enough to avoid groupthink. In a world chasing speed, this could feel like a step sideways, especially if node participation lags in the early stages. Yet that's the pragmatic skepticism Mira invites: reliability isn't free, but the alternative, rampant AI errors, is costlier.

If Mira succeeds, most users won’t even notice the blockchain or the hum of verification; AI will just work, becoming background infrastructure like the electricity that lights our homes without a flicker of doubt. That might be the most human strategy in crypto: prioritizing quiet trust over spectacle, so we can finally lean on AI without looking back.